Some Quick Gaming Numbers at 4K, Max Settings

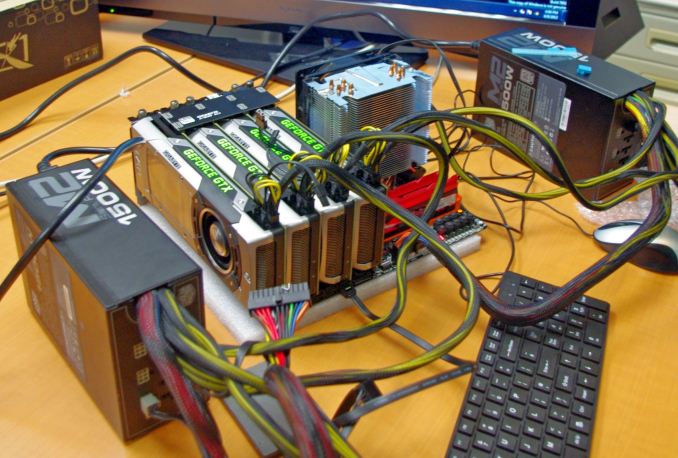

by Ian Cutress on July 1, 2013 8:00 AM EST

Part of my extra-curricular testing post Computex this year put a Sharp 4K30 monitor in my hands for three days, along with a variety of AMD and NVIDIA GPUs on an overclocked Haswell system. With my test-bed SSD at hand and limited time, I was able to run my normal motherboard gaming benchmark suite at this crazy resolution (3840x2160) for several GPU combinations. Many thanks to GIGABYTE for this brief but eye-opening opportunity.

The test setup is as follows:

Intel Core i7-4770K @ 4.2 GHz, High Performance Mode

Corsair Vengeance Pro 2x8GB DDR3-2800 11-14-14

GIGABYTE Z87X-OC Force (PLX 8747 enabled)

2x GIGABYTE 1200W PSU

Windows 7 64-bit SP1

Drivers: GeForce 320.18 WHQL / Catalyst 13.6 Beta

GPUs:

NVIDIA:

| GPU | Model | Cores / SPs | Core MHz | Memory Size | Memory MHz | Memory Bus |
|---|---|---|---|---|---|---|
| GTX Titan | GV-NTITAN-6GD-B | 2688 | 837 | 6 GB | 1500 | 384-bit |
| GTX 690 | GV-N690D5-4GD-B | 2x1536 | 915 | 2 x 2 GB | 1500 | 2x256-bit |
| GTX 680 | GV-N680D5-2GD-B | 1536 | 1006 | 2 GB | 1500 | 256-bit |
| GTX 660 Ti | GV-N66TOC-2GD | 1344 | 1032 | 2 GB | 1500 | 192-bit |

AMD:

| GPU | Model | Cores / SPs | Core MHz | Memory Size | Memory MHz | Memory Bus |
|---|---|---|---|---|---|---|
| HD 7990 | GV-R799D5-6GD-B | 2x2048 | 950 | 2 x 3 GB | 1500 | 2x384-bit |
| HD 7950 | GV-R795WF3-3GD | 1792 | 900 | 3 GB | 1250 | 384-bit |
| HD 7790 | GV-R779OC-2GD | 896 | 1075 | 2 GB | 1500 | 128-bit |
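
As a rough guide to how these cards compare on memory throughput, peak bandwidth can be estimated from the memory clock and bus width listed for each GPU. The sketch below assumes the listed memory clocks are GDDR5 clocks that move four transfers per clock (so 1500 MHz behaves as 6000 MT/s); that multiplier and the helper name `peak_bandwidth_gb_s` are assumptions for illustration, not figures from the test notes.

```python
# Rough theoretical peak memory bandwidth per GPU.
# Assumption: GDDR5 delivers 4 data transfers per listed memory clock.
GDDR5_TRANSFERS_PER_CLOCK = 4


def peak_bandwidth_gb_s(memory_mhz, bus_width_bits):
    """Return peak bandwidth in GB/s (1 GB = 10**9 bytes) for one GPU."""
    transfers_per_second = memory_mhz * 1e6 * GDDR5_TRANSFERS_PER_CLOCK
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


# (memory MHz, bus width in bits) per single GPU, taken from the GPU list.
CARDS = {
    "GTX Titan": (1500, 384),
    "GTX 680": (1500, 256),
    "GTX 660 Ti": (1500, 192),
    "HD 7950": (1250, 384),
    "HD 7790": (1500, 128),
}

if __name__ == "__main__":
    for name, (mhz, bus) in CARDS.items():
        print(f"{name}: {peak_bandwidth_gb_s(mhz, bus):.0f} GB/s")
```

The dual-GPU cards (GTX 690, HD 7990) are left out because each GPU on those boards has its own memory pool; the per-GPU figure would match the GTX 680 and a 384-bit 1500 MHz part respectively.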

For some of these GPUs we had several of the same model at hand to test. As a result, we tested from one GTX Titan to four, 1x GTX 690, 1x and 2x GTX 680, 1x GTX 660 Ti, 1x HD 7990, 1x and 3x HD 7950, and 1x HD 7790. There were several more groups of GPUs available, but alas we did not have time. Also, for the time being we are not doing any deeper analysis of the multi-GPU AMD setups, which we know can have issues – as I have not got to grips with FCAT personally, I thought it would be more beneficial to run numbers than to learn new testing procedures.

Games:

As I only had my motherboard gaming tests available and little time to download fresh ones (you would be surprised at how slow in general Taiwan internet can be, especially during working hours), we have a standard array of Metro 2033, Dirt 3 and Sleeping Dogs. Each one was run at 3840x2160 and maximum settings in our standard Gaming CPU procedures (maximum settings as the benchmark GUI allows).
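
To put the resolution in context, here is a small sketch of the raw pixel counts involved. It treats pixel count as a first-order proxy for rendering load, which is an assumption: real scaling also depends on the engine, anti-aliasing and memory limits. The function names are illustrative only.

```python
# Compare the number of pixels rendered per frame at common resolutions.

def pixel_count(width, height):
    """Total pixels in one frame."""
    return width * height


def relative_pixels(target, baseline):
    """How many times more pixels `target` has than `baseline`."""
    return pixel_count(*target) / pixel_count(*baseline)


UHD_4K = (3840, 2160)
QHD_1440P = (2560, 1440)
FHD_1080P = (1920, 1080)

if __name__ == "__main__":
    print(pixel_count(*UHD_4K))                  # 8294400 pixels per frame
    print(relative_pixels(UHD_4K, FHD_1080P))    # 4.0
    print(relative_pixels(UHD_4K, QHD_1440P))    # 2.25
```

In other words, every frame at 3840x2160 has four times the pixels of a 1080p frame, which goes some way to explaining the GPU counts needed below.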

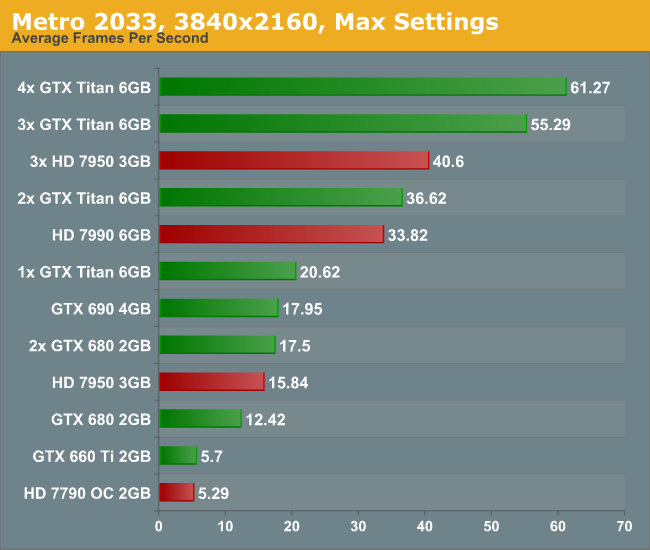

Metro 2033, Max Settings, 3840x2160:

Straight off the bat is a bit of a shocker – to get 60 FPS we need FOUR Titans. Three 7950s performed at 40 FPS, though there was plenty of microstutter visible during the run. For both the low end cards, the 7790 and 660 Ti, the full quality textures did not seem to load properly.

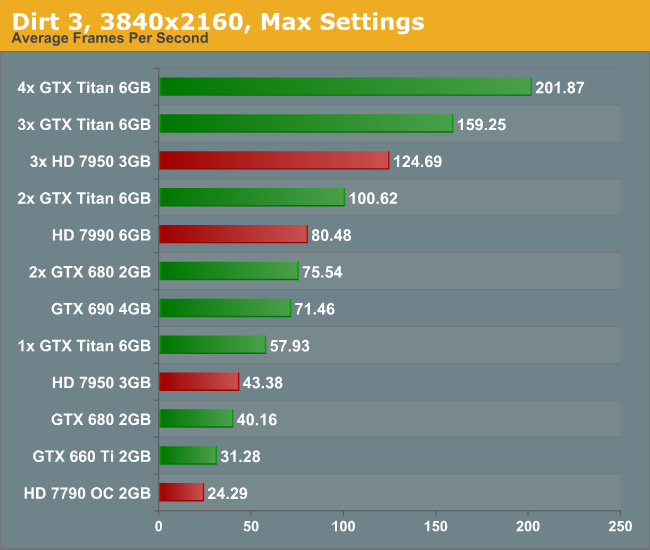

Dirt 3, Max Settings, 3840x2160:

Dirt is a title that loves MHz and GPU power, and due to the engine is quite happy to run around 60 FPS on a single Titan. Understandably this means that for almost every other card you need at least two GPUs to hit this number, more so if you have the opportunity to run 4K in 3D.

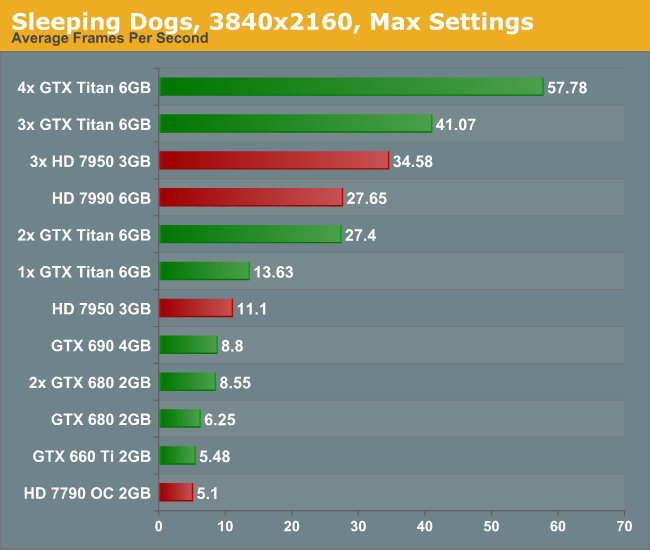

Sleeping Dogs, Max Settings, 3840x2160:

Similarly to Metro, Sleeping Dogs (with full SSAA) can bring graphics cards down to their knees. Interestingly during the benchmark some of the scenes that ran well were counterbalanced by the indoor manor scene which could run slower than 2 FPS on the more mid-range cards. In order to feel a full 60 FPS average with max SSAA, we are looking at a quad-SLI setup with GTX Titans.

Conclusion:

First of all, the minute you experience 4K with appropriate content it is worth a long double take. With a native 4K screen and a decent frame rate, it looks stunning. Although you have to sit further back to take it all in, it is fun to get up close and see just how good the image can be. The only downside with my testing (apart from some of the low frame rates) is when the realisation that you are at 30 Hz kicks in. The visual tearing of Dirt3 during high speed parts was hard to miss.

But the newer the game, and the more elaborate you wish to be with the advanced settings, then 4K is going to require horsepower and plenty of it. Once 4K monitors hit a nice price point for 60 Hz panels (sub $1500), the gamers that like to splash out on their graphics cards will start jumping on the 4K screens. I mention 60 Hz because the 30 Hz panel we were able to test on looked fairly poor in the high FPS Dirt3 scenarios, with clear tearing on the ground as the car raced through the scene. Currently users in North America can get the Seiki 50” 4K30 monitor for around $1500, and they recently announced a 39” 4K30 monitor for around $700. ASUS are releasing their 4K60 31.5” monitor later this year for around $3800 which might bring about the start of the resolution revolution, at least for the high-end prosumer space.
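
The 30 Hz versus 60 Hz point can be illustrated with some simple arithmetic. This is a minimal sketch that assumes vsync is off and the GPU renders at a steady rate, neither of which was measured here; the helper names are hypothetical.

```python
# Refresh interval of a panel, and how many rendered frames land in one
# refresh when the GPU runs faster than the panel (vsync off, steady rate).

def refresh_interval_ms(refresh_hz):
    """Time the panel takes for one refresh, in milliseconds."""
    return 1000.0 / refresh_hz


def frames_per_refresh(render_fps, refresh_hz):
    """Average number of rendered frames delivered during one refresh."""
    return render_fps / refresh_hz


if __name__ == "__main__":
    for hz in (30, 60):
        interval = refresh_interval_ms(hz)
        ratio = frames_per_refresh(60, hz)
        print(f"{hz} Hz panel: {interval:.1f} ms per refresh, "
              f"{ratio:.1f} frames per refresh at 60 FPS")
```

At around 60 FPS, a 30 Hz panel receives roughly two rendered frames per refresh, so each displayed image can contain parts of more than one frame, which is where the visible tear line comes from.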

All I want to predict at this point is that driving screen resolutions up will have to be matched by a sharp increase in graphics card performance, as well as better multi-card driver compatibility. No matter the resolution, enthusiasts will want to run their games with all the eye candy, even if it takes three or four GTX Titans to get there. For the rest of us right now on our one or two mid-to-high end GPUs, we might have to wait 2-3 years for the prices of the monitors to come down and the power of mid-range GPUs to go up. These are exciting times, and we have not even touched what might happen in multiplayer. The next question is the console placement – gaming at 4K would be severely restrictive when using the equivalent of a single 7850 on a Jaguar core, even if it does have a high memory bandwidth. Roll on PlayStation 5 and Xbox Two (Four?), when 4K TVs in the home might actually be a thing by 2023.

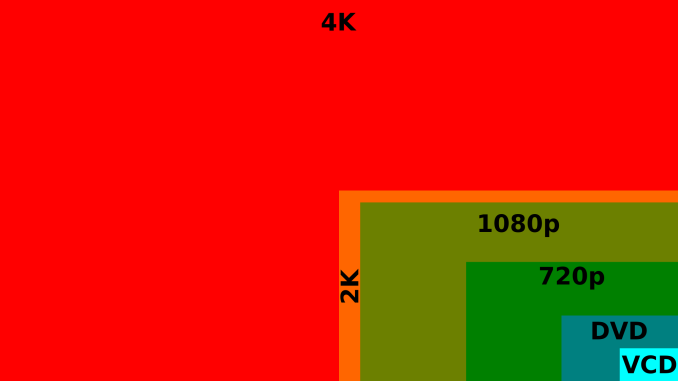

16:9 4K Comparison image from Wikipedia

134 Comments


EzioAs - Monday, July 1, 2013

Since mid-range cards aren't really that strong yet for 2560x1440(/1600), I'd say 2-3 years waiting for it to be strong enough for 4K is wishful thinking and at the same time, games will be even more demanding. Still, very nice to see the numbers though.

Thanks Ian.

B3an - Tuesday, July 2, 2013

Yeah there's no way even dual mid-range cards will handle games at 4k in just 2 - 3 years.

I'm also expecting quite a graphics jump and especially a big VRAM usage jump because of the new consoles and the good amount of memory they have. We're going to start seeing very high res textures. So mid-range cards will also start needing 6GB+ for PC games, especially @ 4k res in 2 - 3 years time.

MordeaniisChaos - Sunday, July 7, 2013

Next year, video cards will double in density. 3 years from now, that will be doubled. In other words, the systems in 3 years will be hugely powerful compared to what we have now, much less the nearly year old Titan, which again, you are comparing to a new video card which will be twice as dense as a video card that is twice as dense as the Titan, approximately. Think of what card was released 4 years before the 680, and look at how they stack up performance-wise.

Also keep in mind that VRAM is a big part of all of this, and all signs point to the next generation of cards having more VRAM standard.

turbosloth - Wednesday, July 24, 2013

Um, the GTX Titan isn't anywhere near a year old. I don't think that this meteoric rise in GPU power will happen quite as fast as you're expecting/hoping for, especially in reasonably priced consumer products.

Jergos - Saturday, November 23, 2013

MordeaniisChaos is citing Moore's Law (http://en.wikipedia.org/wiki/Moore's_law). His post is entirely accurate.

The main problem with the future of Moore's Law is the actual physical boundaries. Eventually we will get to the atomic size limit of transistors, which means that some serious innovation will have to occur to tiptoe around that boundary. We don't know when we'll hit that limit but it'll happen. Until then MordeaniisChaos will be correct.

HSR47 - Saturday, January 25, 2014

That's an entirely inaccurate reading of Moore's law:

Moore's law relates to the increase in transistor count, and transistor count does NOT track 1:1 with overall performance.

zerockslol - Thursday, May 1, 2014

The first Titan was released Feb 2013, it's now May 2014 which is more than a year after

Thatguy97 - Sunday, December 18, 2016

Lol

airmantharp - Tuesday, July 2, 2013

I'd think that mid-range cards would actually do a lot better at 4k if they simply all had their VRAM doubled- especially the Nvidia cards with 2GB/GPU. An 8GB GTX690 should be pretty potent at 4k if settings are balanced out a little.

Note that I appreciate Ian pushing the sliders all the way to the right, to set a good performance baseline for 4k. I'm sure they'll have much more reasonable reviews when 4k60 panels in desktop form factors start dipping into the enthusiast friendly <$1000 bracket in the next year or so.

ehpexs - Wednesday, July 3, 2013

Even a decent card like a 7950 has trouble at high reses. I run triple 2560x1440 27s. For one display it's fine, but for three, we're talking medium settings in Crysis 3 and BF3 with the cards being badly vram capped. That was one thing I felt was missing in this review, vram usage.