VESA Rolls Out DisplayHDR 1.2 Spec: Adding Color Accuracy, Black Crush, & Wide-Color Gamuts For All

by Ryan Smith on May 7, 2024 12:00 PM EST

VESA this morning is taking the wraps off of the next iteration of its DisplayHDR monitor certification standard, DisplayHDR 1.2. Designed to raise the bar on display quality, the updated DisplayHDR conformance test suite imposes new luminance, color gamut, and color accuracy requirements that extend across the entire spectrum of DisplayHDR tiers – including the entry-level DisplayHDR 400 tier. With vendors able to begin certifying displays for the new standard immediately, the display technology group is aiming to address the advancements in the display technology market over the last several years, while enticing display manufacturers to make use of them to deliver better desktop and laptop displays than before.

Altogether, DisplayHDR 1.2 is easily the biggest update to the standard since it launched in 2017, and in many respects its first significant overhaul. DisplayHDR 1.2 doesn’t add any new tiers to the standard (the way the 1400 tier was added in an earlier revision); instead, it’s all about increasing and/or tightening the specifications at each of the existing tiers. In short, VESA is raising the bar for displays to reach DisplayHDR compliance, requiring a higher level of performance and testing for more of the corner cases that trip up lesser displays.

All of these changes come after more than half a decade of technology improvements in the display space. Whereas even the original DisplayHDR 400 requirements represented a modestly premium display in 2017, nowadays even sub-$200 displays can hit those relatively loose requirements, as panels and backlighting solutions have improved. And at the high end of things, full array local dimming (FALD) displays have gone from hundreds of zones to thousands. All of which has finally pushed VESA’s member companies into agreeing to higher standards going forward.

DisplayHDR 400: 8-bit w/FRC & DCI-P3 Panels Now Required

The updated specifications touch every tier of the DisplayHDR standard. However, the biggest changes are going to be felt at DisplayHDR 400, the entry-level tier. Essentially a compromise between what mainstream, mass-market display technology could do and a set of basic requirements for displaying HDR content, DisplayHDR 400 has stood out as a generation behind the other tiers since its introduction. And while it will always remain the lowest tier of the specification, DisplayHDR 1.2 is getting rid of many of the unique allowances at this tier, bringing it closer to its higher-quality siblings.

The biggest change here is that while DisplayHDR 400 keeps the 8-bit panel requirement of its predecessor, the underlying display driver IC now needs to support 10-bit output via frame rate control (FRC). Otherwise known as temporal dithering, 2-bit FRC is how many displays on the market show 10-bit content today, combining an 8-bit panel with dithering to interpolate the missing in-between colors. Previously, DisplayHDR 400 was the only tier not to mandate FRC support. Now all tiers, from 400 to 1400, will require at minimum an 8-bit panel with simulated 10-bit (8b+2b) output via FRC.
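To make 2-bit FRC a little more concrete, here is a minimal Python sketch of the simplest purely temporal scheme, assuming a 4-frame cycle and a plain divide-by-four mapping from 10-bit to 8-bit codes. Real driver ICs generally use their own spatial and temporal dither patterns, so `frc_frames` is a hypothetical helper for illustration only, not how any particular panel works.

```python
def frc_frames(value10):
    """Approximate a 10-bit code (0-1023) with a repeating 4-frame cycle of 8-bit codes.

    The 8-bit base is value10 // 4; the 2 leftover bits decide how many of the
    4 frames are bumped up by one step, so the time-average lands in between.
    """
    base, extra = divmod(value10, 4)
    frames = []
    for i in range(4):
        level = base + 1 if i < extra else base
        frames.append(min(level, 255))  # an 8-bit panel cannot exceed code 255
    return frames
```

For example, `frc_frames(513)` returns `[129, 128, 128, 128]`, which averages to 128.25 in 8-bit steps, i.e. the 10-bit level 513. This naive mapping cannot reach 10-bit codes 1021 to 1023 because the bumped-up frame would exceed 255, which is one reason real implementations scale the ranges differently.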

| VESA DisplayHDR Requirements (Summary) | 400 v1.1 | 400 v1.2 | 500 v1.2 | 600 v1.2 | 1000 v1.2 |
|---|---|---|---|---|---|
| Min Luminance (10% APL, nits) | 400 | 400 | 500 | 600 | 1000 |
| Max Black Luminance (nits) | 0.4 | 0.4 | 0.1 | 0.1 | 0.05 |
| Static Contrast Ratio | N/A | 1300:1 | 7000:1 | 8000:1 | 30000:1 |
| sRGB Gamut Coverage | 95% | 99% | 99% | 99% | 99% |
| DCI-P3 Gamut Coverage | N/A | 90% | 95% | 95% | 95% |
| Panel Bit Depth | 8-bit | 8-bit | 8-bit | 8-bit | 8-bit |
| Display IC Bit Depth | 8-bit | 8-bit + 2-bit FRC | 8-bit + 2-bit FRC | 8-bit + 2-bit FRC | 8-bit + 2-bit FRC |
| HDR Color Accuracy | N/A | Delta-TP < 8 | Delta-TP < 8 | Delta-TP < 8 | Delta-TP < 6 |
| Expected Backlighting | Global | Global | 1D/1.5D Zone Edge-Lit | 1D/1.5D Zone Edge-Lit | 2D FALD |

To be sure, even DisplayHDR 400 displays were already required to be able to ingest a 10-bit per channel input; but at minimum spec, they weren’t really doing much with that higher precision information up until now.

With the larger number of colors available through the greater bit depth at the DisplayHDR 400 tier, DCI-P3 color gamut support is also being mandated for the first time. Whereas the old standard targeted the sRGB color space squarely, with a 95% coverage requirement, DisplayHDR 1.2 requires 90% coverage of the larger DCI-P3-D65 gamut (while sRGB coverage moves to 99%). This not-so-coincidentally matches the gamut requirement for the 500/600/1000 tiers under DisplayHDR 1.1, meaning that tomorrow’s basic DisplayHDR 400 displays will have to meet the same gamut requirements as today’s better displays.

All told, this raises the bar on DisplayHDR significantly. The minimum specifications for the standard are now largely aligned with the HDR10 video standard itself – 10-bit color with a wide color gamut. So whereas the old 400 tier represented a solid sRGB display with above-average contrast, DisplayHDR 400 now starts with 10-bit (8-bit + FRC) displays capable of reproducing a reasonably wide color gamut.

Meanwhile, raising the bar doesn’t just apply to the 400 tier. DisplayHDR 500+ displays are seeing their own color gamut requirements tightened up; going forward, they’ll need to hit 95% or better of the DCI-P3 color gamut in order to qualify. That gamut width was previously only required for the top-end 1400 tier, so we’re seeing some DisplayHDR 1400 requirements trickle down to 500+, while 400 has become a true wide color gamut standard.
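Gamut coverage figures such as “90% of DCI-P3” are usually computed as the share of the reference gamut’s area on a chromaticity diagram that the display’s measured gamut overlaps. The sketch below assumes the CIE 1976 u'v' diagram and the standard xy primaries for sRGB and DCI-P3 (DCI-P3-D65 uses the same primaries as DCI-P3; only the white point differs). The article does not spell out VESA’s exact procedure, coverage numbers shift somewhat depending on which diagram is used, and all helper names here are hypothetical.

```python
# CIE 1931 xy chromaticities of the R, G, B primaries.
SRGB = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
DCI_P3 = [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)]

def to_uv(pt):
    """Convert CIE 1931 xy to CIE 1976 u'v'."""
    x, y = pt
    d = -2 * x + 12 * y + 3
    return (4 * x / d, 9 * y / d)

def signed_area(poly):
    """Shoelace formula; positive for counter-clockwise vertex order."""
    return sum(x1 * y2 - x2 * y1
               for (x1, y1), (x2, y2) in zip(poly, poly[1:] + poly[:1])) / 2

def side(a, b, p):
    """Positive if p lies to the left of the directed line a -> b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def clip(subject, clipper):
    """Sutherland-Hodgman: clip `subject` against a convex, counter-clockwise `clipper`."""
    out = list(subject)
    for a, b in zip(clipper, clipper[1:] + clipper[:1]):
        inp, out = out, []
        for i, cur in enumerate(inp):
            prev = inp[i - 1]
            s_prev, s_cur = side(a, b, prev), side(a, b, cur)
            if (s_cur >= 0) != (s_prev >= 0):
                # The edge prev -> cur crosses the clip line: keep the crossing point.
                t = s_prev / (s_prev - s_cur)
                out.append((prev[0] + t * (cur[0] - prev[0]),
                            prev[1] + t * (cur[1] - prev[1])))
            if s_cur >= 0:
                out.append(cur)
        if not out:
            break
    return out

def coverage(display, reference):
    """Fraction of the reference gamut's u'v' area covered by the display gamut."""
    disp = [to_uv(p) for p in display]
    ref = [to_uv(p) for p in reference]
    if signed_area(disp) < 0:
        disp.reverse()  # the clipper must be counter-clockwise
    return abs(signed_area(clip(ref, disp))) / abs(signed_area(ref))
```

With these textbook primaries, a display with exactly DCI-P3 primaries covers all of sRGB, while an exact sRGB display covers only part of DCI-P3, which is why the 90% and 95% DCI-P3 targets require panels with more saturated primaries than a classic sRGB monitor.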

Updated Tests: More Precise White Points, Forcing Local Dimming

Besides improving the basic color qualities of DisplayHDR certified displays, the DisplayHDR 1.2 specification is also updating some of its existing tests to tighten standards or otherwise catch displays that were able to pass the major tests while obviously failing in other corner cases.

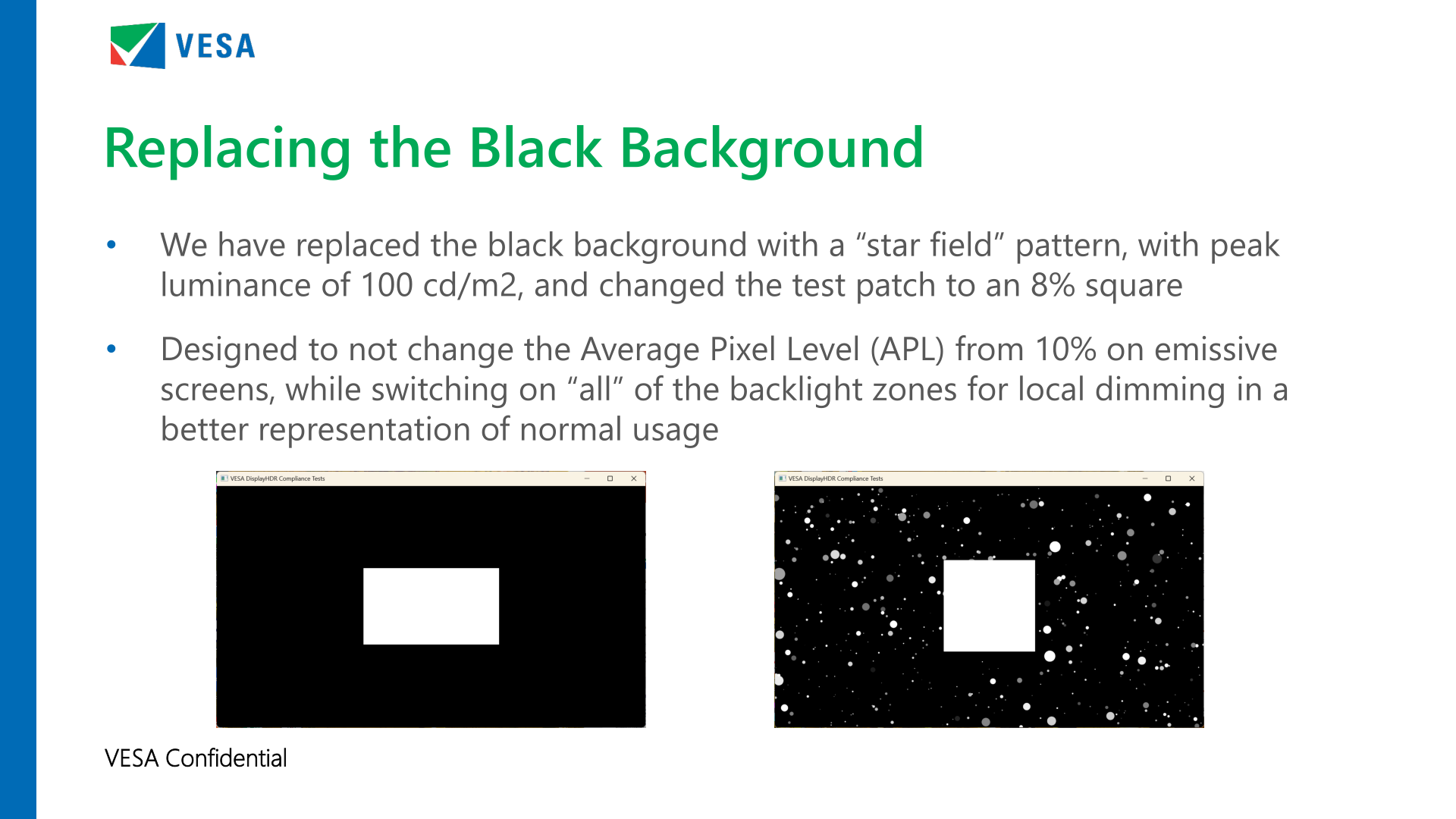

The big change here is that DisplayHDR 1.2 replaces the black background in many of its tests with what the organization calls a “star field” pattern. The idea is that the traditional white-square-on-black-background methodology wasn’t very representative of actual HDR content (which gets dark, but rarely gets to 0.00-nit pitch black), and it allowed displays to game the test by simply turning off large portions of their backlighting.

Going forward, the 10% APL test will use the new star field pattern, which consists of an 8% APL center square, with the remaining 2% of APL provided by grey stars of varying brightness elsewhere in the picture. The net result is still a total APL of 10%, but now displays need to be able to meet their brightness and contrast ratio requirements while having all (or virtually all) of their backlight zones active. The stars themselves will be no brighter than 100 nits, while the center square will continue to be at the display’s maximum HDR luminance level.
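As a rough illustration of how the star field keeps the total at 10% APL, the Python sketch below builds a tiny hypothetical frame: an 8% full-signal center patch plus dim single-pixel “stars” that add the remaining 2%. It assumes APL is the mean normalized signal level across the frame, and for simplicity it uses a single arbitrary star level (0.5) rather than the varying, at-most-100-nit stars of VESA’s actual pattern; the resolution, star size, and placement are all made up.

```python
import random

WIDTH, HEIGHT = 100, 100  # hypothetical, deliberately tiny frame (10,000 px)

def star_field_frame(star_level=0.5, seed=0):
    """Return a frame (list of rows) of normalized signal levels in [0, 1]."""
    frame = [[0.0] * WIDTH for _ in range(HEIGHT)]
    # Center patch: 40 x 20 px = 800 px = 8% of the frame, at full signal.
    pw, ph = 40, 20
    x0, y0 = (WIDTH - pw) // 2, (HEIGHT - ph) // 2
    for y in range(y0, y0 + ph):
        for x in range(x0, x0 + pw):
            frame[y][x] = 1.0
    # Stars: dim single pixels outside the patch, enough of them to add 2% APL.
    star_budget = 0.02 * WIDTH * HEIGHT         # 200 full-signal pixel equivalents
    n_stars = round(star_budget / star_level)   # 400 stars at level 0.5
    empty = [(x, y) for y in range(HEIGHT) for x in range(WIDTH) if frame[y][x] == 0.0]
    for x, y in random.Random(seed).sample(empty, n_stars):
        frame[y][x] = star_level
    return frame

def apl(frame):
    """Average picture level: mean normalized signal level over all pixels."""
    return sum(map(sum, frame)) / (len(frame) * len(frame[0]))
```

Because the stars are spread across the whole frame, a backlit display can no longer shut off every zone outside the center square and still render the picture correctly.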

It goes without saying that these changes are chiefly engineered to stress backlit displays, i.e. LCDs. Emissive displays, such as OLED displays, should have little trouble with this test since the APL isn’t changing and they can already do per-pixel lighting.

White point and luminance accuracy is the other major test update for the newest DisplayHDR test suite. DisplayHDR 1.2 is now testing displays over a much larger range of brightness levels, starting lower and going higher. Specifically, whereas the previous version of the test started at 5 nits and stopped at 50% of the display’s maximum brightness, the 1.2 test starts at 1 nit and goes up to almost 100% of the display’s brightness. Which, as VESA puts it, is a 10x increase in the luminance range tested.

The updated test isn’t just testing a larger range, either. The accuracy requirements at each point within the test are also tighter at all but the lowest luminance levels. The Delta-ITP tolerances (ΔE_ITP, the ITU-R BT.2124 color-difference metric that VESA uses for measuring errors) have been cut by up to a third, bringing the error limits down from 15 to 10 (note that the exact limit varies with the brightness level). Meanwhile, the error limit for 1 nit to 15 nits remains a Delta-ITP of 20.
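Delta-ITP (ΔE_ITP) is defined in ITU-R BT.2124: both colors are converted to the ICtCp representation from BT.2100, Ct is halved to form T, and the Euclidean distance in (I, T, P) is scaled by 720. The Python sketch below follows that published definition, starting from linear BT.2020 RGB in absolute nits. The article does not say how VESA’s own test tooling implements the metric or sets its luminance-dependent limits, so treat this as an illustration rather than the conformance procedure.

```python
import math

# SMPTE ST 2084 (PQ) constants, as used by ITU-R BT.2100.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32

def pq_encode(nits):
    """Absolute luminance in cd/m^2 -> PQ signal value in [0, 1]."""
    y = max(nits, 0.0) / 10000.0
    ym = y ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2

def rgb2020_to_ictcp(r, g, b):
    """Linear BT.2020 RGB (each channel in cd/m^2) -> (I, Ct, Cp) per BT.2100."""
    l = (1688 * r + 2146 * g + 262 * b) / 4096
    m = (683 * r + 2951 * g + 462 * b) / 4096
    s = (99 * r + 309 * g + 3688 * b) / 4096
    lp, mp, sp = pq_encode(l), pq_encode(m), pq_encode(s)
    i = 0.5 * lp + 0.5 * mp
    ct = (6610 * lp - 13613 * mp + 7003 * sp) / 4096
    cp = (17933 * lp - 17390 * mp - 543 * sp) / 4096
    return i, ct, cp

def delta_itp(ictcp_a, ictcp_b):
    """ITU-R BT.2124 Delta E_ITP, with T = 0.5 * Ct and P = Cp."""
    di = ictcp_a[0] - ictcp_b[0]
    dt = 0.5 * (ictcp_a[1] - ictcp_b[1])
    dp = ictcp_a[2] - ictcp_b[2]
    return 720 * math.sqrt(di * di + dt * dt + dp * dp)
```

For example, comparing a 100-nit grey target with a measured 105-nit grey gives a nonzero ΔE_ITP that comes entirely from the I term, since neutral greys have Ct and Cp of (essentially) zero.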

The combination of these two changes means that the brightness levels and their corresponding white points on new displays will need to be far more accurate – and consistently so from top to bottom – than what 1.0/1.1 displays were required to hit. Now only the darkest levels, below 1 nit, are not being tested.

New Tests Galore: HDR Color Accuracy, HDR vs. SDR Black Levels, & More

Last, but certainly not least, the DisplayHDR 1.2 specification is also introducing several new tests that displays will need to pass in order to achieve compliance. Most of these are brightness and contrast ratio related (the HDR in, well, HDR). But there is also a new test to improve color accuracy.

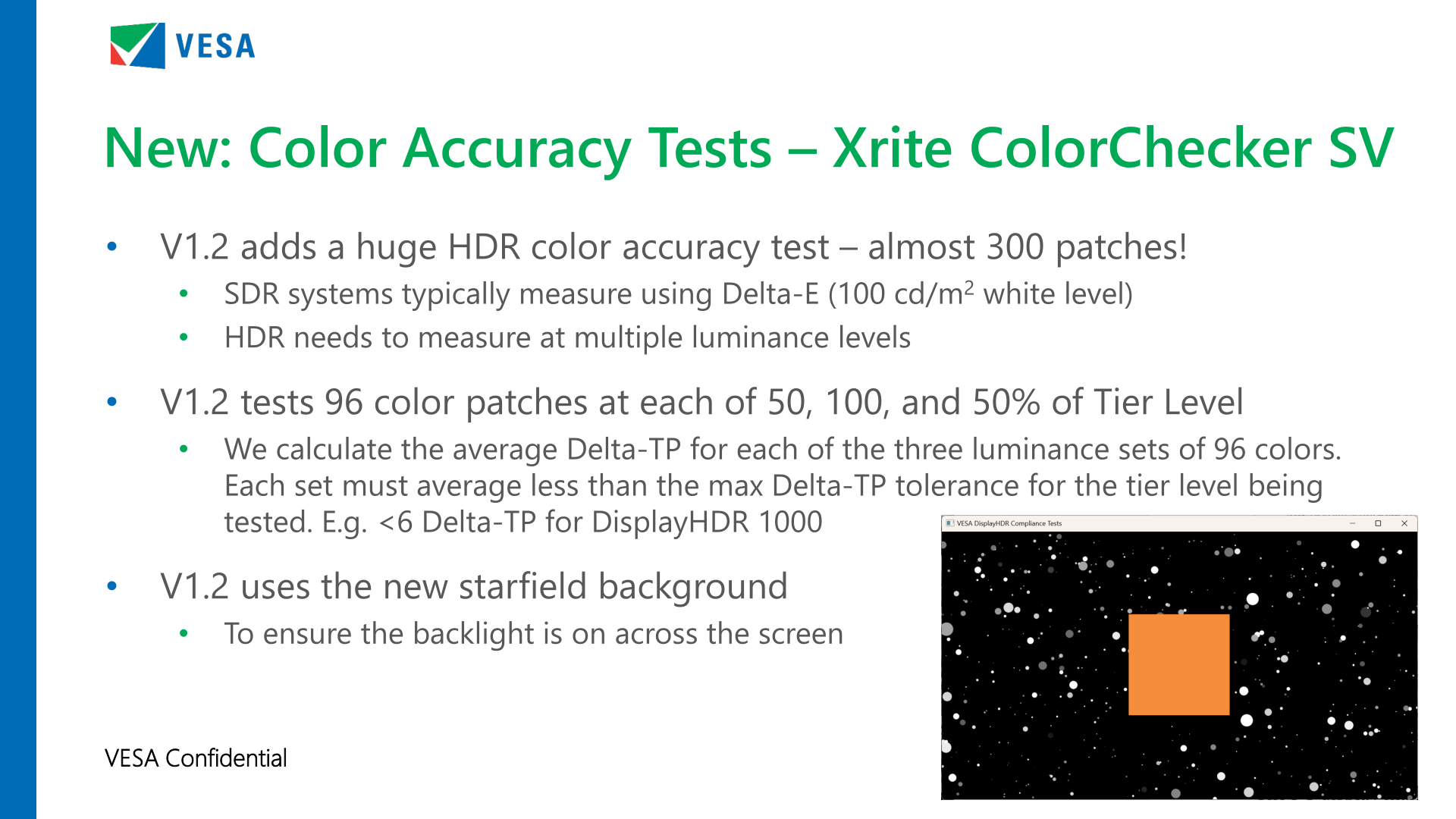

And it’s color accuracy where we’ll start. The new DisplayHDR standard incorporates a significant HDR color accuracy test, which is based on tests developed by X-Rite for their ColorChecker SV suite. Like the basic luminance test, this involves testing a small square patch, but this time for color accuracy rather than brightness.

Altogether, the DisplayHDR 1.2 HDR color accuracy test relies on 96 color patches, and each in turn is measured at 50 nits, 100 nits (the typical standard for SDR calibration), and then 50% of the display’s maximum rated brightness (i.e. the tier level). Which makes for just under 300 patches tested overall. And all of this is tested using the new star field pattern, in order to ensure that LCDs have their backlights turned on.
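The patch count works out to 96 patches at each of three luminance levels. This hypothetical `measurement_plan` helper just enumerates the (level, patch index) pairs for a given tier; the actual patch colors come from the ColorChecker-derived set and are not reproduced here.

```python
def color_test_levels(tier_nits):
    """50 nits, 100 nits, and 50% of the tier's rated peak luminance."""
    return [50, 100, tier_nits / 2]

def measurement_plan(tier_nits, n_patches=96):
    """Every (luminance level, patch index) pair to measure; indices are placeholders."""
    return [(level, patch)
            for level in color_test_levels(tier_nits)
            for patch in range(n_patches)]

plan = measurement_plan(1000)
print(len(plan), sorted({level for level, _ in plan}))
```

For DisplayHDR 1000, this yields 288 measurements at 50, 100, and 500 nits; for DisplayHDR 400, the top level drops to 200 nits, but the count stays at 288.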

The actual metric being used is a variant of VESA’s Delta-ITP method, which VESA calls Delta-TP – the change being that it removes the luminance component from the calculation, since this is a color accuracy test. The error margins depend on which DisplayHDR tier a display is being qualified for: a Delta-TP of 8 for DisplayHDR 400/500/600, and a Delta-TP of 6 for DisplayHDR 1000/1400. Do note, however, that this is an average score for each luminance level, not a hard limit – so individual patches can exceed the limit while a display still passes the test overall.
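The key detail is that the limit applies to the average Delta-TP at each luminance level, not to every patch. The sketch below assumes Delta-TP is BT.2124’s ΔE_ITP with the intensity term dropped, which matches the description above but may differ in detail from VESA’s definition. `TP_LIMITS` and `color_accuracy_passes` are hypothetical names, and the inputs are ICtCp triples.

```python
import math
from statistics import mean

def delta_tp(ictcp_a, ictcp_b):
    """Assumed Delta-TP: ITU-R BT.2124 Delta E_ITP with the intensity (I) term removed."""
    dt = 0.5 * (ictcp_a[1] - ictcp_b[1])
    dp = ictcp_a[2] - ictcp_b[2]
    return 720 * math.hypot(dt, dp)

# Per-tier limits on the *average* Delta-TP, as quoted in this article.
TP_LIMITS = {400: 8, 500: 8, 600: 8, 1000: 6, 1400: 6}

def color_accuracy_passes(tier, results):
    """results maps luminance level (nits) -> list of (target, measured) ICtCp pairs.

    Passes only if the average Delta-TP at every level is below the tier limit;
    individual patches are allowed to exceed it.
    """
    limit = TP_LIMITS[tier]
    return all(mean(delta_tp(t, m) for t, m in pairs) < limit
               for pairs in results.values())
```

For instance, four patches at one level with Delta-TP values of 12, 12, 2, and 2 average 7. That clears the < 8 limit for DisplayHDR 400/500/600 but not the < 6 limit for 1000/1400, even though two of those patches individually exceed both limits.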

Consequently, this HDR color accuracy test isn’t going to be a complete replacement for traditional factory display calibration and testing, as is common on today’s professional-focused monitors. But even this level of color testing means that display manufacturers are expected to have to implement batch calibration for their lower-tier DisplayHDR displays. And the Delta-TP 6 target for 1000+ displays will generally require individual, per-display calibration.

Meanwhile, addressing some corner cases in terms of brightness and contrast ratios, there are a few more tests to talk about. The most significant of these from an end-user perspective is arguably the HDR vs. SDR black level test, which, as the name says on the tin, tests a display’s black levels in both SDR and HDR modes.

This test is intended to combat SDR applications showing up with “hot” blacks in HDR mode. Ideally, the black level for SDR applications should be identical regardless of the mode the display/OS is in. But in practice, this is not always the case today. As a result, always-on HDR is not always useful today, as some displays are compromising SDR content in the process.

The test itself is relatively straightforward, using a 200 nit white patch next to a black patch, and measuring the contrast ratios between them. The ratios should be roughly the same in both a display’s SDR and HDR modes.
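A minimal way to express “roughly the same” contrast in both modes is to compare the two ratios against a tolerance. The article does not give VESA’s actual tolerance, so the 10% default below is purely a placeholder, and `sdr_hdr_black_consistent` is a hypothetical helper.

```python
def contrast_ratio(white_nits, black_nits):
    """Contrast between the 200-nit white patch and the adjacent black patch."""
    return white_nits / black_nits

def sdr_hdr_black_consistent(sdr, hdr, tolerance=0.10):
    """sdr and hdr are (white_nits, black_nits) readings taken in each mode.

    `tolerance` is a hypothetical placeholder for "roughly the same".
    """
    cr_sdr = contrast_ratio(*sdr)
    cr_hdr = contrast_ratio(*hdr)
    return abs(cr_hdr - cr_sdr) / cr_sdr <= tolerance
```

A display that shows 200 nits over 0.2 nits in SDR mode (1000:1) but lifts its black to 0.5 nits in HDR mode (400:1) would fail the check; that is exactly the “hot” black behavior the test targets.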

DisplayHDR 1.2 is also introducing a new static contrast ratio test, which we’re told the working group for the DisplayHDR standard considers one of their bigger wins. This test supplants the previous dynamic range test, which measured a single spot with two different images. Instead, the static test measures a single image at two (or more) locations, requiring a display to show mastery of complex images with multiple contrast ratio needs.

This new test, in turn, essentially comes in two different versions, depending on the DisplayHDR tier being tested and the backlighting technology that is meant to underlie that tier. The low-pattern test, which is designed for panels without local dimming (400) or with 1D/1.5D edge-lit dimming (500/600), uses a variation on the square/star field pattern. Meanwhile, the high-pattern test is intended to fail displays that don’t implement 2D dimming (FALD) by using a simpler pattern, but one that places a white border around the edges of the image. The end result is that only a FALD display can show all parts of the test image correctly.

Each DisplayHDR tier, in turn, has a different contrast ratio requirement. This starts as low as 1300:1 for the 400 tier, while the 1000 tier requires 30,000:1, and 50,000:1 for the top DisplayHDR 1400 tier.
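Because both readings now come from the same frame, the pass/fail check itself reduces to a ratio against the tier’s minimum. The minimums below are the ones quoted in this article; the function name and the use of a single white and a single black reading are simplifications, since the real test measures multiple locations on its low or high pattern.

```python
# Minimum static contrast ratios per tier, as quoted in this article.
STATIC_CR_MIN = {400: 1300, 500: 7000, 600: 8000, 1000: 30000, 1400: 50000}

def static_contrast_passes(tier, white_nits, black_nits):
    """white_nits and black_nits are read from different spots of ONE test frame,
    unlike the old dynamic range test, which measured one spot across two images."""
    return white_nits / black_nits >= STATIC_CR_MIN[tier]
```

For example, 420 nits against 0.3 nits (1400:1) clears the 400 tier, while 1000 nits against 0.05 nits works out to only 20,000:1, short of the 1000 tier’s 30,000:1.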

And on the dimmer end of things, DisplayHDR 1.2 is adding a black crush test. Which is another “does what it says on the tin” kind of test, making sure that displays aren’t crushing low brightness details (e.g. shadows) and rendering them as pure black. This is a problem with some current displays, which can crush dim content in order to make their blacks look inky.

Notably, this isn’t an accuracy test; it’s simpler than that, just making sure that various very-low luminance levels are distinguishable from each other. The test measures pure black (0 nits), as well as 0.05, 0.1, 0.3, and 0.5 nits. The measured luminance at each step must be distinct from, and higher than, that of the previous step, ensuring that shadow details are not getting wiped out at these low luminance levels.
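Since this is an ordering check rather than an accuracy check, it comes down to confirming that the measured luminances rise strictly from one step to the next. The sketch assumes one reading per stimulus level; `min_step` is a hypothetical knob for how far apart two readings must be to count as “distinct”, which the article does not quantify.

```python
# Stimulus levels quoted in this article, in nits.
STIMULUS_NITS = [0.0, 0.05, 0.1, 0.3, 0.5]

def black_crush_passes(measured, min_step=0.0):
    """measured[i] is the luminance read back for STIMULUS_NITS[i].

    Passes only if every reading exceeds the previous one by more than min_step
    (a hypothetical threshold; 0.0 means "strictly increasing").
    """
    if len(measured) != len(STIMULUS_NITS):
        raise ValueError("expected one reading per stimulus level")
    return all(hi - lo > min_step for lo, hi in zip(measured, measured[1:]))
```

A display that renders the 0.05-nit and 0.1-nit steps as pure black would produce repeated readings at the bottom of the ramp and fail, even if it tracks the brighter steps well.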

Finally, in addressing another edge case scenario, DisplayHDR 1.2 is introducing a subtitle flicker test. Subtitles represent something of a difficult workload for backlit displays, particularly for anything not using FALD, as the text needs to be a (relatively) consistent brightness while the brightness of the rest of the image will vary from frame to frame. Poor displays can’t modulate their brightness well enough to keep the two separate, which manifests itself as image flickering.

This test is a variant of the 10% APL test, using a 10-nit grey patch on top of an equally grey star field. Meanwhile, 100-nit subtitles are displayed at the bottom of the frame, and the grey patch is measured with the subtitles both enabled and disabled. DisplayHDR 400/500/600 allow for a 13% variance in patch brightness, while DisplayHDR 1000/1400 (and all of the OLED True Black tiers) allow a 10% variance.
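Assuming the variance is measured relative to the patch’s luminance with subtitles disabled (the article gives only the percentages, not the exact formula), the pass criterion can be sketched as follows. The dictionary keys are hypothetical labels; the limits are the 13% and 10% figures quoted above.

```python
FLICKER_LIMIT = {
    "DisplayHDR 400": 0.13, "DisplayHDR 500": 0.13, "DisplayHDR 600": 0.13,
    "DisplayHDR 1000": 0.10, "DisplayHDR 1400": 0.10,
    "True Black 400": 0.10, "True Black 500": 0.10, "True Black 600": 0.10,
}

def subtitle_flicker_passes(tier, patch_off_nits, patch_on_nits):
    """Compare the grey patch with subtitles off vs. on (assumed relative variance)."""
    variance = abs(patch_on_nits - patch_off_nits) / patch_off_nits
    return variance <= FLICKER_LIMIT[tier]
```

A patch that rises from 10.0 to 11.2 nits when the subtitles appear (a 12% swing) would pass at DisplayHDR 400 but fail at DisplayHDR 1000.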

Conformance Testing Starting Today, DisplayHDR 1.1 To Be Retired May 2025

Wrapping things up, with today’s announcement of DisplayHDR 1.2, companies can immediately begin certifying displays for the new standard if they’d like. And VESA is hoping to have some certified products to show off for the 2024 Display Week expo next week.

Otherwise, for products that were already in development for the 1.1 specification, VESA is once again going to have a transition period for the new standard. New displays can be certified for DisplayHDR 1.1 up until May of 2025, and meanwhile the window for certified laptops will be a bit longer, with a May 2026 cut-off.

As always, it bears mentioning that DisplayHDR testing remains optional for display manufacturers. But for those wishing to promote a product as “premium” by using the DisplayHDR branding – which is virtually all of them – the new DisplayHDR standards represent a significant increase in display requirements, especially at the DisplayHDR 400 tier. With 10-bit (8-bit + FRC) color and wide color gamut support now required across the entire DisplayHDR spec, we’re quickly approaching the point where the bulk of the display market, even if not capable of super high contrast ratios, is (finally) going to be able to display wide color gamuts at a useful precision.

9 Comments

crimsonson - Tuesday, May 7, 2024

"Delta-TP < 8" Buwahahhahahaha. That is a high Delta. WTF.

bubblyboo - Tuesday, May 7, 2024

Some improvements at least, even if minor. The original DisplayHDR spec was crazy easy to cheat with even 6 edge lit zones due to the garbage white box/black background test, when even most reviewers moved to checkerboard patterns long ago. The most recent edge lit DisplayHDR 1000 monitor I could find was the LG 32GQ950 from mid 2022, which retailed for $1300 and only had 2x16 edge lit zones, which means the dimming is basically useless in 99% of content due to blooming.

Dogers - Tuesday, May 7, 2024

And how do we know if a "HDRx00" display is meeting 1.0, 1.1 or 1.2 levels? Just the classic case of "if it doesn't say, assume it's the worst one"?

Ryan Smith - Tuesday, May 7, 2024

You can always check the VESA database if you want the full details. But 1.0 certification ended in 2020, and 1.1 certification will end in 2025. Meanwhile, monitor manufacturers don't typically keep a given model around for more than a few years.

Threska - Tuesday, May 7, 2024

Maybe someone will check that stuff. https://youtu.be/-bEKOp1GLDs

wr3zzz - Tuesday, May 7, 2024

No change to OLED certifications? Albeit it seems OLED VESA certification is kinda useless since the only differences is minimum peak luminance which AFAIK even TrueBlack600 is already left behind by 2023 LG and Samsung panels.

Ryan Smith - Tuesday, May 7, 2024

The luminance and contrast ratio rules haven't changed. But True Black displays still must clear all of the new tests for color accuracy and white point accuracy, as well as things like black crush.

boredsysadmin - Thursday, May 9, 2024

Mandatory XKCD on Standards: https://xkcd.com/927/

haukionkannel - Friday, May 10, 2024

Well... it is an improvement. I would like a test where also the stars would be measured for peak brightness, or that there would be stars with 100 nit, 200 nit, 300 nit... 10000 nit

So that we would really see what the screen is capable.