The Supermicro H11DSi Motherboard Mini-Review: The Sole Dual EPYC Solution

by Dr. Ian Cutress on May 13, 2020 8:00 AM EST

Posted in: Motherboards, AMD, Supermicro, Naples, EPYC, 10GbE, Rome, H11DSi

Users looking to build their own dual EPYC workstation or system, using completely off-the-shelf components, do not have a lot of options. Users can buy most of the CPUs at retail or from OEMs, as well as memory, a chassis, power supplies, coolers, and add-in cards. But the one item where there isn’t a lot of competition for these sorts of builds is the motherboard. Unless you go down the route of buying a server on rails with a motherboard already fitted, there are very limited dual EPYC motherboard options for users to just purchase. So few, in fact, that there are only two, both from Supermicro, and both are called the H11DSi. One variant has gigabit Ethernet, the other has 10GBase-T.

Looking For a Forest, Only Seeing a Tree

Non-proprietary motherboard options for building a single socket EPYC system are fairly numerous: there’s the Supermicro H11SSL, the ASRock EPYCD8-2T (read our review here), the GIGABYTE MZ31-AR0 (read our review here), or the ASUS KNPA-U16, all varying in feature set and starting from $380. For the dual socket space, however, there is only one option. The Supermicro H11DSi, H11DSi-NT, and other potential minor variants can be found at standard retailers from around $560-$670 and up, depending on source and additional features. All other solutions that we found were part of a pre-built server or system, often using non-standard form factors due to the requests of the customers those systems were built for. As the only ‘consumer’ focused motherboard, the H11DSi has a lot to live up to.

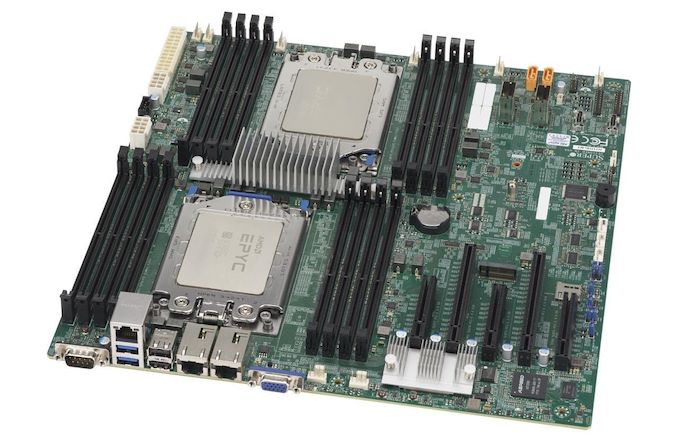

As with other EPYC boards in this space, users have to know which revision of the board they are getting – it’s the second revision of the board that supports both Rome and Naples processors. One of the early issues with the single socket models was that some of them were not capable of Rome support, even with an updated BIOS. It should be noted that as the H11DSi was built with Naples in mind to begin with, we are limited to PCIe 3.0 here, and not the PCIe 4.0 that Rome supports. As a result, we suspect that this motherboard might be more suited to users looking to extract the compute out of the Rome platform rather than expanded PCIe functionality. Unfortunately this means that there are no commercial dual socket EPYC motherboards with PCIe 4.0 support at the time of writing.

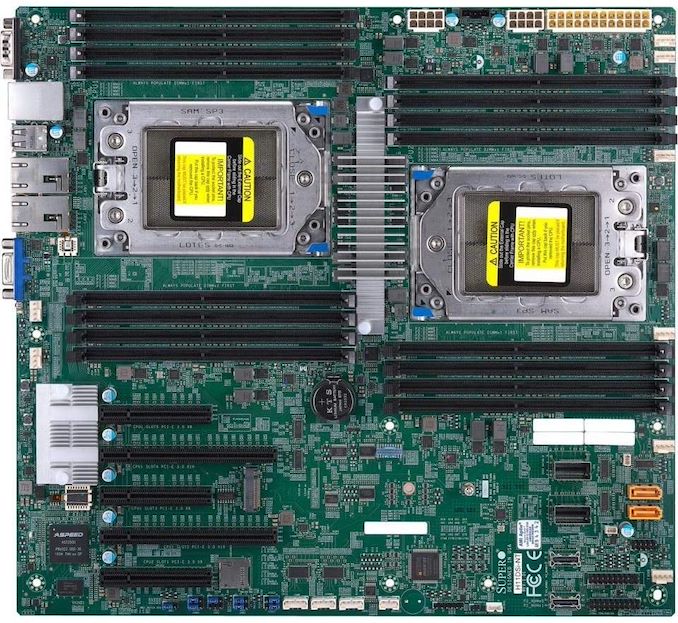

The H11DSi is part E-ATX and part SSI-CEB, so suitable cases should support both in order to provide the required mounting holes. With its dual socket orientation, the board is a lot longer than what most regular PC users are used to: physically it is one square foot. The board as shown supports all 8 memory channels per socket in a 1 DIMM per channel configuration, with up to DDR4-3200 for the Revision 2 models. We successfully placed 2 TB of LRDIMMs (16 × 128 GB) in the system without issues.
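As a quick sanity check on that capacity figure, the arithmetic can be sketched in a few lines of Python. The variable names are purely illustrative; the numbers are the ones quoted above (two sockets, eight channels per socket, one DIMM per channel, 128 GB modules).

```python
# Memory layout figures as quoted in the text; names are illustrative only.
SOCKETS = 2
CHANNELS_PER_SOCKET = 8
DIMMS_PER_CHANNEL = 1
DIMM_CAPACITY_GB = 128  # capacity of each LRDIMM used in testing

# One DIMM per channel means the slot count equals the total channel count.
dimm_slots = SOCKETS * CHANNELS_PER_SOCKET * DIMMS_PER_CHANNEL
total_gb = dimm_slots * DIMM_CAPACITY_GB
total_tb = total_gb / 1024

print(f"{dimm_slots} DIMMs x {DIMM_CAPACITY_GB} GB = {total_gb} GB ({total_tb:g} TB)")
```

With one DIMM per channel, reaching 2 TB requires every slot on the board to be filled with a 128 GB module.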

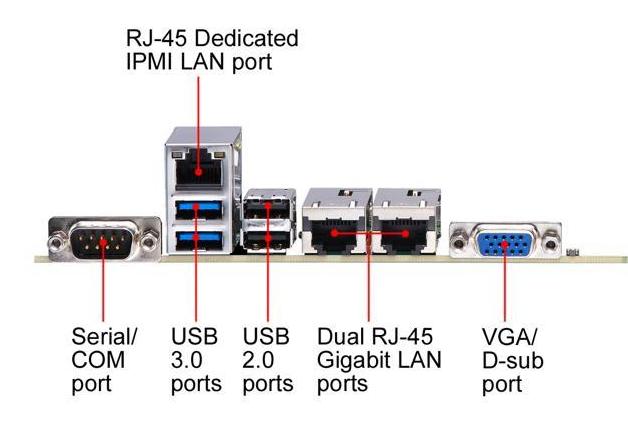

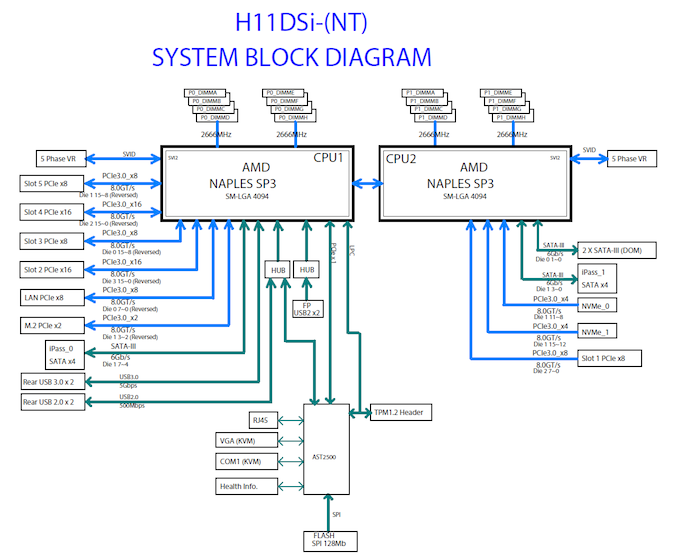

As with almost all server motherboards, there is a baseboard management controller in play here: the ASPEED AST2500, which has become a standard for IPMI in recent years. This allows for a log in to a Supermicro interface over the dedicated Ethernet connection, as well as a 2D video output. We’ll cover the interface on the next page.

Ethernet connectivity depends on the variant of the H11DSi you look for: the base model has two gigabit ports powered through an Intel i350-AM21 controller, while the H11DSi-NT has two 10GBase-T ports from the Intel X550-AT2 on board. Because this controller has a higher TDP than the gigabit controller, there is an additional heatsink next to the PCIe slots.

The board has a total of 10 SATA ports: two SATA-DOM ports, and four SATA ports from each CPU through two Mini-SAS connectors. It’s worth noting that the two groups of four ports come from different CPUs, such that any software RAID spanning both groups is going to have a performance penalty. In a similar vein, the PCIe slots also come from different CPUs: the top slot is a PCIe 3.0 x8 from CPU 2, whereas the other slots (PCIe 3.0 x16/x8/x16/x8) all come from CPU 1. This means that CPU 2 doesn’t actually use many of the PCIe lanes that the processor has.
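To make the imbalance concrete, here is a minimal sketch that tallies the slot lanes and SATA ports per CPU from the layout described above. The slot labels are hypothetical placeholders rather than Supermicro’s own names, and the tally covers only the expansion slots, not lanes consumed by the M.2 slot or onboard controllers.

```python
from collections import defaultdict

# (label, source CPU, lane width) for each PCIe 3.0 slot, top to bottom.
# Labels are hypothetical; widths and CPU assignments come from the text.
pcie_slots = [
    ("slot 1 (top)", "CPU2", 8),
    ("slot 2", "CPU1", 16),
    ("slot 3", "CPU1", 8),
    ("slot 4", "CPU1", 16),
    ("slot 5", "CPU1", 8),
]

# Sum the slot lanes attached to each CPU.
lanes_by_cpu = defaultdict(int)
for _label, cpu, lanes in pcie_slots:
    lanes_by_cpu[cpu] += lanes

# SATA ports by connector group. The text does not say which CPU drives
# the SATA-DOM ports, so they are kept as their own group here.
sata_ports = {"SATA-DOM": 2, "Mini-SAS (CPU1)": 4, "Mini-SAS (CPU2)": 4}
total_sata = sum(sata_ports.values())

for cpu in sorted(lanes_by_cpu):
    print(f"{cpu}: {lanes_by_cpu[cpu]} slot lanes")
print(f"SATA ports in total: {total_sata}")
```

By this count CPU 1 feeds 48 slot lanes against 8 for CPU 2, which is the asymmetry the paragraph above describes.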

Also on the storage front is an M.2 x2 slot, which supports PCIe and SATA for Naples, but only PCIe for Rome. The power connectors are all in the top right of the motherboard: the 24-pin main motherboard power as well as two 12V 8-pin connectors, one for each CPU. Each socket is backed by a 5-phase server-grade VRM, and the motherboard has eight 4-pin fan headers for lots of cooling. The VRM is unified under a central heatsink, designed to take advantage of cross-board airflow, which will be a critical part of any system built with this board.

We tested the motherboard with both EPYC 7642 (Rome, 48 core) processors and the latest EPYC 7F52 (Rome, 16 core high frequency) processors without issue.

36 Comments

bryanlarsen - Wednesday, May 13, 2020

> the second CPU is underutilized.

This is common in server boards. It means that if you don't populate the second CPU, most of your peripherals and slots are still usable.

The_Assimilator - Wednesday, May 13, 2020

It's almost like technology doesn't exist for the board to detect when a second CPU is present, and if so, switch some of the PCIe slots to use the lanes from that CPU instead. Since Supermicro apparently doesn't have access to this holy grail, they could have opted for a less advanced piece of manual technology known as "jumpers" and/or "DIP switches". This incredible lack of basic functionality on SM's part, coupled with the lack of PCIe 4, makes this board DOA. Yeah, it's the only option if you want dual-socket EPYC, but it's not a good option by any stretch.

jeremyshaw - Wednesday, May 13, 2020

For Epyc, the only gain of dual socket is more CPU threads/cores. If you wanted 128 PCIe 4.0 lanes, single socket Epyc can already deliver that.

Samus - Thursday, May 14, 2020

The complexity of using jumpers to reallocate entire PCIe lanes would be insane. You'd probably need a bridge chip to negotiate the transition, which would remove the need for jumpers anyway since it could be digitally enabled. But this would add latency - even if it wasn't in use since all lanes would need to be routed through it. Gone are the days of busmastering as everything is so complex now through serialization.

bryanlarsen - Friday, May 15, 2020

Jumpers and DIP switches turn into giant antennas at the 1GHz signalling rate of PCIe3.

kingpotnoodle - Monday, May 18, 2020

Have you got an example of a motherboard that implements your idea with PCIe? I've never seen it and as bryanlarsen said this type of layout where everything essential is connected to the 1st CPU is very standard in server and workstation boards. It allows the board to boot with just one CPU, adding the second CPU enables additional PCIe sockets usually.

mariush - Wednesday, May 27, 2020

At the very least they could have placed a bunch of M.2 connectors on the motherboard, even double stacked... or make a custom (dense) connector that would allow you to connect a pci-e x16 riser cable to a 4 x m.2 card.

johnwick - Monday, June 8, 2020

you have to share with us lots of informative point here. I am totally agree with you what you said. I hope people will read this article. http://www.bestvpshostings.com/

Pyxar - Wednesday, December 23, 2020

This would not be the first time i've seen that. I remember playing with the first gen opterons, the nightmares of the pro-sumer motherboard design shortcomings were numerous.

Sivar - Wednesday, May 13, 2020

This is a great article from Ian as always. Quick correction though, second paragraph: "are fairly numerate". Not really what numerate means.