Nixeus VUE 30: 30" 2560x1600 IPS Monitor Review

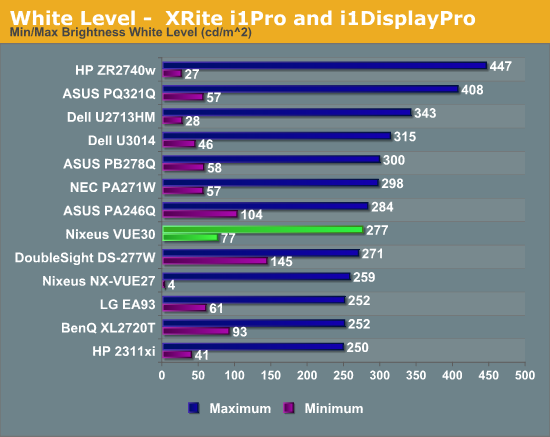

by Chris Heinonen on August 20, 2013 6:00 AM EST

It seems that the larger the panel on a display I review, the brighter the display can get. I always expect the opposite, since lighting more screen area should take more power, but so far that has not been the case. The VUE 30 is plenty bright, though not as blindingly bright as many other large displays. With brightness at maximum I measure 277 cd/m2 on a pure white screen. Moving brightness to minimum drops this to 77 cd/m2, which is below the 80 cd/m2 I like the minimum to reach. This range should be plenty for most users.

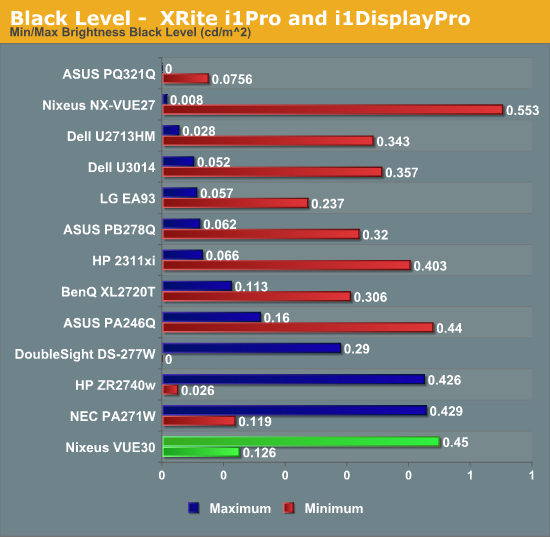

With a black screen, we see a black level of 0.126 cd/m2 with the backlight at minimum. With the backlight at maximum this rises to 0.45 cd/m2. This is very much in line with other computer monitors. I won’t fault Nixeus for this, but I’m always surprised that PC monitors get away with black levels that would be unacceptable on TVs. Modern plasma displays can produce black levels of 0.006 cd/m2 under the same test conditions, and modern LCDs can reach 0.05 cd/m2. I understand why plasma isn’t used for PC displays, but I’d like to see all vendors work on their black levels going forward. Basically, this panel seems similar to the 30" IPS displays we tested over five years ago; it's just half the price now.

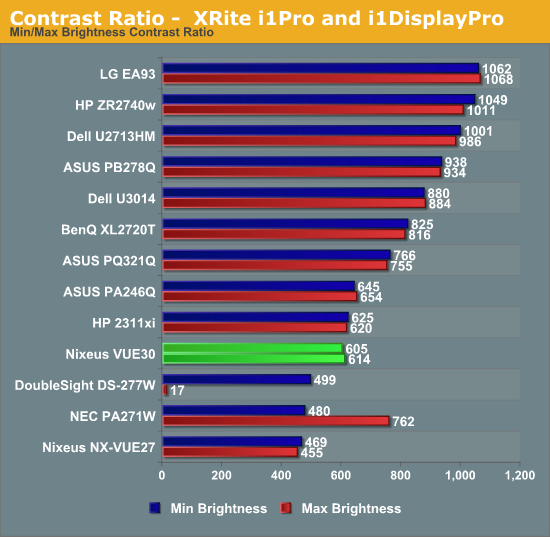

These numbers give a contrast ratio of just 610:1 on average. That falls well behind the Dell U3014 and ASUS PQ321Q, the most recently reviewed 30”+ displays I have data for. Both cost a lot more, but a contrast ratio close to 600:1 is still a disappointment to me.
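The contrast figure is simply white luminance divided by black luminance at the same backlight setting. A minimal sketch using the two measured pairs gives about 616:1 and 611:1, averaging roughly 613:1, close to the quoted 610:1. The review doesn't say exactly which readings went into its average, so the two-point average here is an assumption. The last value is a hypothetical, not a measurement: the same white level over the 0.05 cd/m2 black mentioned for modern LCDs.

```python
# Static contrast ratio = white luminance / black luminance, both measured
# at the same backlight setting. Luminance values are in cd/m2.

def contrast_ratio(white: float, black: float) -> float:
    """Return the contrast ratio N, meaning N:1."""
    return white / black


# (white, black) pairs taken from the measurements above.
measurements = {
    "backlight max": (277.0, 0.45),
    "backlight min": (77.0, 0.126),
}

ratios = {name: contrast_ratio(w, b) for name, (w, b) in measurements.items()}
# Assumption: "average" is the mean of these two endpoints only.
average = sum(ratios.values()) / len(ratios)

# Hypothetical comparison: the same 277 cd/m2 white over a 0.05 cd/m2 black.
hypothetical = contrast_ratio(277.0, 0.05)

if __name__ == "__main__":
    for name, r in ratios.items():
        print(f"{name}: {r:.0f}:1")      # 616:1 and 611:1
    print(f"average: {average:.0f}:1")   # 613:1
    print(f"with a 0.05 cd/m2 black: {hypothetical:.0f}:1")  # 5540:1
```

Because the black level rises roughly in step with the white level, the ratio barely moves across the backlight range; only a lower black floor would change it substantially.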

With those basic measures out of the way, it was time to see how accurate the VUE 30 is.

95 Comments


DanNeely - Wednesday, August 21, 2013

Read before you comment. This was answered above; there's no off-the-shelf hardware to do so at 2560.

Sivar - Tuesday, August 20, 2013

"Viewable Size 20"". Typo, please fix.

abhaxus - Tuesday, August 20, 2013

It's time to end this farce and stop posting input lag numbers that are not at native resolution. I've bought two monitors in the last 8 months (a 23" eIPS Asus and a 27" Qnix QX2710 from Korea) and got NO help from these AnandTech reviews, due to the ridiculous notion that input lag at 1080p is somehow comparable to what it would be with no scaling. Either don't put the number up there, or do the tests at native res.

JarredWalton - Tuesday, August 20, 2013

Unless the scaler deactivates entirely and thus doesn't contribute to lag, running at native resolution won't be any less laggy. For most displays, the presence of a scaler is an all-or-nothing thing. The old Dell 3008WFP had much worse lag than the 3007WFP because it had multiple inputs and a scaler. Unless something has changed, I wouldn't expect native resolution to be less laggy.

As I noted above, however, the problem is testing input lag at native resolution. We used to compare against a CRT, which limited us to CRT resolutions. Now Chris uses the Leo Bodnar lag tester, which supports a maximum resolution of 1080p. Until someone makes one capable of testing native 4K and WQXGA, Chris has no way to test input lag at native resolution on these displays.

cheinonen - Tuesday, August 20, 2013

Adding to what Jarred said, on displays that offer both a 1:1 mode and a scaling stretch mode, I typically see only 1-2 ms of delay difference between them.

Most monitors use cheap, fast scalers that don't add much lag. Features like color management, which you'll see in more displays now, add far more lag because that work is more intensive.

Believe me, if someone makes a lag tester that goes beyond 1080p I'm buying it. Otherwise, buying a scope for a single measurement is just cost-prohibitive.

HisDivineOrder - Tuesday, August 20, 2013

Seems inevitable that 2560x1600 will remain mostly niche, with 2560x1440 becoming the go-to resolution in the post-4K world we'll soon be living in. Monitor makers will sell these 1440p displays hand over fist once people become convinced they want a high-resolution display, find the price tag on 4K out of this world, and come back down to Earth still wanting more than 720p/768p/1080p.

I doubt they'll make 1600p the go-to resolution, so they'll split the difference and go with 1440p to maximize profits (the exact reason they went to 1080p instead of 1200p).
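The 16:10 versus 16:9 trade-off is easy to quantify. This sketch only counts pixels and reduces aspect ratios; it does not model panel cost or the pricing argument above. It shows that 1440p has exactly 10% fewer pixels than 1600p at the same width, the same gap as between 1080p and 1200p. "4K" is taken to mean 3840x2160 here, which is an assumption.

```python
from math import gcd

# Resolutions mentioned in the discussion (width, height).
# Assumption: "4K" means the 3840x2160 UHD format.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1200p": (1920, 1200),
    "1440p": (2560, 1440),
    "1600p": (2560, 1600),
    "4K UHD": (3840, 2160),
}


def aspect(w: int, h: int) -> tuple[int, int]:
    """Reduce width:height to lowest terms; 8:5 is the same shape as 16:10."""
    g = gcd(w, h)
    return w // g, h // g


def pixels(w: int, h: int) -> int:
    """Total pixel count for a resolution."""
    return w * h


if __name__ == "__main__":
    base = pixels(*RESOLUTIONS["1600p"])
    for name, (w, h) in RESOLUTIONS.items():
        aw, ah = aspect(w, h)
        share = pixels(w, h) / base
        print(f"{name:>6}: {pixels(w, h):>9,} px  {aw}:{ah}  {share:.2f}x of 1600p")
```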

Sabresiberian - Tuesday, August 20, 2013

Frankly, I think about sRGB the same way I think about TN and 16:9 - they are low-quality standards that I would like to see fade away from mainstream monitors. While I agree that any monitor aimed at the mainstream should be sRGB capable, I can't help but think it is time for the standard to be raised. It is possible to give us full AdobeRGB without breaking the bank, as is proven here.

This isn't an LCD-only thing, of course; sRGB pervades the industry all along the software and hardware path. But not many people are demanding higher-quality color reproduction, so when is it going to change, if ever?

Well, I'll say it - sRGB is a low-quality standard, and it is time we moved on.

JarredWalton - Tuesday, August 20, 2013

You're right, but of course 99% of laptops can't even do sRGB, let alone AdobeRGB or NTSC, and laptops are now outselling desktops. I've been using a high-gamut HP LP3065 for years, though, and while I notice the oversaturation at times, many imaging programs (Photoshop, most browsers now, and MS Photo Viewer) appear to recognize AdobeRGB properly.

SeannyB - Tuesday, August 20, 2013

I hope some day we'll simply have color management on all OSes (namely Windows and Android), not just OS X. I'm living with a calibrated and profiled extended-gamut 1600p monitor in Windows 7, and it's tough. Windows 7 doesn't assume sRGB for its shell or for other apps and remap them accordingly. Only certain software, like Adobe's and, with some effort, a few others (IrfanView, Firefox, Media Player Classic Home Cinema), is "color aware". Google Chrome remaps correctly when viewing JPEGs with colorspace tags, but everything else in that browser is oversaturated. (It doesn't assume sRGB for untagged images and web colors.)

I think a future of ubiquitous color management will have to happen in a world of ubiquitous OLED displays. That's a future that always seems just over the horizon.
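The oversaturation described above comes from sending sRGB values straight to a wide-gamut panel. A color-aware app instead decodes the sRGB value to linear light, converts it through CIE XYZ, and re-encodes it for the panel's gamut. The sketch below does this for Adobe RGB (1998) using the commonly published D65 matrices, and it assumes the monitor behaves like an ideal Adobe RGB display; real color management uses the display's measured ICC profile instead. Pure sRGB red comes out at roughly 219/255 on the red channel rather than 255, which is why unmanaged content looks oversaturated.

```python
# Sketch: convert an sRGB color to Adobe RGB (1998) encoding, as a
# color-aware app would before sending it to a wide-gamut panel.
# Assumption: the panel is an ideal Adobe RGB display (no ICC profile).

# Linear sRGB (D65) -> CIE XYZ, commonly published matrix.
SRGB_TO_XYZ = [
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
]

# CIE XYZ -> linear Adobe RGB (1998), same D65 white point.
XYZ_TO_ADOBE = [
    [2.0413690, -0.5649464, -0.3446944],
    [-0.9692660, 1.8760108, 0.0415560],
    [0.0134474, -0.1183897, 1.0154096],
]

ADOBE_GAMMA = 563 / 256  # about 2.2, the Adobe RGB (1998) transfer exponent


def srgb_decode(v: float) -> float:
    """sRGB-encoded value in [0, 1] -> linear light (piecewise sRGB curve)."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4


def adobe_encode(v: float) -> float:
    """Linear light -> Adobe RGB encoded value in [0, 1]."""
    v = min(max(v, 0.0), 1.0)  # clamp out-of-range values before the power curve
    return v ** (1 / ADOBE_GAMMA)


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]


def srgb_to_adobe(rgb):
    """sRGB-encoded (r, g, b) in [0, 1] -> Adobe RGB-encoded [r, g, b]."""
    linear = [srgb_decode(c) for c in rgb]
    xyz = mat_vec(SRGB_TO_XYZ, linear)
    adobe_linear = mat_vec(XYZ_TO_ADOBE, xyz)
    return [adobe_encode(c) for c in adobe_linear]


if __name__ == "__main__":
    for name, rgb in [("white", (1.0, 1.0, 1.0)), ("red", (1.0, 0.0, 0.0))]:
        out = srgb_to_adobe(rgb)
        print(name, [round(c * 255) for c in out])
```

White maps to white because both spaces share the D65 white point; only saturated colors move.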

ZeDestructor - Tuesday, August 20, 2013

There are preferences in FF that set the default colourspace to sRGB (I've used them on and off, depending on my mood), so only correctly tagged pictures are rendered with the wide gamut.

For the Windows shell, it doesn't matter. As for programs, Windows' integrated picture viewer is colour aware, as I was surprised to discover. It's all there where it should be. You don't really care what your UI elements look like, but pictures and video you do care about.