Netgear Nighthawk X8 R8500 AC5300 Router Brings Link Aggregation Mainstream

by Ganesh T S on December 31, 2015 8:00 AM EST

Posted in: Networking, NetGear, Broadcom, 802.11ac, router

Link Aggregation in Action

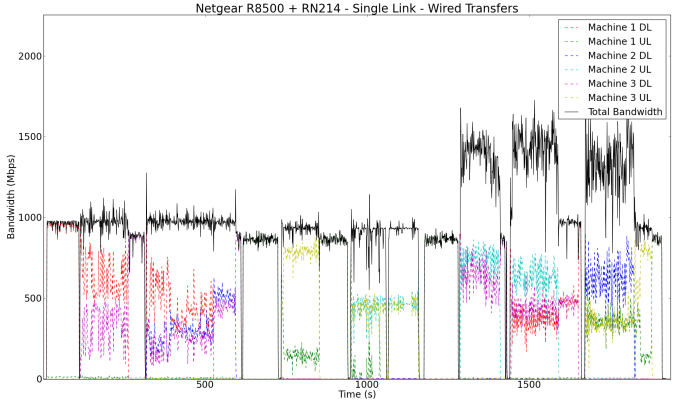

To get an idea of how much link aggregation really helps, we first set up the NAS with just a single active network link. The first set of tests downloads the Blu-ray folder from the NAS to the PC connected to port 3, followed by simultaneous downloads of two different copies of the content from the NAS to the PCs connected to ports 3 and 4. The same concept is extended to three simultaneous downloads via ports 3, 4 and 5. A similar set of tests is run to evaluate the uplink part (i.e., data moves from the PCs to the NAS). The final set of tests involves simultaneous upload and download activities from the different PCs in the setup.

The upload and download speeds of the wired NICs on the PCs were monitored and graphed. This gives an idea of the maximum possible throughput from the NAS's viewpoint and also enables us to check if link aggregation works as intended.

The above graph shows that the download and upload links are limited to under 1 Gbps (taking into account the transfer inefficiencies introduced by various small files in the folder). However, the full duplex 1 Gbps nature of the NAS network link enables greater than 1 Gbps throughput when handling simultaneous uploads and downloads.

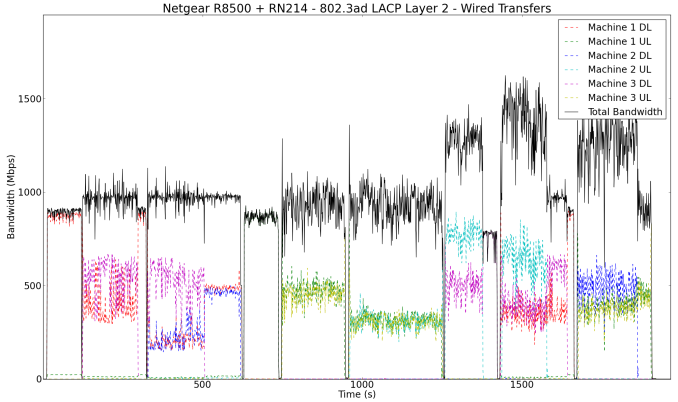

In our second wired experiment, we teamed the ports on the NAS with the default options (other than explicitly changing the teaming type to 802.3ad LACP). This left the hash type at Layer 2. Running our transfer experiments showed that there was no improvement over the single link results from the previous test.

Layer 2 as the transmit hash policy turned out to be ineffective in our test setup. Readers interested in the transmit hash policies, which determine how traffic is distributed across the physical ports in a team, should consult the Linux kernel bonding documentation (search for the description of the parameter 'xmit_hash_policy').

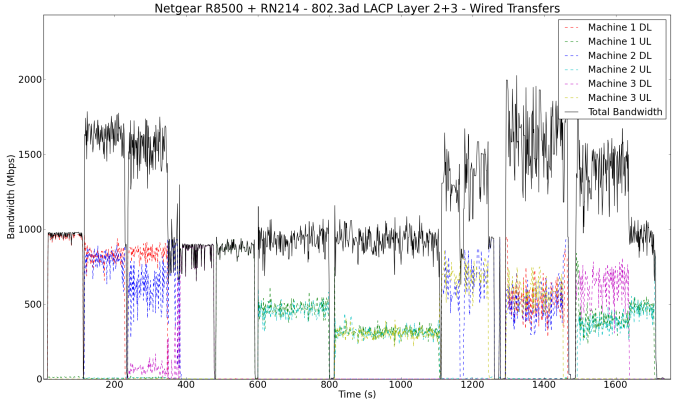

After altering the hash policy to Layer 2 + 3 in the ReadyNAS UI, the effectiveness of link aggregation became evident.
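To illustrate why the choice of transmit hash policy can matter, the following is a minimal sketch and not the kernel's actual code. It assumes simplified versions of the Layer 2 and Layer 2 + 3 formulas along the lines of what the Linux bonding documentation describes (the packet type ID term is omitted, since it is the same for every IPv4 flow here). All MAC and IP addresses are hypothetical and were picked only to show a case where the two policies disagree; they are not the addresses used in our testbed.

```python
import ipaddress


def mac_to_int(mac: str) -> int:
    """Convert a colon-separated MAC address to an integer."""
    return int(mac.replace(":", ""), 16)


def ip_to_int(ip: str) -> int:
    """Convert a dotted-quad IPv4 address to an integer."""
    return int(ipaddress.IPv4Address(ip))


def layer2_link(src_mac: str, dst_mac: str, n_links: int) -> int:
    """Simplified Layer 2 policy: only the MAC addresses feed the hash."""
    return (mac_to_int(src_mac) ^ mac_to_int(dst_mac)) % n_links


def layer23_link(src_mac: str, dst_mac: str,
                 src_ip: str, dst_ip: str, n_links: int) -> int:
    """Simplified Layer 2 + 3 policy: MAC and IP addresses feed the hash."""
    h = mac_to_int(src_mac) ^ mac_to_int(dst_mac)
    h ^= ip_to_int(src_ip) ^ ip_to_int(dst_ip)
    h ^= h >> 16  # fold the upper bits down so they influence the result
    h ^= h >> 8
    return h % n_links


# Hypothetical addresses (assumptions for illustration only).
NAS = ("02:00:00:00:00:10", "192.168.1.10")
PC1 = ("02:00:00:00:00:21", "192.168.1.21")
PC2 = ("02:00:00:00:00:23", "192.168.1.22")
LINKS = 2  # two physical ports in the team

l2 = [layer2_link(NAS[0], pc[0], LINKS) for pc in (PC1, PC2)]
l23 = [layer23_link(NAS[0], pc[0], NAS[1], pc[1], LINKS) for pc in (PC1, PC2)]

print("Layer 2 link per client:    ", l2)
print("Layer 2 + 3 link per client:", l23)
```

With these made-up addresses, the Layer 2 sketch sends both clients' traffic out of the same physical link, while the Layer 2 + 3 sketch spreads them across both. Whether a given pair of real clients collides under either policy depends entirely on their actual addresses.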

In the pure download case with two PCs, we can see each of the PCs getting around 800 Mbps (implying that the NAS was sending out data on both the physical NICs in the team). An interesting aspect to note in the pure download case with three PCs is that Machine 1 (connected to port 3) manages the same 800 Mbps as in the previous case, but the download rates on Machines 2 and 3 (connected to ports 4 and 5) add up to a similar amount. This shows that network ports 4 and 5 are bottlenecked by a 1 Gbps connection to the switch chip handling the link aggregation ports. This is also the reason why Netgear suggests using port 3 as one of the ports for handling the data transfer to/from the link aggregated ports. Simultaneous uploads and downloads also show some improvement, but the pure upload case is not any different from the single link case. This could be attributed to the limitations of the NAS itself. Note that we are using real-world data streams transferred using the OS file copy tools (and not artificial benchmarking programs) in these experiments.
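The bottleneck reasoning above can be put into a back-of-the-envelope model. This is only a sketch under stated assumptions: each path between the aggregated NAS ports and the client ports is taken to deliver roughly the 800 Mbps observed in our transfers, port 3 is assumed to have its own path, ports 4 and 5 are assumed to share one, and clients on a shared path are assumed to split it equally (the measurements only show that their rates add up to a similar total). The function and path names are hypothetical.

```python
def per_client_rates(paths: dict[str, list[str]],
                     path_capacity_mbps: float) -> dict[str, float]:
    """Split each path's capacity equally among the clients that share it."""
    rates = {}
    for clients in paths.values():
        share = path_capacity_mbps / len(clients)
        for client in clients:
            rates[client] = share
    return rates


EFFECTIVE_MBPS = 800.0  # assumption: approximate per-path rate seen in the tests

# Two simultaneous downloads: ports 3 and 4, each on a different path.
two_pcs = per_client_rates(
    {"port 3 path": ["Machine 1"], "ports 4/5 path": ["Machine 2"]},
    EFFECTIVE_MBPS,
)

# Three simultaneous downloads: ports 4 and 5 share a single path.
three_pcs = per_client_rates(
    {"port 3 path": ["Machine 1"], "ports 4/5 path": ["Machine 2", "Machine 3"]},
    EFFECTIVE_MBPS,
)

print(two_pcs)
print(three_pcs)
```

Under these assumptions, adding the third PC leaves Machine 1 unaffected while Machines 2 and 3 divide what Machine 2 previously had to itself, which matches the shape of the measured results.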

66 Comments


blaktron - Thursday, December 31, 2015 - link

Using LACP direct from router to NAS is such a damn waste. If you put an enterprise switch in the middle of that you would get more than a 2x latency improvement to both your internet and files when using multiple clients on the network. Right now I have a 3 channel LACP to my vHost and a 2 channel to my router with a Procurve in the middle and it's incredible how low latency you get on requests compared to single channel setups.

cdillon - Thursday, December 31, 2015 - link

LACP is going to do absolutely nothing for you in regards to a noticeable latency decrease. The link bit-time is still exactly the same as without LACP, you only gain parallelism. Even if it did decrease your already sub-millisecond LAN latency, that would amount to squat when your internet connection already has at least a few milliseconds of latency. You might go from 15.1 ms to 15.05 ms... again, that's only if it did anything for you at all.

sor - Thursday, December 31, 2015 - link

I think what he means is that you'd want wired clients for the NAS, instead of forcing all access through the wireless router. At least, that's the only way I can think of to make his comment remotely sensible.

cdillon - Thursday, December 31, 2015 - link

He mentions having LACP to the *router*.

sor - Friday, January 1, 2016 - link

Right, and he says to put a switch between them to serve other clients. This device is a router and wireless hub.

blaktron - Friday, January 1, 2016 - link

Yeah, so you get a latency decrease by serving two packets at a time. You get the biggest benefit from using srv-io and multiple vm servers off a lacp array. The setup described above still has a single channel bottleneck unless there's an internal switch attaching the wireless module by multiple channels, which there is likely not.

joenathan - Saturday, January 2, 2016 - link

That isn't how latency works. Two packets at once would be throughput.

lowtolerance - Sunday, January 3, 2016 - link

Latency has nothing to do with how many packets you send at once. It doesn't matter if it's sending one packet, two packets or fifty at a time. That's not to say the net result isn't a decrease in transfer time, but the RTT is going to be the same.

FaaR - Saturday, January 2, 2016 - link

As gigabit ethernet is already full duplex, I fail to see how adding additional ethernet cables to a setup would reduce latency to any significant degree, unless your environment was previously suffering from a performance issue of some kind... :) *shrug* Maybe I am missing something here?

TexelTech - Saturday, January 2, 2016 - link

I don't believe it has anything to do with latency, more like throughput.