AMD Ryzen 5 3600 Review: Why Is This Amazon's Best Selling CPU?

by Dr. Ian Cutress on May 18, 2020 9:00 AM EST

Turbo, Power, and Latency

Turbo

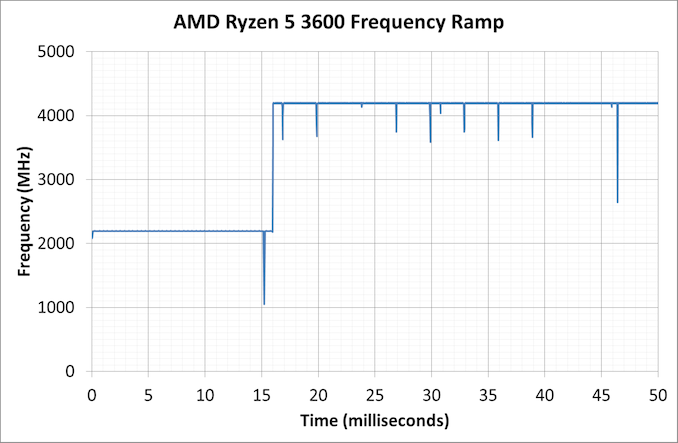

As part of our usual test suite, we run a set of code designed to measure the time taken for the processor to ramp up in frequency. Both AMD and Intel have recently been promoting how quickly their newest processors can go from an active idle state into a turbo state: where previously we were talking about significant fractions of a second, we are now down to milliseconds, or individual frames. How quickly the processor fires up to a turbo frequency is partly down to the silicon design, with enough frequency domains needing to be brought up without causing any localised voltage or power issues. Part of it is also down to the OEM implementation of how the system responds to requests for high performance.

Our Ryzen 5 3600 jumped up from a 2.2 GHz high-performance idle all the way to 4.2 GHz in 16 milliseconds, which is just under a single frame (16.7 ms) on a 60 Hz display. This is right about where machines need to be in order to deliver a good user experience, assuming the rest of the system is up to scratch.
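As a quick check on the frame comparison, the arithmetic can be sketched as below. The function names are illustrative only; the 16 ms ramp time and 60 Hz refresh rate are the figures quoted above.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Duration of one display frame in milliseconds."""
    return 1000.0 / refresh_hz


def frames_elapsed(ramp_ms: float, refresh_hz: float) -> float:
    """How many display frames pass while the CPU ramps to turbo."""
    return ramp_ms / frame_time_ms(refresh_hz)


RAMP_MS = 16.0  # idle-to-turbo time measured on the Ryzen 5 3600

print(f"One frame at 60 Hz: {frame_time_ms(60):.2f} ms")
print(f"Frames elapsed during ramp: {frames_elapsed(RAMP_MS, 60):.2f}")
```

On a higher refresh rate display the same 16 ms ramp would span more than one frame, for example around 2.3 frames at 144 Hz.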

Power

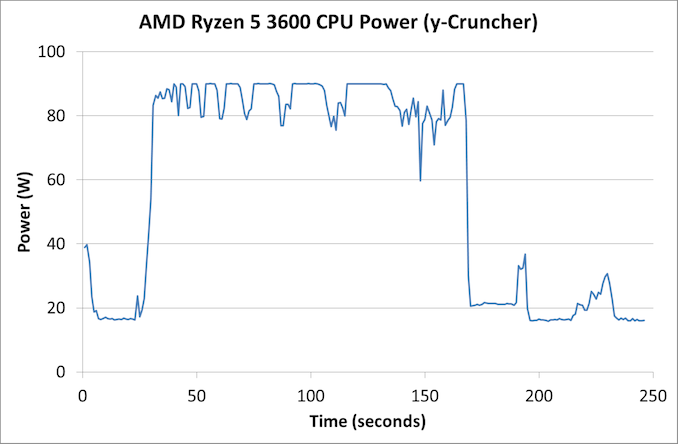

With the Ryzen 5 3600, AMD lists the official TDP of the processor as 65 W. AMD also runs a feature called Package Power Tracking, or PPT, which allows the processor to turbo where possible to a new power value – for 65 W processors that new value is 88 W. This takes into account the power delivery capabilities of the motherboard, as well as the thermal environment. The processor can then manage exactly what frequency to give to the system in 25 MHz increments.
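The relationship between the 65 W TDP and the 88 W PPT value, and the 25 MHz frequency granularity, can be illustrated with a short sketch. The 1.35x ratio is an assumption based on the commonly cited AM4 relationship between TDP and PPT and is not stated in this review; rounding a target frequency down to the nearest step is likewise only an illustrative choice, not a description of AMD's firmware.

```python
PPT_RATIO = 1.35  # assumption: commonly cited AM4 PPT-to-TDP ratio
STEP_MHZ = 25     # frequency granularity quoted in the text


def ppt_watts(tdp_watts: float) -> float:
    """Estimate the package power limit from the rated TDP."""
    return tdp_watts * PPT_RATIO


def snap_to_step(freq_mhz: float, step_mhz: int = STEP_MHZ) -> int:
    """Round a target frequency down to the nearest step (illustrative)."""
    return int(freq_mhz // step_mhz) * step_mhz


print(f"PPT for a 65 W part: {round(ppt_watts(65))} W")
print(f"3910 MHz target snaps to {snap_to_step(3910)} MHz")
```

Under these assumptions a 65 W part lands on the 88 W figure quoted above, and any frequency the processor selects is a multiple of 25 MHz.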

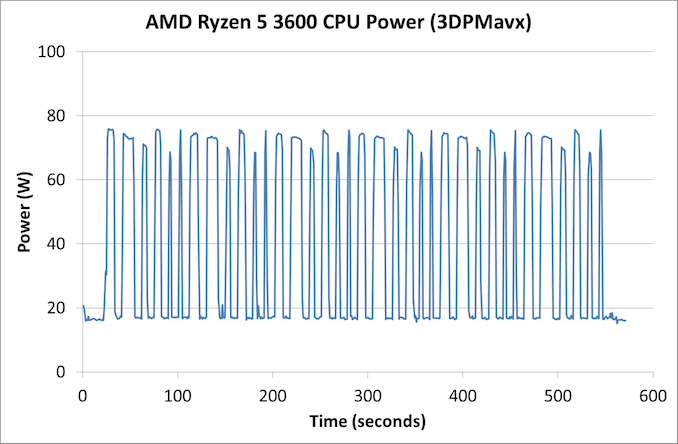

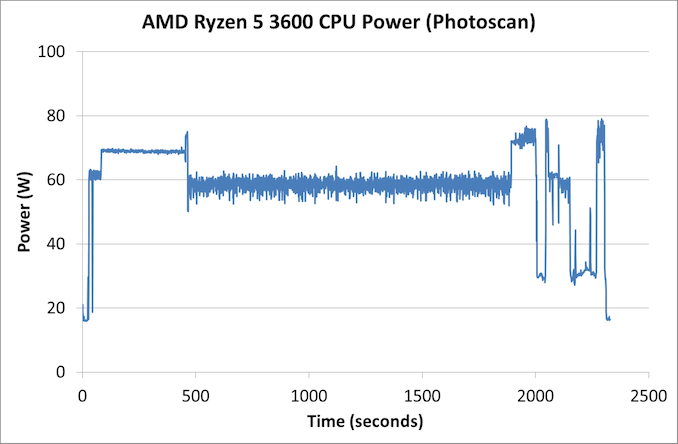

As part of our new test suite, we have a CPU power wrapper that runs across several benchmarks to record the power response for a variety of different workloads.

For an AVX workload, y-Cruncher is somewhat periodic in its power use due to the way the calculation runs, but we see an almost constant 90 W peak power consumption through the whole test. The all-core turbo frequency here was in the 3875-3925 MHz range.

Our 3DPMavx test implements the highest version of AVX it can, for a series of six 10 second on, 10 second off tests, which then repeats. In this case we don’t see the processor going above 75 W in the whole process.

Photoscan is our more ‘regular’ test here, comprising four stages that each change between single thread, multithread, and variable thread. We see peaks here up to 80 W, but the big variable threaded scenario bounces around the 60 W mark for over 1000 seconds.

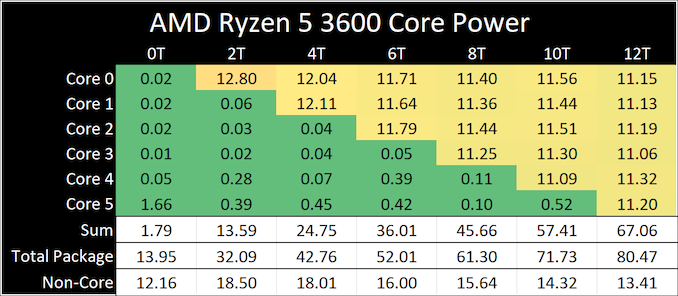

On the per-core power side, using our ray tracing power load, we see a small range of peak power values.

When one thread is active, that core sits at 12.8 W, but as we load more cores, this drops to 11.2 W per core. The non-core part of the processor, such as the IO chip, the DRAM channels and the PCIe lanes, still consumes around 12-18 W even at idle.
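As a rough sanity check, the per-core and non-core figures can be added up and compared against the package-level numbers. The sketch below assumes all six cores are loaded at 11.2 W each and that the non-core part stays within its 12-18 W idle range under load; the latter is an assumption rather than a measured value.

```python
CORES = 6
PER_CORE_LOADED_W = 11.2        # per-core power with all cores active
UNCORE_W_RANGE = (12.0, 18.0)   # non-core power; idle range assumed to hold under load
PPT_W = 88.0                    # package power limit for 65 W parts


def package_estimate(cores: int, per_core_w: float, uncore_w: float) -> float:
    """Sum core and non-core power into a package-level estimate."""
    return cores * per_core_w + uncore_w


low = package_estimate(CORES, PER_CORE_LOADED_W, UNCORE_W_RANGE[0])
high = package_estimate(CORES, PER_CORE_LOADED_W, UNCORE_W_RANGE[1])

print(f"Estimated package power: {low:.1f} W to {high:.1f} W")  # 79.2 W to 85.2 W
print(f"Within the {PPT_W:.0f} W limit: {high <= PPT_W}")       # True
```

Under these assumptions the estimate falls just below the 88 W limit, in the same region as the peaks seen in the workload traces.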

Latency

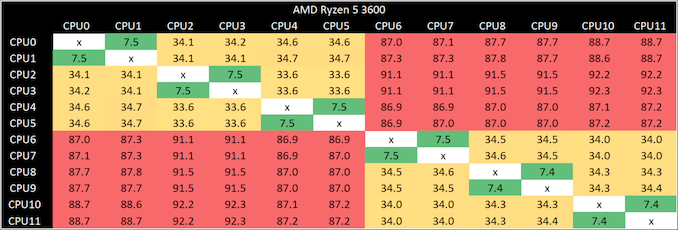

Our latency test is a simple core-to-core ping test, to detect any irregularities in the core design.

The results here are as expected.

- 7.5 nanoseconds for threads within a core

- 34 nanoseconds for cores within a CCX

- 87-91 nanoseconds between cores in different CCXes
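The three tiers above follow from where two threads sit relative to each other. A minimal sketch of that mapping is below, assuming the Ryzen 5 3600 layout of two CCXes with three cores each and two threads per core, and assuming logical threads are numbered so that adjacent pairs share a core. That numbering is hypothetical and depends on the operating system; this is a lookup of the reported figures, not the ping test itself.

```python
THREADS_PER_CORE = 2  # SMT
CORES_PER_CCX = 3     # Ryzen 5 3600: two CCXes, three active cores each

# Latencies in nanoseconds as reported in the list above (min, max).
LATENCY_NS = {
    "same core": (7.5, 7.5),
    "same CCX": (34.0, 34.0),
    "different CCX": (87.0, 91.0),
}


def tier(thread_a: int, thread_b: int) -> str:
    """Classify a pair of logical threads by their shared topology level."""
    if thread_a == thread_b:
        raise ValueError("a ping needs two distinct threads")
    core_a = thread_a // THREADS_PER_CORE
    core_b = thread_b // THREADS_PER_CORE
    if core_a == core_b:
        return "same core"
    if core_a // CORES_PER_CCX == core_b // CORES_PER_CCX:
        return "same CCX"
    return "different CCX"


for pair in [(0, 1), (0, 2), (0, 6)]:
    name = tier(*pair)
    print(pair, name, LATENCY_NS[name])
```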

114 Comments


Sonik7x - Monday, May 18, 2020 - link

Would be nice to see 1440p benchmarks across all games, also would be nice to see a comparison against an i7-5930K running which is also a 6c/12T CPU

ET - Monday, May 18, 2020 - link

Would be even nicer to see newer games. Anandtech reviews seem to be stuck in 2018, both for games and for apps, and that makes them a lot less relevant a read than they could be.

Dolda2000 - Monday, May 18, 2020 - link

You exaggerate. The point of a benchmark suite can't really be to contain the specific workload you're going to put on the CPU (since that's extremely unlikely to be the case anyway), but to be representative of typical workloads, and I think the application selection here is quite adequate for that. In comparison, I find it much more important to have more comparison data in the Bench database. There may be a stronger case to be made for games, but I find even that slightly doubtful.

MASSAMKULABOX - Saturday, May 23, 2020 - link

Not only that, but slightly older games are much more stable and have most of the performance ironed out. New games are getting patches and downloads all the time, which often affect performance. I want to see "E" versions, i.e. 35/45W

ThreeDee912 - Monday, May 18, 2020 - link

They already mentioned in the 3300X review they'll be going back and adding in new games like Borderlands 3 and Gears Tactics: https://www.anandtech.com/show/15774/the-amd-ryzen...

flyingpants265 - Monday, May 18, 2020 - link

I haven't used AnandTech benchmarks for years. They don't use enough CPUs/GPUs, they never include enough results from the previous generations, which is the most important thing when considering upgrades and $ value. Also, the "bench" tool does not include enough tests or hardware.

jabber - Tuesday, May 19, 2020 - link

Yeah nothing annoys me more than Tech Site benchmarks that only compare the new stuff to hardware that came out 6 months before it. If I see say a new GPU tested I want to see how it compares to my RX480 (that a lot of us will be looking to upgrade this year) rather than just a 5700XT.

johnthacker - Tuesday, May 19, 2020 - link

Eh, nothing annoys me more than Tech Site benchmarks that only compare the new stuff to other new stuff. If I have an existing GPU or CPU and I'm not sure if it's worth it for me to upgrade or stick with what I've got, I want to see how something new compares to my existing hardware so I can know whether it's worth upgrading or whether I might as well wait.

Pewzor - Monday, May 18, 2020 - link

I mean Gamer's Nexus uses old games as well.

Crazyeyeskillah - Tuesday, May 19, 2020 - link

Just to make this crystal clear, the reason they HAVE to use older games is because all of the PAST data has been run using those games. Most review sites only get sample hardware for a week or less to run the tests then return it in the mail. You literally wouldn't have anything to compare the data to if you only ran tests on the latest and greatest games and benchmarks.

When I see people making this complaint I understand that they are new to computers and just want them to understand that there is a reason why benchmarks are limited. Most hardware review sites don't make any money, or if they do, it's enough to pay one or MAYBE two staff members (poorly.) Ad revenue is garbage due to ad blockers on all your browsers, and legitimate sites that don't spam clickbaity rumors as news are shutting down. Just look what happened to Hardocp.com, one of the last true honest review sites.

The idea that hardware sites all have stockpiles of every system imaginable and the thousands of hours it would take to constantly set up and run all the new games and benchmarks is pretty comical.