The Intel Xeon W Review: W-2195, W-2155, W-2123, W-2104 and W-2102 Tested

by Ian Cutress & Joe Shields on July 30, 2018 1:00 PM EST - Posted in CPUs, Intel, Xeon, Workstation, ECC, Skylake-SP, Skylake-X, Xeon-W, Xeon Scalable

Benchmarking Performance: CPU Office Tests

The office programs we use for benchmarking aren't specific programs per se, but industry standard tests that hold weight with professionals. The goal of these tests is to use an array of software and techniques that a typical office user might encounter, such as video conferencing, document editing, architectural modelling, and so on.

All of our benchmark results can also be found in our benchmark engine, Bench.

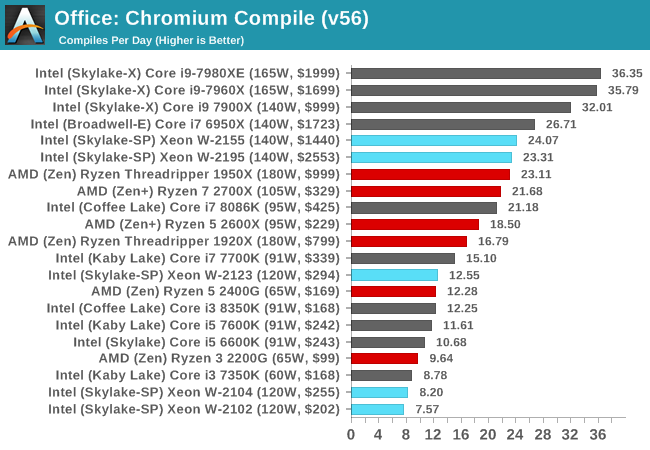

Chromium Compile (v56)

Our new compilation test uses Windows 10 Pro, VS Community 2015.3 with the Win10 SDK to compile a nightly build of Chromium. We've fixed the test for a build in late March 2017, and we run a fresh full compile in our test. Compilation is the typical example given of a variably threaded workload - some of the compile and linking is linear, whereas other parts are multithreaded.

Our popular Chrome Compile test gives a good showing for the Intel CPUs, but the higher-powered Core i9 processors perform a lot better here - by up to 50%, in fact. Part of this is down to memory: the DDR4-2666 C19 memory used here is slower than the DDR4-2666 C16 used in our Core i9 reviews. However, there might also be a case for power draw - the BIOS defaults for the Core i9 processors allow for a lot more power consumption, which the Xeon W processors might not be able to tap into. It is worth noting that the W-2155 wins against the W-2195, showing that in this test frequency matters as much as core count.
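To illustrate how a chip with fewer cores can win a mixed serial/parallel workload like this, here is a minimal sketch of a simple Amdahl-style timing model. The two chips, their clock speeds, and the work split are hypothetical values chosen for illustration only; they are not measurements of the W-2155 or W-2195, and a real compile is affected by memory, storage, and power limits that this model ignores.

```python
def estimated_time(serial_work, parallel_work, cores, single_core_ghz, all_core_ghz):
    """Estimate run time for a job with a serial part and a perfectly parallel part.

    Work is expressed in arbitrary units of "GHz-seconds" (assumption: throughput
    scales linearly with clock speed and, for the parallel part, with core count).
    """
    serial_time = serial_work / single_core_ghz
    parallel_time = parallel_work / (all_core_ghz * cores)
    return serial_time + parallel_time


# Hypothetical chips: A has fewer cores but higher clocks, B has more cores.
CHIP_A = {"cores": 10, "single_core_ghz": 4.5, "all_core_ghz": 4.0}
CHIP_B = {"cores": 18, "single_core_ghz": 4.3, "all_core_ghz": 3.2}

# Hypothetical workload: half of the work is serial (e.g. linking), half is parallel.
mixed_a = estimated_time(500.0, 500.0, **CHIP_A)
mixed_b = estimated_time(500.0, 500.0, **CHIP_B)

# The same total work, but fully parallel.
parallel_a = estimated_time(0.0, 1000.0, **CHIP_A)
parallel_b = estimated_time(0.0, 1000.0, **CHIP_B)

print(f"mixed workload:    A={mixed_a:.1f}s  B={mixed_b:.1f}s")
print(f"parallel workload: A={parallel_a:.1f}s  B={parallel_b:.1f}s")
```

Under these assumed numbers the higher-clocked chip finishes the mixed workload first, while the higher-core-count chip wins once the work is fully parallel.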

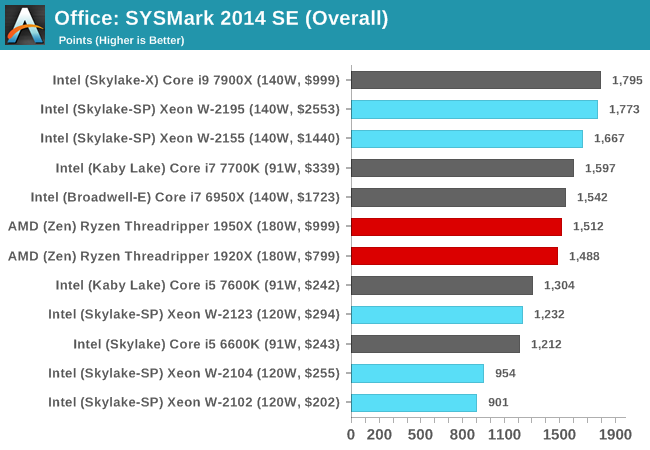

SYSmark 2014 SE: link

SYSmark is developed by BAPCo, a consortium of industry CPU companies. The goal of SYSmark is to take stripped down versions of popular software, such as Photoshop and OneNote, and measure how long it takes to process certain tasks within that software. The end result is a score for each of the three segments (Office, Media, Data) as well as an overall score. Here a reference system (Core i3-6100, 4GB DDR3, 256GB SSD, Integrated HD 530 graphics) is used to provide a baseline score of 1000 in each test.

A note on context for these numbers: AMD left BAPCo in the last two years due to differences of opinion on how the benchmarking suites were chosen. AMD believed the tests were angled towards Intel processors and had optimizations that showed bigger differences than what AMD felt was present. The following benchmarks are provided as data, with the conflict of opinion between the two companies on the validity of the benchmark given as context.
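As a rough illustration of how a reference-normalized score like this can be read, the sketch below scales task times against a baseline so that the reference system lands on 1000. The formula, the geometric-mean overall score, and all timings are assumptions made for illustration; this is not BAPCo's published scoring method.

```python
import math

REFERENCE_SCORE = 1000  # the reference system scores 1000 by definition


def relative_score(reference_seconds, measured_seconds):
    """Score a system against the reference: half the time gives double the score.

    Assumption: score is inversely proportional to completion time.
    """
    return REFERENCE_SCORE * reference_seconds / measured_seconds


def overall_score(segment_scores):
    """Combine segment scores with a geometric mean (an assumed choice)."""
    log_sum = sum(math.log(score) for score in segment_scores)
    return math.exp(log_sum / len(segment_scores))


# Hypothetical timings in seconds for the three segments: (reference, system under test).
timings = {"Office": (120.0, 80.0), "Media": (300.0, 150.0), "Data": (200.0, 125.0)}

segment_scores = {name: relative_score(ref, test) for name, (ref, test) in timings.items()}
for name, score in segment_scores.items():
    print(f"{name}: {score:.0f}")
print(f"Overall: {overall_score(list(segment_scores.values())):.0f}")
```

A score of 2000 in a segment would then mean the system completed that segment's tasks in half the reference system's time.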

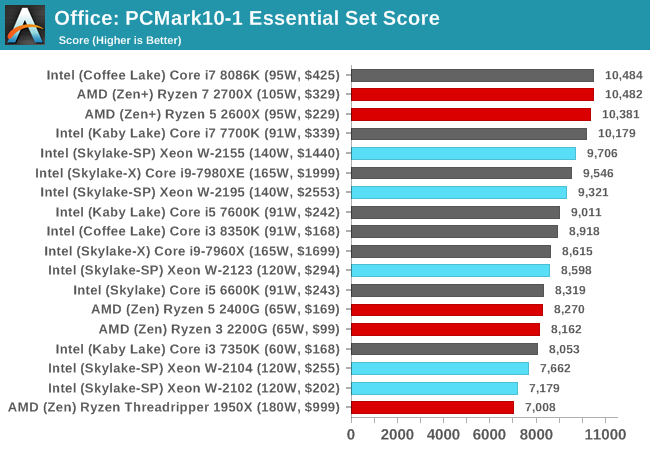

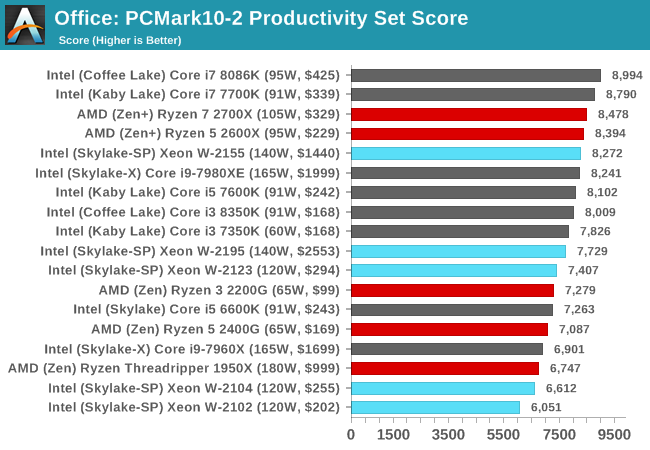

PCMark 10: link

PCMark 10 is the latest all-in-one office-related performance tool that combines a number of tests for low-to-mid office workloads, including some gaming, but focusing on aspects like document manipulation, response, and video conferencing.

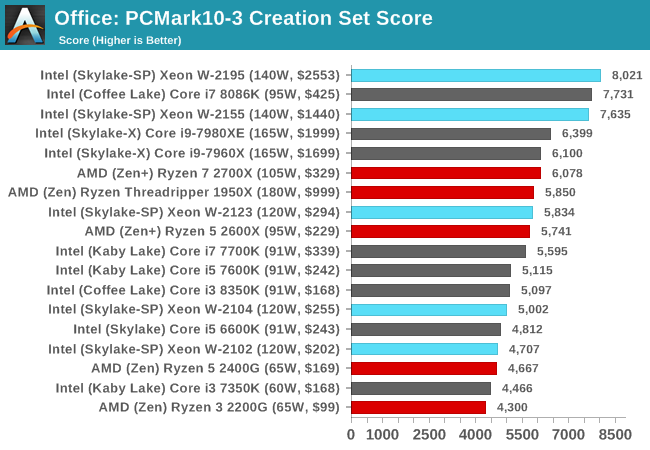

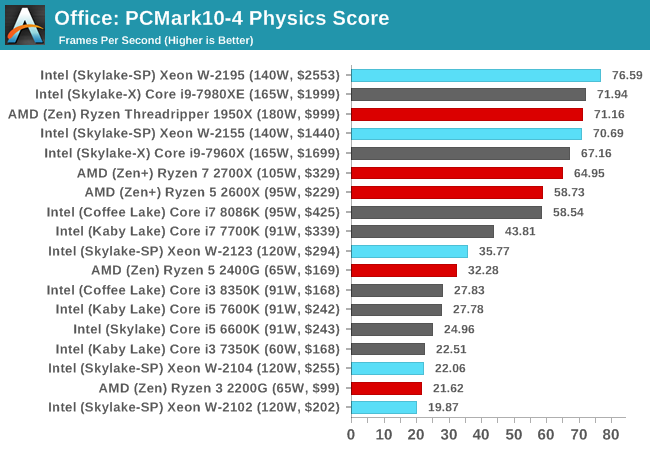

In the Physics score, the W-2195 takes a commanding lead, although the W-2155 is not far behind, offering better performance per dollar. Both are outclassed by the Threadripper 1950X in this test, however. In fact, the only test where Xeon W truly wins is the Creation test.

GeekBench4: link

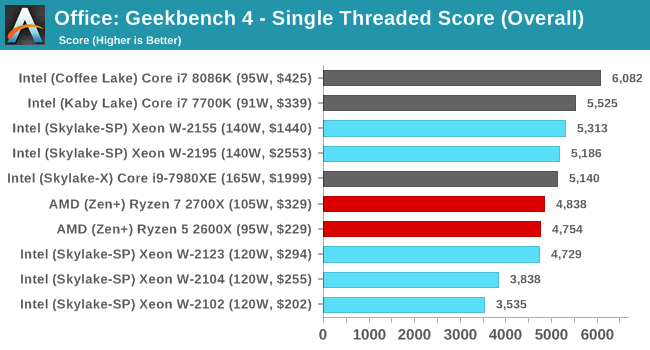

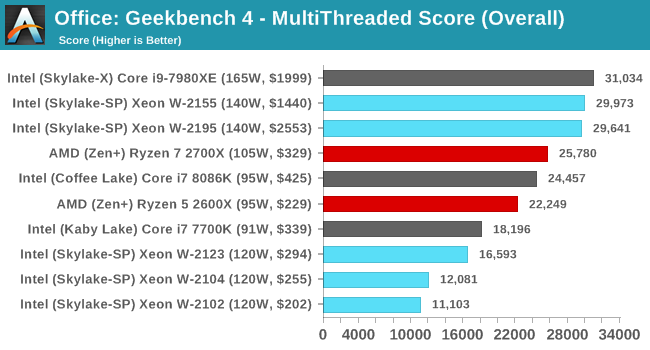

GB4 is a popular tool in benchmarking, with most users liking its cross-platform functionality. Due to requests, we are including the data in our reviews. Our benchmark database has a more detailed breakdown of the sub-sections in the test.

GeekBench 4 is still a newer benchmark in our test suite, hence the lack of comparative results.

PCMark8: link

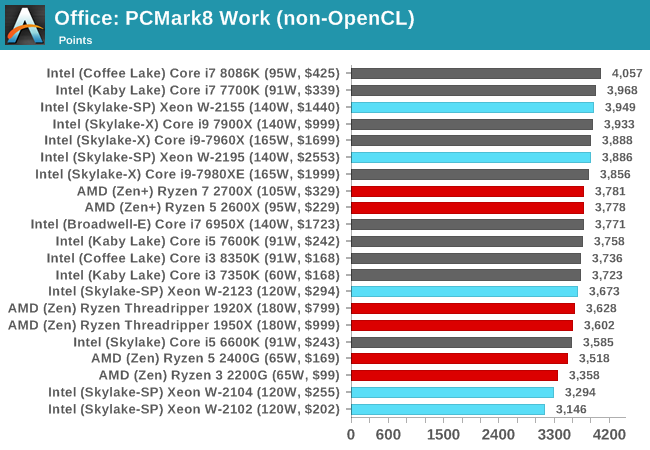

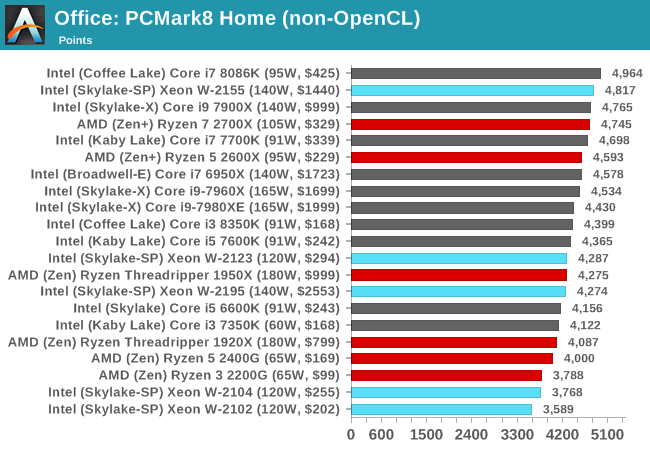

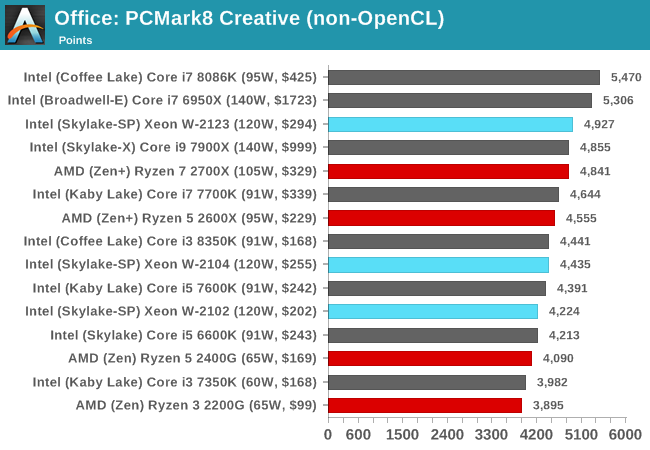

Despite PCMark8 originally coming out in 2008/2009, Futuremark has maintained it to remain relevant in 2017. On the scale of complicated tasks, PCMark focuses more on the low-to-mid range of professional workloads, making it a good indicator for what people consider 'office' work. We run the benchmark from the command line in 'conventional' mode, meaning C++ over OpenCL, to remove the graphics card from the equation and focus purely on the CPU. PCMark8 offers Home, Work and Creative workloads, with some software tests shared and others unique to each benchmark set.

74 Comments

HStewart - Monday, July 30, 2018 - link

I am curious why Xeon W for the same core count is typically slower than Core X - also I notice the Scalable CPUs have much more functionality, especially related to reliability, in essence to keep the system running 24/7. The Scalable CPUs also appear to have 6 channel memory instead of 4 channel memory. I wonder when 6 channel memory comes to consumer level CPUs. One test that would be interesting is to compare a Xeon W against a Scalable CPU with the same core count in a single-CPU system.

Another test that could be interesting is a dual CPU Scalable system with, say, 2 12-core CPUs versus 1 24-core CPU on the same level.

Just a test to see what happens with more cores vs more CPUs.

duploxxx - Monday, July 30, 2018 - link

one threadripper 2.0 and you can throw all intel configs here into the bin

tricomp - Monday, July 30, 2018 - link

Yea

HStewart - Monday, July 30, 2018 - link

I wish people keep the topic to the subject and not blab about competitor products

duploxxx - Tuesday, July 31, 2018 - link

if you would know anything about cpu scalable systems you would not ask these questions. a 2*12 vs 1*24 will be roughly 20% slower if your application scales across the total core count due to in between socket communication. Even Intel provides data sheets on that. No need to test.

as long as intel can screw consumers they will not invest anything, you won't get 6 mem lanes in xeon W or consumer unless competition does it and they get nailed. btw why on earth would you need that on a consumer platform?

BurntMyBacon - Tuesday, July 31, 2018 - link

If all things are equal, then what you say is true. There is a known performance drop due to intersocket communications. However, you may have more TDP headroom (depends on the chips you are using) and most likely more effective cooling with two sockets, allowing for higher frequencies with the same number of active cores. If the workload doesn't require an abundance of socket to socket communications, then it is conceivable that the two socket solution may have merit in such circumstances.

SanX - Tuesday, July 31, 2018 - link

Why ARM is just digging its buggers watching the game where it can beat Intel? Where are ARM server and supercomputer chips? ARM processors soon surpass Intel in transistor count. And for the same amount of transistors ARM is 50-100x cheaper than duopoly Intel/AMD. As an additional advantage for ARM these two segments will soon completely abandon Microsoft.

beggerking@yahoo.com - Thursday, August 2, 2018 - link

ARM is RISC which is completely different from CISC so applications and os are limited. Microsoft server os has really evolved in every aspect in the last few years that may take RISC years to catch up on the software side.

JoJ - Saturday, August 4, 2018 - link

ARM is Fujitsu's choice of successor core to SPARC64+, an architecture Fujitsu invested decades of research and development and testing to offer both commercially and at a national laboratory supercomputing level. ARM is therefore not a knee jerk choice of direction for a very interesting super builder.

Obviously you exaggerated a little bit, saying ARM is "50 - 100 times cheaper than AMD/Intel".

I wish I could shake my belief that pedantic literalism in Internet forums in general wasn't preventing broad discussion - we exaggerate in real life without any socially degrading effects, why not online?

OR are your conversation parties sniffing that obviously -- any person who inadvertently speaks technically inaccurately despite forming perfectly understandable inquiry... as if they are unwashed know nothings, and turning on their heels to end the discussion.....a bit like HN's "we don't tolerate humor here" reactions to innocent attempts at lightening the thread...

but I digress, my point here is your comment above raised a couple of interesting questions, that I feel haven't been answered only because I think readers by themselves first over react to hyperbole, then infil the accepted wisdom to answer your questions, despite your asking about pertinent value critical concerns. I feel that by supplying the answer and dismissing the comment as uninformed, the most important thing happening is the reader voluntarily self reinforcing given marketing positions, and not engaging with the subject at all. I work in advertising and am actually studying this, because advertising buyers adore this kind of "mind share" but we think that is at odds with the advertising buyers wanting "open minded, engaging, adaptable, innovative" customers.

1. have a look at ServeTheHome's review of the Cavium ARM server generations. This architecture is definitely viable and competitive now in an increasing number of application areas.

2. Microsoft Azure has ARM deployed in my estimation at scale second only to Baidu. I am tempted to think it's actually politics that prevents an ARM Azure server machine offering to commercial users, little else. The problem with Microsoft is user expectation of an all round performance consistency, and Intel and Microsoft have been working on that smooth delivery for decades.

3. ARM is bit cheaper if you need to do more than a quick recompile with a few architecture options selected.

re when we will see an Azure ARM instance, I think could even be waiting for the ability for Cavium to actually deliver hardware, because unmet demand is a fatal blow to new technology, as well as successful realisation.

All my "quality time" with our server fleet is spent all hands on the thermal and power profiles of our applications.

We will rewrite to gain fractions of a percentage point where it's a consistent number across runs. Since twenty five years ago, I crashed a colo cage by not considering the power on start surge of a huge half terabyte raid array, power loads obsessed me. Power usage in Cavium ARM looks like a winner for us.

4. BUT I said that, based on data mapping dense thermal sensor arrays, with the functional code paths of the actual application logic in flight across the fleet, at the time. If we're able to calculate the cost benefit of routing a new application function to a specific server, depending on the thermal load and core behaviour at the time of dispatch, I admit we're not very typical for a small scale customer. I think small is a server count below 10,000 here, including any peak on demand usage in case you're consumer retail and sell half price Gucci shoes on Black Monday.

(we got surprised by the reliability of gains from very crude information. Originally we just wanted to see if we could balance the flows in the hot aisle, and even throttle hotspot buildup if we lost some cooling locally. For Intel, we got lots of gains, by sending jobs to not exceed the optimal max turbo clock of a processor, and immediately filling out the slower cores with background chores. AMD and Cavium ARM are not as sophisticated about thermal management, where Intel is keen on overkill recently, eg four nigh identical Xeon Gold SKUs. Just do really read that STH review about this "redundancy" of the Xeon processor parts - I came away with a purchase order for the reviewed SKU, because we're so excited about the power management system roles in production deployment, as a competitive advantage.)

5. REAL COST ADVANTAGE DEPENDS ON CHANNEL PENETRATION, WITH AMD AT 2%, yes, TWO percent is considered healthy for them today, AMD need to be shipping in far greater volume, to move the money dial to realise the kind of cost advantage SanX is excited about.

Certification of countless applications is hardly begun...

I want to use an ARM workstation, to eat my dog food. This necessitates NVIDIA Quadro cards support. Yes, I write for a living. I target CUDA for an ever increasing proportion of customer needs. SURE I can just remote machines at will. BUT IF YOU DON'T GIVE CRITICAL DEVELOPERS TRULY GREAT HARDWARE, YOU'RE ABANDONING THE PLATFORM FOR ANY IDEA OF GENERAL DEPLOYMENT.

6. Probably the last sentence should have been standalone here.

I'll just say that we need a workstation as cool as the Silicon Graphics Indy of '93, to get a chance of getting a new GENERAL purpose platform in the mainstream soon.

7. I am constantly both astounded by the simple fact that we have a chip that good to compete at all, yet scared because I am starting to wonder if we'll ever see sales above "bargaining power level" and platform insurance, and the niche market for companies able to extract whole value chains from controlling their entire software ecosystem, something almost nobody in the real world can do.

JoJ - Saturday, August 4, 2018 - link

typo, mea, in point 3, I meant to say, "ARM is NOT cheaper, if you need to do more than a quick recompile.."