Microsoft's Xbox 360, Sony's PS3 - A Hardware Discussion

by Anand Lal Shimpi & Derek Wilson on June 24, 2005 4:05 AM EST - Posted in GPUs

PlayStation 3’s GPU: The NVIDIA RSX

We’ve mentioned countless times that the PlayStation 3 has the more PC-like GPU out of the two consoles we’re talking about here today, and after this week’s announcement, you now understand why.

The PlayStation 3’s RSX GPU shares the same “parent architecture” as the G70 (GeForce 7800 GTX), much in the same way that the GeForce 6600GT shares the same parent architecture as the GeForce 6800 Ultra. Sony isn’t ready to unveil exactly what is different between the RSX and the G70, but based on what’s been introduced already, as well as our conversations with NVIDIA, we can gather a few items.

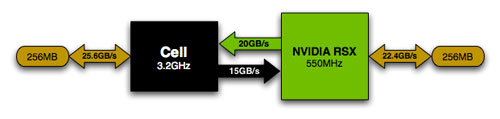

Despite the fact that the RSX comes from the same lineage as the G70, there are a number of changes to the core. The biggest change is that RSX supports rendering to both local and system memory, similar to NVIDIA’s Turbo Cache enabled GPUs. Rendering to or from local memory obviously has much lower latency than sending a request to the Cell’s memory controller, so much of the architecture of the GPU has to be changed in order to accommodate this higher latency access to system memory. Buffers and caches have to be made larger to keep the rendering pipelines full despite the higher latency memory access. If the chip is properly designed to hide this latency, then there is generally no performance sacrifice, only an increase in chip size thanks to the use of larger buffers and caches.

The RSX only has about 60% of the local memory bandwidth of the G70, so in many cases it will have to share the bandwidth of the CPU’s memory bus in order to achieve performance targets.

There is one peculiarity that hasn’t exactly been resolved, and that is about transistor counts. Both the G70 and the RSX share the same estimated transistor count of approximately 300.4 million transistors. The RSX is built on a 90nm process, so in theory NVIDIA would be able to pack more onto the die without increasing chip size at all - but if the transistor counts are identical, that points to more similarity between the two cores than NVIDIA has led us to believe. So is the RSX nothing more than the G70? It’s highly unlikely that the GPUs are identical, especially considering that the mere addition of Turbo Cache to the part would drive up transistor counts quite a bit. So how do we explain that the two GPUs are different, yet have the same transistor count, and that one is supposed to be more powerful than the other? There are a few possible options.

First and foremost, you have to keep in mind that these are not exact transistor counts - they are estimates. Transistor count is determined by looking at the number of gates in the design, and multiplying that number by the average number of transistors used per gate. So the final transistor count won’t be exact, but it will be close enough to reality. Remember that these chips are computer designed and produced, so it’s not like someone is counting each and every transistor by hand as they go into the chip.
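To make the estimation method concrete, here is a minimal sketch of the gates-times-average calculation described above. The gate count and the per-gate average are hypothetical values, picked only so that the product lands at the quoted 300.4 million; they are not NVIDIA's figures.

```python
def estimate_transistors(gate_count: int, avg_transistors_per_gate: float) -> float:
    """Estimate transistor count as gates x average transistors per gate."""
    return gate_count * avg_transistors_per_gate


# Hypothetical inputs, chosen only so the product matches the quoted estimate.
gates = 75_100_000
per_gate = 4.0

estimate = estimate_transistors(gates, per_gate)
print(f"{estimate / 1e6:.1f} million transistors")

# A small error in the assumed per-gate average moves the total by millions,
# which is why the published figure should be read as an estimate.
for error in (-0.02, 0.02):
    shifted = estimate_transistors(gates, per_gate * (1 + error))
    print(f"{error:+.0%} per-gate error -> {shifted / 1e6:.1f} million")
```

Even a 2% error in the assumed average shifts the result by several million transistors, so two chips reporting the same estimate need not be identical down to the gate.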

So it is possible that NVIDIA’s estimates are slightly off for the two GPUs, but at approximately 10 million transistors per pixel pipe, it doesn’t seem very likely that the RSX will feature more than the 24 pixel rendering pipelines of the GeForce 7800 GTX. Yet NVIDIA claims it is more powerful than the GeForce 7800 GTX. How can that be? There are a couple of options:

The most likely explanation is nothing more than clock speed. Remember that the RSX, being built on a 90nm process, is supposed to be running at 550MHz - a 28% increase in core clock speed from the 110nm GeForce 7800 GTX. The clock speed increase alone will account for a good boost in GPU performance, which would make the RSX “more powerful” than the G70.
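The 28% figure can be checked with a quick calculation. The sketch below assumes the GeForce 7800 GTX's stock core clock of 430MHz, a number that is not stated in this article; the 550MHz RSX clock is the one quoted above.

```python
def clock_increase(new_mhz: float, old_mhz: float) -> float:
    """Return the fractional increase of new_mhz over old_mhz."""
    return new_mhz / old_mhz - 1.0


RSX_CORE_MHZ = 550  # quoted in the article
G70_CORE_MHZ = 430  # assumption: stock GeForce 7800 GTX core clock

increase = clock_increase(RSX_CORE_MHZ, G70_CORE_MHZ)
print(f"RSX core clock is {increase:.0%} higher than the G70's")
```

With the same number of pipelines, peak pixel throughput scales roughly with core clock, so this gap alone could justify the "more powerful" claim.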

There is one other possibility, one that is more far-fetched but worth discussing nonetheless. NVIDIA could offer a chip that featured the same transistor count as the desktop G70, but with significantly more power, if the RSX featured no vertex shader pipes and instead used that die space for additional pixel shading hardware.

Remember that the Cell host processor has an array of 7 SPEs that are very well suited for a number of non-branching tasks, including geometry processing. Also keep in mind that current games favor creating realism through more pixel operations rather than creating more geometry, so GPUs aren’t very vertex shader bound these days. Then, note that the RSX has a high bandwidth 35GB/s interface between the Cell processor and the GPU itself - definitely enough to place all vertex processing on the Cell processor itself, freeing up the RSX to exclusively handle pixel shader and ROP tasks. If this is indeed the case, then the RSX could very well have more than 24 pipelines and still have a similar transistor count to the G70, but if it isn’t, then it is highly unlikely that we’d see a GPU that looked much different than the G70.

The downside to the RSX using the Cell for all vertex processing is pretty significant. Remember that the RSX only has a 22.4GB/s link to its local memory, which is less than 60% of the memory bandwidth of the GeForce 7800 GTX. In other words, it needs that additional memory bandwidth from the Cell’s memory controller to be able to handle more texture-bound games. If a good portion of the 15GB/s downstream link from the Cell processor is used for bandwidth between the Cell’s SPEs and the RSX, the GPU will be texture bandwidth limited in some situations, especially at resolutions as high as 1080p.
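The bandwidth trade-off can be roughed out numerically. The sketch below uses the 22.4GB/s and 15GB/s figures quoted above and assumes 38.4GB/s for the GeForce 7800 GTX (a 256-bit bus at an effective 1.2 GT/s, which is not stated in this article). The `vertex_share` parameter and the simple additive model are hypothetical simplifications that ignore latency and contention.

```python
RSX_LOCAL_GBPS = 22.4        # quoted in the article
CELL_DOWNSTREAM_GBPS = 15.0  # quoted in the article
G70_LOCAL_GBPS = 38.4        # assumption: 256-bit bus at 1.2 GT/s effective


def rsx_bandwidth(vertex_share: float) -> float:
    """Local bandwidth plus the part of the Cell link not carrying vertex data.

    vertex_share is the hypothetical fraction (0 to 1) of the downstream
    link consumed by geometry sent from the SPEs to the GPU.
    """
    if not 0.0 <= vertex_share <= 1.0:
        raise ValueError("vertex_share must be between 0 and 1")
    return RSX_LOCAL_GBPS + CELL_DOWNSTREAM_GBPS * (1.0 - vertex_share)


local_ratio = RSX_LOCAL_GBPS / G70_LOCAL_GBPS
print(f"RSX local bandwidth is {local_ratio:.1%} of the G70's")

for share in (0.0, 0.5, 1.0):
    print(f"vertex share {share:.0%}: {rsx_bandwidth(share):.1f} GB/s available")
```

Under these assumptions the RSX only approaches the desktop part's bandwidth when the Cell link is left almost entirely free for texture traffic, which is the tension described above.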

This option is a much more far-fetched explanation, but it is possible. Only time will tell what the shipping configuration of the RSX will be.

93 Comments

Doormat - Friday, June 24, 2005 - link

@#22: Yes 1080P is an OFFICIAL ATSC spec. There are 18 different video formats in the ATSC specification. 1080/60P is one of them.

FWIW, even the first 1080P TVs coming out this year will *NOT* support 1080P input over HDMI. Why? I dunno. The TVs will upscale everything to 1080P (from 1080i, 720p, etc), but they can't accept input as 1080P. Some TVs will be able to do it over VGA (the Samsung HLR-xx68/78/88s will), but still that's not the highest quality input.

Pastuch - Friday, June 24, 2005 - link

RE: 1080P

"We do think it was a mistake for Microsoft not to support 1080p, even if only supported by a handful of games/developers."

I couldn't disagree more. At the current rate of HDTV adoption we'll be lucky if half of the Xbox 360 users have 1280x720 displays by 2010. Think about how long it took for us to get past 480i. Average Joe doesn't like to buy new TVs very often. Unless 1080P HDTVs drop to $400 or less no one will buy them for a console. We the eager geeks of Anandtech will obviously have 42" widescreen 1080P displays but we are far from the Average Joe.

RE: Adult Gamers

Anyone who thinks games are for kids needs a wakeup call. The largest player base of gamers is around 25 years old right now. By 2010 we will be daddies looking for our next source of interactive porn. I see mature sexually oriented gaming taking off around that time. I honestly believe that videogames will have the popularity of television in the next 20 years. I know a ton of people that don't have cable TV but they do have cable internet, a PC, xbox, PS2 and about a million games for each device.

Pannenkoek - Friday, June 24, 2005 - link

#19 fitten: That's the whole point, people pretend that even rotten fruit laying on the ground is "hard" to pick up. It's not simply about restructuring algorithms to accommodate massive parallelism, but also how it will take ages and how no current game could be patched to run multithreaded on a mere dual core system.

Taking advantage of parallelism is a hot topic in computer science as far as I can tell and there are undoubtedly many interesting challenges involved. But that's no excuse for not being able to simply multithread a simple application.

And before people cry that game engines are comparable to rocket science (pointing to John Carmack's endeavours) and are the bleeding edge technology in software, I'll say that's simply not the reality, and even less an excuse to not be able to take advantage of parallelism.

Indeed, game developers are not making that excuse and will come with multithreaded games once we have enough dual core processors and when their new games stop being videocard limited. Only Anandtech thinks that multithreading is a serious technical hurdle.

This and those bloody obnoxious "sponsored links" all through the text of articles are the only serious objections I have towards Anandtech.

jotch - Friday, June 24, 2005 - link

#26 - yeah i know that happens all over but I was just commenting on the fact that the console's market is mainly teens and adults not mainly kids.

expletive - Friday, June 24, 2005 - link

"If you’re wondering whether or not there is a tangible image quality difference between 1080p and 720p, think about it this way - 1920 x 1080 looks better on a monitor than 1280 x 720, now imagine that blown up to a 36 - 60” HDTV - the difference will be noticeable. "

This statement should be further qualified. There is only a tangible benefit to 1080p if the display device is native 1080p resolution. Otherwise, the display itself will scale the image down to its native resolution (i.e. 720p for most DLP televisions). If your display is native 720p then you're better off outputting 720p because all that extra processing is being wasted.

There are only a handful of TVs that support native 1080p right now and they are all over $5k.

These points are really important when discussing the real-world applications of 1080p for a game console. The people using this type of device (a $300 game console) are very different than those that go out and buy 7800GTX cards the first week they are released. Based on my reading in the home theater space, less than 10% of the people that own a PS3 will be able to display 1080p natively during its lifecycle (5 years).

Also, can someone explain how the Xenos unified shaders were distilled from 48 down to 24 in this article? That didn't quite make sense to me...

John

nserra - Friday, June 24, 2005 - link

I was at the supermarket, and there was a kid (12 year old girl) buying the game that you mention with the daddy that knows sh*t about games, and about looking for the 18 year old logo.

Maybe if they put a pen*s on the box instead of the cartoon girl, some dads will then know the difference between a game for an 8 year old and an 18.

#21 I don’t know about your country, but this is what happens in mine and not only with games.

knitecrow - Friday, June 24, 2005 - link

would you be able to tell the difference at Standard resolution?

instead of drawing more pixels on the screen, the revolution can use that processing power and/or die space for other functions... e.g. shaders

If the revolution opts to pick an out-of-order processor, something like PPC970FX, i don't see why it can't be competitive.

But seriously, all speculation aside, the small form factor limits the amount of heat components can put out, and the processing power of the system.

perseus3d - Friday, June 24, 2005 - link

--"Sony appears to have the most forward-looking set of outputs on the PlayStation 3, featuring two HDMI video outputs. There is no explicit support for DVI, but creating a HDMI-to-DVI adapter isn’t too hard to do. Microsoft has unfortunately only committed to offering component or VGA outputs for HD resolutions."--

Does that mean, as it stands now, the PS3 will require an adapter to be played on an LCD Monitor, and the X360 won't be able to be used with an LCD Monitor with DVI?

Dukemaster - Friday, June 24, 2005 - link

At least we know Nintendo's Revolution is the lozer when it comes to pure power.

freebst - Friday, June 24, 2005 - link

I just wanted to remind everyone that 1080P at 60 Frames isn't even an approved ATSC Signal. 1080P at 30 and 24 frames is, but not 60. 1280x720 can run at 60, 30, and 24 that is unless you are running at 50 or 25 frames/sec in Europe.