The 2020 Mac Mini Unleashed: Putting Apple Silicon M1 To The Test

by Andrei Frumusanu on November 17, 2020 9:00 AM EST

Section by Ryan Smith

M1 GPU Performance: Integrated King, Discrete Rival

While the bulk of the focus from the switch to Apple’s chips is on the CPU cores, and for good reason – changing the underlying CPU architecture of your computers is no trivial matter – the GPU aspects of the M1 are not to be ignored. Like their CPU cores, Apple has been developing their own GPU technology for a number of years now, and with the shift to Apple Silicon, those GPU designs are coming to the Mac for the very first time. And from a performance standpoint, it’s arguably an even bigger deal than Apple’s CPU.

Apple, of course, has long held a reputation for demanding better GPU performance than the average PC OEM. Whereas many of Intel’s partners were happy to ship systems with Intel UHD graphics and other baseline solutions even in some of their 15-inch laptops, Apple opted to ship a discrete GPU in their 15-inch MacBook Pro. And when they couldn’t fit a dGPU in the 13-inch model, they instead used Intel’s premium Iris GPU configurations with larger GPUs and an on-chip eDRAM cache, becoming one of the only regular customers for those more powerful chips.

So it’s been clear for some time now that Apple has long wanted better GPU performance than what Intel offers by default. By switching to their own silicon, Apple finally gets to put their money where their mouth is, so to speak, by building a laptop SoC with all the GPU performance they’ve ever wanted.

Meanwhile, unlike the CPU side of this transition to Apple Silicon, the higher-level nature of graphics programming means that Apple isn’t nearly as reliant on devs to immediately prepare universal applications to take advantage of Apple’s GPU. To be sure, native CPU code is still going to produce better results since a workload that’s purely GPU-limited is almost unheard of, but the fact that existing Metal (and even OpenGL) code can be run on top of Apple’s GPU today means that it immediately benefits all games and other GPU-bound workloads.

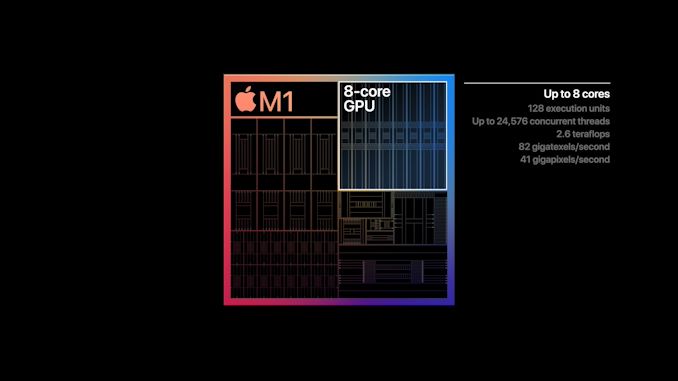

As for the M1 SoC’s GPU, unsurprisingly it looks a lot like the GPU from the A14. Apple will have needed to tweak their design a bit to account for Mac sensibilities (e.g. various GPU texture and surface formats), but by and large the difference is abstracted away at the API level. Overall, with M1 being A14-but-bigger, Apple has scaled up their 4 core GPU design from that SoC to 8 cores for the M1. Unfortunately we have even less insight into GPU clockspeeds than we do CPU clockspeeds, so it’s not clear if Apple has increased those at all; but I would be a bit surprised if the GPU clocks haven’t at least gone up a small amount. Overall, A14’s 4 core GPU design was already quite potent by smartphone standards, so an 8 core design is even more so. M1’s integrated GPU isn’t just designed to outpace AMD and Intel’s integrated GPUs, but it’s designed to chase after discrete GPUs as well.

| An Educated Guess At Apple GPU Specifications | M1 |
|---|---|
| ALUs | 1024 (128 EUs/8 Cores) |
| Texture Units | 64 |
| ROPs | 32 |
| Peak Clock | 1278MHz |
| Throughput (FP32) | 2.6 TFLOPS |
| Memory Clock | LPDDR4X-4266 |
| Memory Bus Width | 128-bit (IMC) |
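As a rough sanity check on the table above, the FP32 figure can be reproduced from the ALU count and peak clock. This is a minimal sketch, assuming each ALU retires one fused multiply-add (counted as 2 FLOPs) per clock; that factor, the function names, and the derived memory bandwidth figure are assumptions for illustration and not numbers Apple has published.

```python
# Inputs come from the estimated spec table; the table itself is an educated guess.
ALUS = 1024                       # 128 EUs across 8 GPU cores
PEAK_CLOCK_HZ = 1278e6            # 1278 MHz
FLOPS_PER_ALU_PER_CLOCK = 2       # assumption: one fused multiply-add = 2 FLOPs

MEMORY_TRANSFERS_PER_S = 4266e6   # LPDDR4X-4266
BUS_WIDTH_BITS = 128              # 128-bit integrated memory controller


def peak_fp32_tflops(alus: int, clock_hz: float,
                     flops_per_clock: int = FLOPS_PER_ALU_PER_CLOCK) -> float:
    """Theoretical peak FP32 throughput in TFLOPS."""
    return alus * flops_per_clock * clock_hz / 1e12


def peak_bandwidth_gbs(transfers_per_s: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s (bytes per transfer x transfer rate)."""
    return transfers_per_s * (bus_width_bits / 8) / 1e9


fp32 = peak_fp32_tflops(ALUS, PEAK_CLOCK_HZ)
bandwidth = peak_bandwidth_gbs(MEMORY_TRANSFERS_PER_S, BUS_WIDTH_BITS)

print(f"Peak FP32 throughput:  {fp32:.2f} TFLOPS")
print(f"Peak memory bandwidth: {bandwidth:.1f} GB/s")
```

With the table's values this works out to about 2.6 TFLOPS, in line with the listed throughput, and a theoretical peak of roughly 68 GB/s for the memory interface. Both are paper maximums and say nothing about sustained performance.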

Finally, it should be noted that Apple is shipping two different GPU configurations for the M1. The Mac Mini and MacBook Pro get chips with all 8 GPU cores enabled. Meanwhile for the MacBook Air, it depends on the SKU: the entry-level model gets a 7-core configuration, while the higher-tier model gets 8 cores. This means the entry-level Air gets the weakest GPU on paper – trailing a full M1 by around 12% – but it will be interesting to see how the shut-off core influences thermal throttling on that passively-cooled laptop.
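The "around 12%" figure follows directly from the core counts. A small sketch of that arithmetic, assuming (hypothetically) that both configurations run at the same clock and that throughput scales linearly with enabled cores, which is only a paper comparison:

```python
ALUS_PER_CORE = 128   # from the estimated 1024 ALUs spread over 8 cores
FULL_CORES = 8


def on_paper_deficit(enabled_cores: int, full_cores: int = FULL_CORES) -> float:
    """Fraction of peak throughput lost when some cores are disabled.

    Assumes identical clocks and perfectly linear scaling with core count.
    """
    return 1 - enabled_cores / full_cores


air_entry_alus = 7 * ALUS_PER_CORE
deficit = on_paper_deficit(7)

print(f"7-core ALU count: {air_entry_alus}")
print(f"On-paper deficit vs. full M1: {deficit:.1%}")
```

In practice the gap could be narrower or wider than 12.5%, since thermal behavior on the fanless Air is not captured by this calculation.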

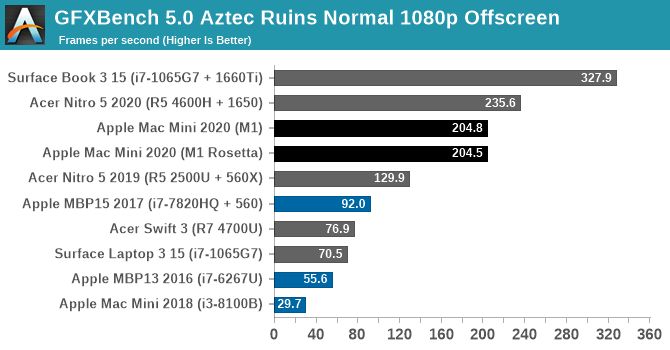

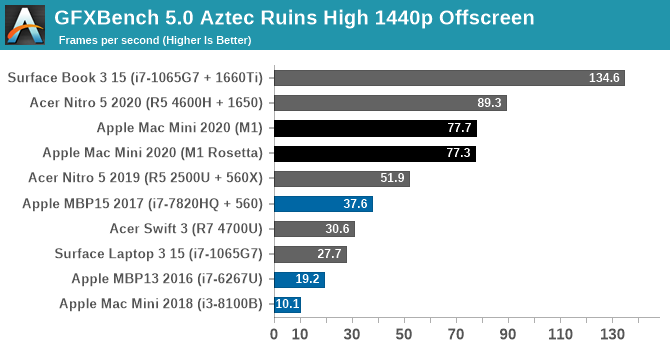

Kicking off our look at GPU performance, let’s start with GFXBench 5.0. This is one of our regular benchmarks for laptop reviews as well, so it gives us a good opportunity to compare the M1-based Mac Mini to a variety of other CPU/GPU combinations inside and outside the Mac ecosystem. Overall this isn’t an entirely fair test since the Mac Mini is a small desktop rather than a laptop, but as M1 is a laptop-focused chip, this at least gives us an idea of how M1 performs when it gets to put its best foot forward.

Overall, the M1’s GPU starts off very strong here. At both Normal and High settings it’s well ahead of any other integrated GPU, and even a discrete Radeon RX 560X. Only once we get to NVIDIA’s GTX 1650 and better does the M1 finally run out of gas.

The difference compared to the 2018 Intel Mac Mini is especially night-and-day. The Intel UHD graphics (Gen 9.5) GPU in that system is vastly outclassed to the point of near-absurdity, with the M1 delivering a performance gain of over 6x. And even other configurations such as the 13-inch MBP with Iris graphics, or a PC with a Ryzen 4700U (Vega 7 graphics) are all handily surpassed. In short, the M1 in the new Mac Mini is delivering discrete GPU-levels of performance.

As an aside, I also took the liberty of running the x86 version of the benchmark through Rosetta, in order to take a look at the performance penalty. In GFXBench Aztec Ruins, at least, there is none. GPU performance is all but identical with both the native binary and with binary translation.

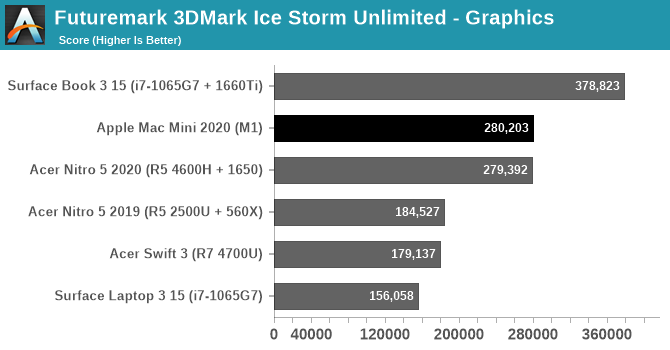

Taking one last quick look at the wider field with an utterly silly synthetic benchmark, we have 3DMark Ice Storm Unlimited. Thanks to the ability for Apple Silicon Macs to run iPhone/iPad applications, we’re able to run this benchmark on a Mac for the first time by running the iOS version. This is a very old benchmark, built for the OpenGL ES 2.0 era, but it’s interesting that it fares even better than GFXBench. The Mac Mini performs just well enough to slide past a GTX 1650 equipped laptop here, and while this won’t be a regular occurrence, it goes to show just how potent M1 can be.

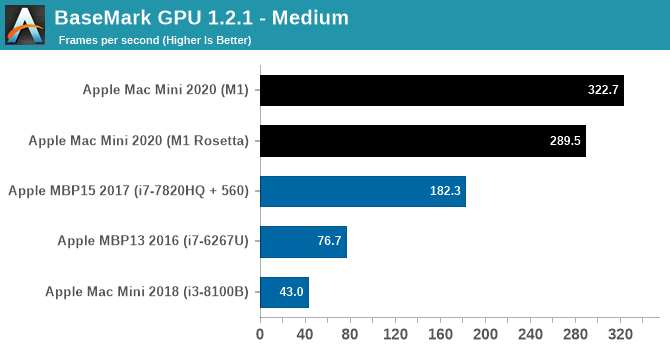

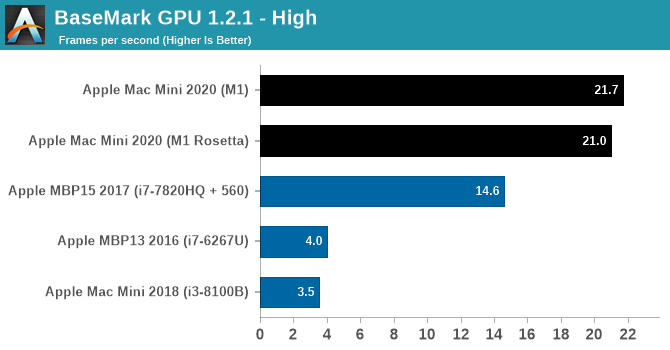

Another GPU benchmark that’s been updated for the launch of Apple’s new Macs is BaseMark GPU. This isn’t a regular benchmark for us, so we don’t have scores for other, non-Mac laptops on hand, but it gives us another look at how M1 compares to other Mac GPU offerings. The 2020 Mac Mini still leaves the 2018 Intel-based Mac Mini in the dust, and for that matter it’s at least 50% faster than the 2017 MacBook Pro with a Radeon Pro 560 as well. Newer MacBook Pros will do better, of course, but keep in mind that this is an integrated GPU with the entire chip drawing less power than just a MacBook Pro’s CPU, never mind the discrete GPU.

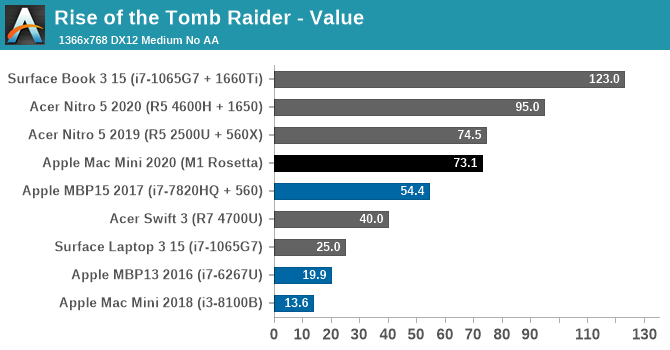

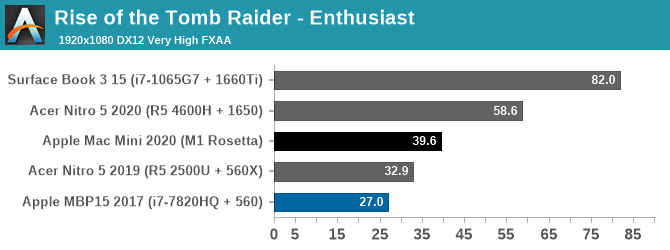

Finally, putting theory to practice, we have Rise of the Tomb Raider. Released in 2016, this game has a proper Mac port and a built-in benchmark, allowing us to look at the M1 in a gaming scenario and compare it to some other Windows laptops. This game is admittedly slightly older, but its performance requirements are a good match for the kind of performance the M1 is designed to offer. Finally, it should be noted that this is an x86 game – it hasn’t been ported over to Arm – so the CPU side of the game is running through Rosetta.

At our 768p Value settings, the Mac Mini is delivering well over 60fps here. Once again it’s vastly ahead of the 2018 Intel-based Mac Mini, as well as every other integrated GPU in this stack. Even the 15-inch MBP and its Radeon Pro 560 are still trailing the Mac Mini by over 25%, and it takes a Ryzen laptop with a Radeon 560X to finally pull even with the Mac Mini.

Meanwhile cranking things up to 1080p with Enthusiast settings finds that the M1-based Mac Mini is still delivering just shy of 40fps, and it’s now over 20% ahead of the aforementioned Ryzen + 560X system. This does leave the Mini well behind the GTX 1650 here – with Rosetta and general API inefficiencies likely playing a part – but it goes to show what it takes to beat Apple’s integrated GPU. At 39.6fps, the Mac Mini is quite playable at 1080p with good image quality settings, and it would be fairly easy to knock down either the resolution or image quality a bit to get that back above 60fps. All on an integrated GPU.

Update 11-17, 7pm: Since the publication of this article, we've been able to get access to the necessary tools to measure the power consumption of Apple's SoC at the package and core level. So I've gone back and captured power data for GFXBench Aztec Ruins at High, and Rise of the Tomb Raider at Enthusiast settings.

| Power Consumption - Mac Mini 2020 (M1) | Rise of the Tomb Raider (Enthusiast) | GFXBench Aztec (High) |
|---|---|---|
| Package Power | 16.5 Watts | 11.5 Watts |
| GPU Power | 7 Watts | 10 Watts |
| CPU Power | 7.5 Watts | 0.16 Watts |
| DRAM Power | 1.5 Watts | 0.75 Watts |

The two workloads are significantly different in what they're doing under the hood. Aztec is a synthetic test that's run offscreen in order to be as pure of a GPU test as possible. As a result it records the highest GPU power consumption – 10 Watts – but it also leaves the CPU cores virtually untouched (and for that matter other elements like the display controller). Meanwhile Rise of the Tomb Raider is a workload from an actual game, and we can see that it's giving the entire SoC a workout. GPU power consumption hovers around 7 Watts, and while CPU power consumption is much more variable, it too tops out just a bit higher.
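To make the contrast between the two workloads concrete, the measured figures can be broken down into each block's share of package power. This is a hedged sketch: the dictionary layout and function name are hypothetical, and treating the GPU, CPU, and DRAM rows as components of package power (with the remainder going to the rest of the SoC) is an assumption rather than something the measurement tools are confirmed to guarantee.

```python
# Measured values from the power table, in watts.
POWER_W = {
    "Rise of the Tomb Raider (Enthusiast)": {
        "package": 16.5, "gpu": 7.0, "cpu": 7.5, "dram": 1.5,
    },
    "GFXBench Aztec (High)": {
        "package": 11.5, "gpu": 10.0, "cpu": 0.16, "dram": 0.75,
    },
}


def breakdown(sample: dict) -> dict:
    """Return GPU/CPU shares of package power and the unattributed remainder."""
    accounted = sample["gpu"] + sample["cpu"] + sample["dram"]
    return {
        "gpu_share": sample["gpu"] / sample["package"],
        "cpu_share": sample["cpu"] / sample["package"],
        # Assumption: whatever is left belongs to other SoC blocks.
        "unattributed_w": sample["package"] - accounted,
    }


results = {name: breakdown(sample) for name, sample in POWER_W.items()}

for name, r in results.items():
    print(f"{name}: GPU {r['gpu_share']:.0%}, CPU {r['cpu_share']:.0%}, "
          f"other {r['unattributed_w']:.2f} W")
```

Under those assumptions the GPU accounts for the large majority of package power in Aztec but well under half in Rise of the Tomb Raider, where the CPU draws a comparable amount.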

But regardless of the benchmark used, the end result is the same: the M1 SoC is delivering all of this performance at ultrabook-levels of power consumption. Delivering low-end discrete GPU performance in 10 Watts (or less) is a significant part of why M1 is so potent: it means Apple is able to give their small devices far more GPU horsepower than they (or PC OEMs) otherwise could.

Ultimately, these benchmarks are very solid proof that the M1’s integrated GPU is going to live up to Apple’s reputation for high-performing GPUs. The first Apple-built GPU for a Mac is significantly faster than any integrated GPU we’ve been able to get our hands on, and will no doubt set a new high bar for GPU performance in a laptop. Based on Apple’s own die shots, it’s clear that they spent a sizable portion of the M1’s die on the GPU and associated hardware, and the payoff is a GPU that can rival even low-end discrete GPUs. Given that the M1 is merely the baseline of things to come – Apple will need even more powerful GPUs for high-end laptops and their remaining desktops – it’s going to be very interesting to see what Apple and its developer ecosystem can do when the baseline GPU performance for even the cheapest Mac is this high.

682 Comments

Spunjji - Thursday, November 19, 2020 - link

Their 8-core Tiger won't come in under 45W.

hanskey - Wednesday, November 18, 2020 - link

Yeah.

Apple is generally only relevant to users with more money than sense historically, and this is why their PC market share is a joke and their tablet and phone market share are ever shrinking - because they are not competitively priced.

Generally speaking, Apple products are for computer-device users who are not very tech savvy and who do not seek the best total-cost-of-ownership for their performance requirements, easily swayed by "the cool factor", and who also don't mind vendor lock in, the lack of reasonable pricing for minor upgrades, expensive repairs, and giving control over to Apple to decide what software you get to use. These are all problems for the vast majority of computer device users, which in addition to being very overpriced are why long term Apple just gets less relevant with each passing year.

A nice CPU for some use-cases will not solve for any of that, I'm afraid. Don't get me wrong, I'm a CPU architecture nerd and they've impressively reached close to single-threaded feature parity with some of the neatest bits I can think of, but it remains to be seen if these cores can scale as they have been, but none of that matters like it would if VIA or a Chinese x86 manufacturer did the same on an open platform, because of Apple's terrible business practices.

Upsider - Wednesday, November 18, 2020 - link

Had to log in to thank you for this. I needed the laugh today!

KoolAidMan1 - Wednesday, November 18, 2020 - link

People on day one are literally running 12k raw video files with no drops, beyond Apple's example of running 8k video with no stutter in DaVinci Resolve. Their bottom end chip is performing as well as or better than Intel-based systems that cost twice as much, but yeah, its for more people with money than sense.

Cope.

blackcrayon - Wednesday, November 18, 2020 - link

"Generally speaking, Apple products are for computer-device users who are not very tech savvy"

Must be why Google uses them. And that UNIX shell. Another non tech savvy user feature. The vast majority of desktop users use Windows PCs. And 99% of them design their own computers from scratch, then write software to run on top of it.

You should be ashamed of yourself.

Silver5urfer - Friday, November 20, 2020 - link

Googleers are the worst fools of Software development, they are chasing the same garbage Macbook system of Locked garbage and fake privacy features, the Android OS is rotting from inside out, they are shaking up fundamentals such as filesystem support to a sandboxed garbage Scoped Storage mess with SAF framework on top, their Chromebooks are utter garbage. The OS doesn't even have Native Apps it uses Android yet they don't have SD card access properly, the machine cannot boot into other OSes properly as well. The UI is a mess and it is made for the literal non tech savvy users just need a basic computer - Like Kids, that's why in US many schools got them and they were very cheap too.

Coming to MS, same the Windows 10 UX is a mess because the garbage OS looks like a mobile OS first and then they are killing the information density to accommodate the touch screen system they could simply made 2 UX options on first boot but they don't and on top they kill Control Panel and more desktop centric system with the sub par Metro UI/Fluent system which puts lot of emphasis on the ugly design on top of the least productive workflow with the OS in terms of a Desktop OS. Their Surface Books are same trash like that they use Intel but the problem is they gate every single BIOS control with zero user tweak option and they added S mode and all sorts of garbage to block exe but it failed so backtracked on top. They are going in the same walled garden utopian approach of WaaS, As a Service unstable mess of an OS with every 6 months of hell with a beta for a damn Desktop OS and using Home version userbase as guinea pigs.

Google gets the prize since their Pixel was an iPhone clone from day 1, they aped the iPhone 6 design with Pixel made by HTC, and then advertised 3.5mm jack but axed it with Pixel 2 and with Pixel 3 that Insane notch got a copy paste to the 3XL with the world's worst notch ever, and then with Pixel 4 they yanked the HW so bad that it doesn't get proper battery missing ton of features, their Software is subpar beta and their Apps like Pixel recorder where one records the Sound one cannot even see them on the storage thanks to Scoped disaster storage they have to hit share that level of copying is going on at Google fools and then the best part, their marketshare since 5 generations - less than 3%, which is less than Huawei which was blocked by CFIUS, that share of Huawei was through Honor.

Ofc you are not even capable of understanding all of this but again say something like that you said to the OP. Keep it up inb4 others come and try, google Scoped Storage Commonsware and then if you understand then we can talk.

Spunjji - Monday, November 23, 2020 - link

Would love to see Silver5urfer's posts run through analysis software and compared to Quantumz0d. Even if they're not the same person, the overlap is fascinating.

Spunjji - Thursday, November 19, 2020 - link

One of my most frugal developer friends codes on an ancient MacBook Air. But sure. 😂

Sandbo - Sunday, November 22, 2020 - link

And the history has been history with M1, actually. You can try to show me a laptop with the performance of MacAir base model while maintaining the same battery life.

RAM is one limit but for lots of people who only use the laptop for web browsing and maybe zoom, like students, this is the no brainer laptop to me.

Xanadu1977 - Wednesday, November 18, 2020 - link

It would be interesting to see if (ever) an Arm based CPU could be paired with a discrete high end GPU from AMD or Nvidia like a 6800xt, 2060 super, 3070 or better.