The NVIDIA GeForce GTX 1080 Preview: A Look at What's to Come

by Ryan Smith on May 17, 2016 9:00 AM EST

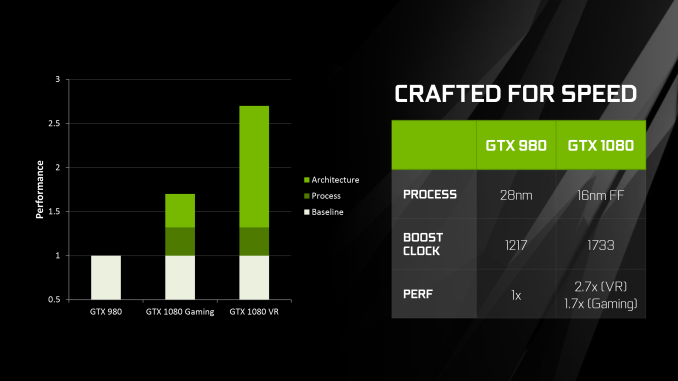

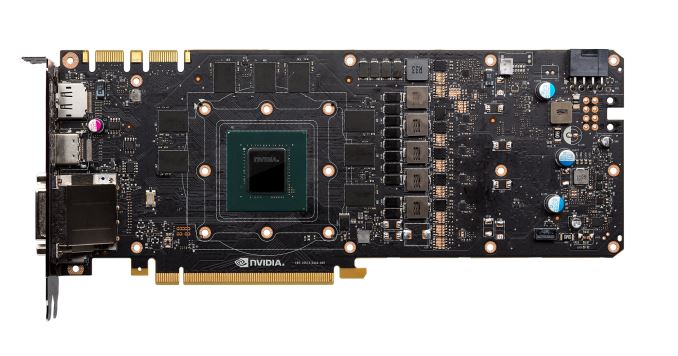

Earlier this month NVIDIA announced their latest generation flagship GeForce card, the GeForce GTX 1080. Based on their new Pascal architecture and built on TSMC’s 16nm FinFET process, the GTX 1080 is being launched as the first 16nm/14nm-based video card, and in time-honored fashion NVIDIA is starting at the high-end. The end result is that the GTX 1080 will be setting the new high mark for single-GPU performance.

Unlike past launches, NVIDIA is stretching out the launch of the GTX 1080 a bit more. After previously announcing it back on May 6th, the company is lifting their performance and architecture embargo today. Gamers however won’t be able to get their hands on the card until the 27th – next Friday – with pre-order sales starting this Friday. It is virtually guaranteed that the first batch of cards will sell out, but potential buyers will have a few days to mull over the data and decide if they want to throw down $699 for one of the first Founders Edition cards.

As for the AnandTech review, as I’ve only had a few days to work on the article, I’m going to hold it back rather than rush it out as a less thorough article. In the meantime however, as I know everyone is eager to see our take on performance, I wanted to take a quick look at the card and the numbers as a preview of what’s to come. Furthermore the entire performance dataset has been made available in the new GPU 2016 section of AnandTech Bench, for anyone who wants to see results at additional resolutions and settings.

Architecture

NVIDIA GPU Specification Comparison

| | GTX 1080 | GTX 980 Ti | GTX 980 | GTX 780 |
|---|---|---|---|---|
| CUDA Cores | 2560 | 2816 | 2048 | 2304 |
| Texture Units | 160 | 176 | 128 | 192 |
| ROPs | 64 | 96 | 64 | 48 |
| Core Clock | 1607MHz | 1000MHz | 1126MHz | 863MHz |
| Boost Clock | 1733MHz | 1075MHz | 1216MHz | 900MHz |
| TFLOPs (FMA) | 9 TFLOPs | 6 TFLOPs | 5 TFLOPs | 4.1 TFLOPs |
| Memory Clock | 10Gbps GDDR5X | 7Gbps GDDR5 | 7Gbps GDDR5 | 6Gbps GDDR5 |
| Memory Bus Width | 256-bit | 384-bit | 256-bit | 384-bit |
| VRAM | 8GB | 6GB | 4GB | 3GB |
| FP64 | 1/32 FP32 | 1/32 FP32 | 1/32 FP32 | 1/24 FP32 |
| TDP | 180W | 250W | 165W | 250W |
| GPU | GP104 | GM200 | GM204 | GK110 |
| Transistor Count | 7.2B | 8B | 5.2B | 7.1B |
| Manufacturing Process | TSMC 16nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 05/27/2016 | 06/01/2015 | 09/18/2014 | 05/23/2013 |
| Launch Price | MSRP: $599, Founders: $699 | $649 | $549 | $649 |

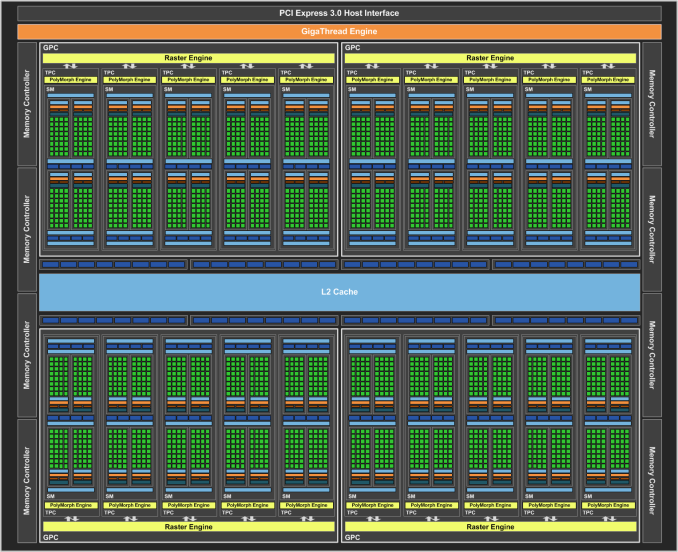

While I’ll get into architecture in much greater detail in the full article, at a high level the Pascal architecture (as implemented in GP104) is a mix of old and new; it’s not a revolution, but it’s an important refinement. Maxwell as an architecture was very successful for NVIDIA both at the consumer level and the professional level, and for the consumer iterations of Pascal, NVIDIA has not made any radical changes. The basic throughput of the architecture has not changed – the ALUs, texture units, ROPs, and caches all perform similarly to how they did in GM2xx.

Consequently the performance aspects of consumer Pascal – we’ll ignore GP100 for the moment – are pretty easy to understand. NVIDIA’s focus on this generation has been on pouring on the clockspeed to push total compute throughput to 9 TFLOPs, and updating their memory subsystem to feed the beast that is GP104.

On the clockspeed front, a great deal of the gains come from the move to 16nm FinFET. The smaller process allows NVIDIA to design a 7.2B transistor chip at just 314mm², while the use of FinFET transistors, though ultimately necessary for a process this small to avoid debilitating leakage, also significantly benefits power consumption and the clockspeeds NVIDIA can reach at practical levels of power consumption. To that end NVIDIA has run with the idea of boosting clockspeeds, and relative to Maxwell they have done additional work at the chip design level to allow for higher clockspeeds along the critical paths. All of this is coupled with energy efficiency optimizations at both the process and architectural level, in order to allow NVIDIA to hit these clockspeeds without blowing GTX 1080’s power budget.

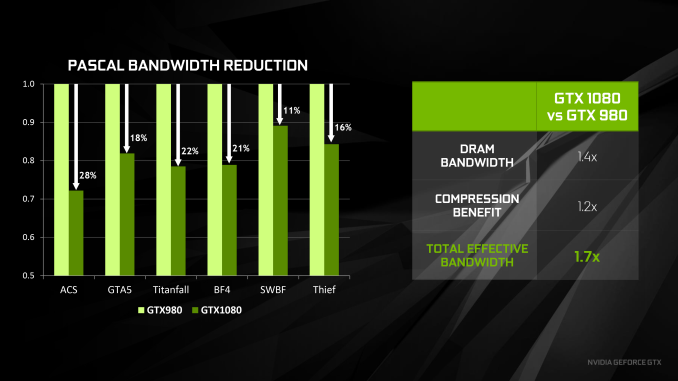

Meanwhile to feed GTX 1080, NVIDIA has made a pair of important changes to improve their effective memory bandwidth. The first of these is the inclusion of faster GDDR5X memory, which as implemented on GTX 1080 is capable of reaching 10Gb/sec/pin, a significant 43% jump in theoretical bandwidth over the 7Gb/sec/pin speeds offered by traditional GDDR5 on last-generation Maxwell products. Coupled with this is the latest iteration of NVIDIA’s delta color compression technology – now on its fourth generation – which sees NVIDIA once again expanding their pattern library to better compress frame buffers and render targets. NVIDIA’s figures put the effective memory bandwidth gain at 20%, or a roughly 17% reduction in memory bandwidth used thanks to the newer compression methods.
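To make the memory figures above concrete, here is a minimal sketch of the arithmetic, using the per-pin data rates and the 256-bit bus width from the specification table. The function and variable names are illustrative only, and the conversion of the 20% effective gain into a traffic reduction assumes the compressed data simply occupies 1/1.2 of the original bandwidth.

```python
def peak_bandwidth_gbs(rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Peak theoretical bandwidth in GB/s: per-pin rate times bus width, 8 bits per byte."""
    return rate_gbps_per_pin * bus_width_bits / 8


# Per-pin rates and bus widths taken from the specification table.
gtx1080_bw = peak_bandwidth_gbs(10, 256)  # GDDR5X on a 256-bit bus
gtx980_bw = peak_bandwidth_gbs(7, 256)    # GDDR5 on a 256-bit bus

# Raw gain from the faster memory alone.
raw_gain = gtx1080_bw / gtx980_bw - 1

# Assumption: a 20% effective bandwidth gain from compression means the same
# work needs 1 / 1.2 of the original memory traffic.
compression_gain = 0.20
traffic_reduction = 1 - 1 / (1 + compression_gain)

print(f"GTX 1080: {gtx1080_bw:.0f} GB/s, GTX 980: {gtx980_bw:.0f} GB/s")
print(f"Raw bandwidth gain: {raw_gain:.0%}")
print(f"Traffic reduction from compression: {traffic_reduction:.0%}")
```

Under these assumptions the raw gain works out to about 43% and the traffic reduction to about 17%, matching the figures quoted in the text.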

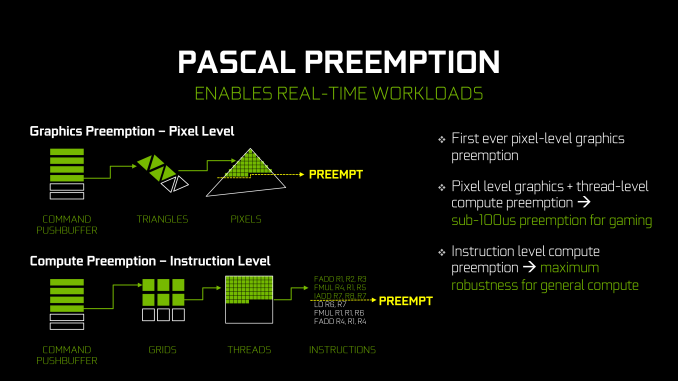

As for features included, we’ll touch upon that in a lot more detail in the full review. But while Pascal is not a massive overhaul of NVIDIA’s architecture, it’s not without its own feature additions. Pascal gains the ability to pre-empt graphics operations at the pixel (thread) level and compute operations at the instruction level, allowing for much faster context switching. And on the graphics side of matters, the architecture introduces a new geometry projection ability – Simultaneous Multi-Projection – and as a more minor update, gets bumped up to Conservative Rasterization Tier 2.

Looking at the raw specifications then, GTX 1080 does not disappoint. Though we’re looking at fewer CUDA cores than the GM200 based GTX 980 Ti or Titan, NVIDIA’s significant focus on clockspeed means that GP104’s 2560 CUDA cores are far more performant than a simple core count would suggest. The base clockspeed of 1607MHz is some 42% higher than GTX 980 (and 60% higher than GTX 980 Ti), and the 1733MHz boost clockspeed is a similar gain. On paper, GTX 1080 is set to offer 78% better performance than GTX 980, and 47% better performance than GTX 980 Ti. The real world gains are, of course, not quite this great, but they’re also relatively close to these numbers at times.
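The on-paper comparison can be reproduced with a short sketch, assuming the usual convention that each CUDA core retires one fused multiply-add (counted as 2 FLOPs) per clock and that the boost clock is the one used for the headline figure. Core counts and clocks come from the specification table; the names below are illustrative.

```python
# (CUDA cores, base clock MHz, boost clock MHz) from the specification table.
SPECS = {
    "GTX 1080": (2560, 1607, 1733),
    "GTX 980 Ti": (2816, 1000, 1075),
    "GTX 980": (2048, 1126, 1216),
}


def fp32_tflops(cores: int, clock_mhz: float) -> float:
    """Theoretical FP32 throughput, assuming 2 FLOPs (one FMA) per core per clock."""
    return cores * 2 * clock_mhz / 1e6


# Boost-clock throughput for each card.
boost_tflops = {
    name: fp32_tflops(cores, boost) for name, (cores, _base, boost) in SPECS.items()
}

# Relative advantage of GTX 1080 over the two Maxwell cards.
gain_vs_980 = boost_tflops["GTX 1080"] / boost_tflops["GTX 980"] - 1
gain_vs_980ti = boost_tflops["GTX 1080"] / boost_tflops["GTX 980 Ti"] - 1

for name, tflops in boost_tflops.items():
    print(f"{name}: {tflops:.2f} TFLOPs")
print(f"GTX 1080 vs GTX 980: +{gain_vs_980:.0%}")
print(f"GTX 1080 vs GTX 980 Ti: +{gain_vs_980ti:.0%}")
```

With these inputs the ratios come out at roughly 78% and 47%, which suggests the on-paper figures in the text are based on boost-clock FP32 throughput.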

262 Comments


doggface - Tuesday, May 17, 2016

The problem with low end amd cards atm is they lack features. Give us a $150-200 card with 4k 10bit h/w 265 decode, hdmi2, dp 1.4, etc and moderate gaming performance and it will sell. Give us great performance/cost and shitty features. Watch it sit on the shelf.

Michael Bay - Wednesday, May 18, 2016

Everything destroys GT730, extrapolating anything out of such comparisons is a wishful thinking at best.

BrokenCrayons - Wednesday, May 18, 2016

The 730 was a cheap upgrade I did to get a hotter running and far older GeForce 8800 GTS out of my system last year to take some load off the power supply (only 375 watts) so I could upgrade the CPU from a tired Xeon 3065 to a Q6600 without pushing too hard on the PSU. The only feature I really did bother with making sure I got was GDDR5 so the chip wasn't hamstrung by 64-bit DDR3's bandwidth issues. The A10's iGPU would indeed make it look underpowered, but I'm not in the market for integrated graphics for my desktop. However, it's long overdue for a rebuild for which I'm gathering parts now. I would have considered an A10, but instead I just picked up an Athlon x4 and will carry the 730 forward onto the new motherboard for a little while until 16/14nm makes its way down the product stack into lower end cards. Since I plan to eventually purchase whatever new generation hardware is out on the smaller process node anyway, a CPU with an iGPU that ultimately ends up being unused doesn't make a lot of sense. In the short term future 730 should be fine for anything I do anyway since I have no reason to push higher resolutions or use any sort of spatial anti-aliasing. All of that doesn't really matter once the game's video and audio are rolled up in an h.264 stream and pushed across my network from my gaming box to my netbook where I ultimately end up playing any games on a low resolution screen anyway. I think something around a GTX950's performance would be perfectly fine for anything I need to do so I'm content to wait until I can get that performance for around $100 or less. Spending my fun money on a computer is a very low priority and I can always wait until later to get newer/faster hardware if a game I'm interested in playing doesn't run on my current PC. Such is the case with Fallout 4, but I won't bother with it until all of its DRM is out, there are patches that address most of its issues, and it's got a GOTY edition on discount through Steam for $20. By then, whatever I'm running will be more than fast enough to offer an enjoyable gaming experience without me struggling and grubbing around to find high end gear for it or divert money from seeing films, traveling, or dining out. I also don't have to bother overclocking, buying aftermarket cooling solutions, managing cables to optimize airflow, or any of that other garbage I used to deal with years ago...I don't know how many hours I spent playing IDE cable origami so those big ribbons wouldn't impede a case fan's air current over a heatsink so I could eke out one or two meaninglessly fewer degrees C on an unimportant component. Now, screw it, I put crap together once and forget about it for a few years, enjoying the fun it provides on the way because I finally figured out that the parts are just a means to obtain a few hours a week of recreation and not the ends themselves.

paulemannsen - Thursday, May 19, 2016

Man you really try thinking too hard just for Angry Birds.

BrokenCrayons - Thursday, May 19, 2016

I understand that the idea of someone playing casual games while also keeping tabs on computer hardware is somehow a really threatening concept, but don't let it cloud your thoughts too much that you assume that Angry Birds and Fallout 4 are mutually exclusive. You can be smarter and better than that if you try.

lashek37 - Friday, May 20, 2016

As soon as AMD comes out with their card, Nvidia will unleash the GTX 1080 Ti, lol. 😂

Yojimbo - Tuesday, May 17, 2016

They won't have the middle of the market completely to themselves. They'll have the only new cards in the segment for 2 or 3 months. But during that time those cards will be competing with the 980 and 970. AMD, on the other hand, probably can't make much money selling Fury cards priced to compete with the 1070, and they'll have virtually nothing competing with the 1080, and that situation will last for 6 or more months. That's the reason AMD will be hurt, not because of "ignorant customers", as you claim.

Yojimbo - Tuesday, May 17, 2016

As an aside, if consumers were ignorant to choose new Maxwell cards over older AMD cards competing against them, why will they not similarly be ignorant to choose new Polaris cards over the older Maxwell cards competing with them?

etre - Tuesday, May 24, 2016

I fail to see how choosing old tech over new tech for a price difference of a few euros is the smart thing to do. Everyone wants new tech, it's a psychological and practical factor.

As an example, where I'm living, in winter we can have -10 or -20 C but in summer is not uncommon to exceed 40C. For me power consumption is a factor. Less heat, less noise. The GTX line is well worth the money.

cheshirster - Tuesday, May 17, 2016

Last time they went middle first was a big success (4870 vs GTX265). Don't see a problem for them if their P10 can touch 1070 perf for <400$