AMD Discusses 2016 Radeon Visual Technologies Roadmap

by Ryan Smith on December 8, 2015 9:00 AM EST

Posted in: GPUs, Displays, AMD, Radeon, DisplayPort, HDMI, Radeon Technologies Group

This is something that caught me off-guard when I first realized it, but AMD historically hasn’t liked to talk about their GPU plans much in advance. On the CPU side we’ve heard about Carrizo and Zen years in advance. Meanwhile AMD’s competitor in the world of GPUs, NVIDIA, releases some basic architectural information over a year in advance as well. However, with AMD’s GPU technology, we typically don’t hear about it until the first products implementing the new technology are launched.

With AMD’s GPU assets having been reorganized under the Radeon Technologies Group (RTG) and led by Raja Koduri, RTG has recognized this as well. As a result, the new RTG is looking to chart a bit of a different course, to be a bit more transparent and a bit more forthcoming than they have in the past. The end result isn’t quite like what AMD has done with their CPU division or their competition has done with GPU architectures – RTG will talk about both more or less depending on the subject – but among several major shifts in appearance, development, and branding we’ve seen since the formation of the RTG, this is another way in which RTG is trying to set itself apart from AMD’s earlier GPU groups.

As part of AMD’s RTG technology summit, I had the chance to sit down and hear about RTG’s plans for their visual technologies (displays) group for 2016. Though RTG isn’t announcing any new architecture or chips at this time, the company has put together a roadmap for what they want to do with both hardware and software for the rest of 2015 and into 2016. Much of what follows isn’t likely to surprise regular observers of the GPU world, but it nonetheless sets some clear expectations for what is in RTG’s future over much of the next year.

DisplayPort 1.3 & HDMI 2.0a: Support Coming In 2016

First and foremost then, let’s start with RTG’s hardware plans. As I mentioned before RTG isn’t announcing any new architectures, but they are announcing some of the features that the 2016 Radeon GPUs will support. Among these changes is a new display controller block, upgrading the display I/O functionality we’ve seen as the cornerstone of AMD’s GPU designs since GCN 1.1 was first launched in 2013.

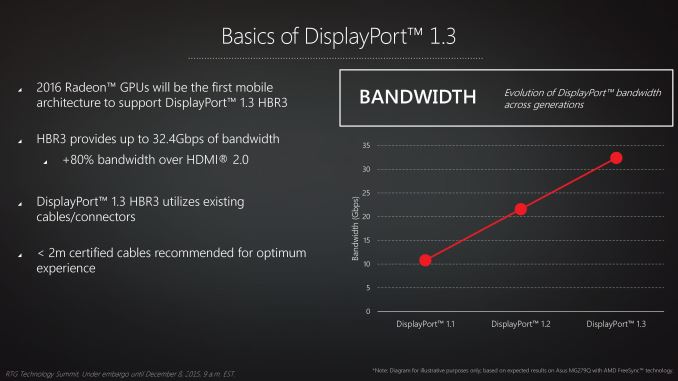

The first addition here is that RTG’s 2016 GPUs will be including support for DisplayPort 1.3. We’ve covered the announcement of DisplayPort 1.3 separately in the past, where in 2014 the VESA announced the release of the 1.3 standard. DisplayPort 1.3 will introduce a faster signaling mode for DisplayPort – High Bit Rate 3 (HBR3) – which in turn will allow DisplayPort 1.3 to offer 50% more bandwidth than the current DisplayPort 1.2 and HBR2, boosting DisplayPort’s bandwidth to 32.4 Gbps before overhead.
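The bandwidth figures above follow from simple per-lane arithmetic. The sketch below is illustrative rather than anything RTG presented: it assumes the standard four-lane DisplayPort main link, the published per-lane rates for each link mode, and 8b/10b line coding as the "overhead" in question. The function and constant names are made up for this example.

```python
# Per-lane signaling rates in Gbps for each DisplayPort link mode.
LANE_RATE_GBPS = {"HBR1": 2.7, "HBR2": 5.4, "HBR3": 8.1}

LANES = 4                      # assumption: a full four-lane main link
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b coding: 8 payload bits per 10 line bits


def raw_bandwidth_gbps(mode: str) -> float:
    """Total link bandwidth before encoding overhead."""
    return LANE_RATE_GBPS[mode] * LANES


def payload_bandwidth_gbps(mode: str) -> float:
    """Bandwidth left for video data after 8b/10b overhead."""
    return raw_bandwidth_gbps(mode) * ENCODING_EFFICIENCY


if __name__ == "__main__":
    for mode in LANE_RATE_GBPS:
        print(
            f"{mode}: {raw_bandwidth_gbps(mode):.2f} Gbps raw, "
            f"{payload_bandwidth_gbps(mode):.2f} Gbps after overhead"
        )
    gain = raw_bandwidth_gbps("HBR3") / raw_bandwidth_gbps("HBR2") - 1
    print(f"HBR3 over HBR2: {gain:.0%} more bandwidth")
```

Under these assumptions HBR3 works out to 32.4 Gbps raw, of which roughly 25.9 Gbps is usable for video, against 21.6 Gbps raw for HBR2.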

DisplayPort Supported Resolutions

| Standard | Max Resolution (RGB/4:4:4, 60Hz) | Max Resolution (4:2:0, 60Hz) |
|---|---|---|
| DisplayPort 1.1 (HBR1) | 2560x1600 | N/A |
| DisplayPort 1.2 (HBR2) | 3840x2160 | N/A |
| DisplayPort 1.3 (HBR3) | 5120x2880 | 7680x4320 |

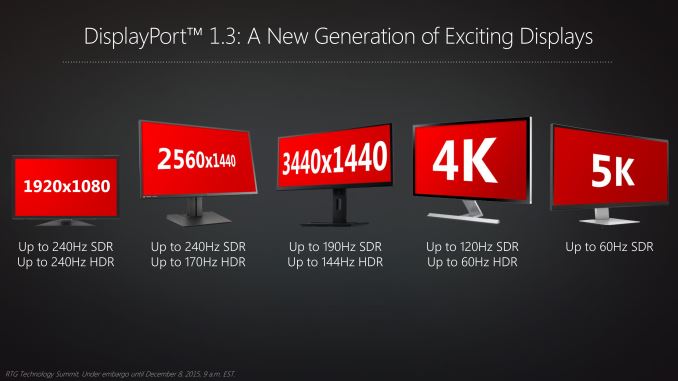

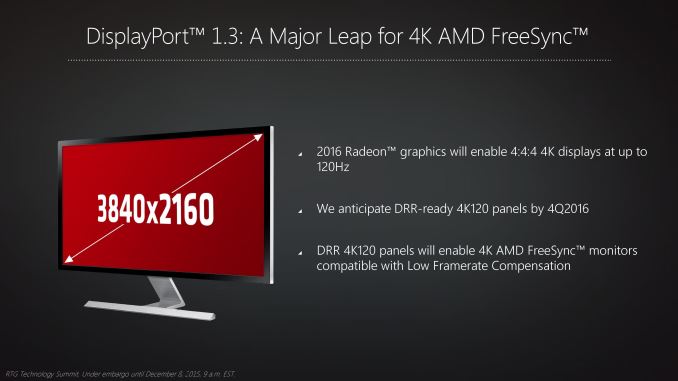

The purpose of DisplayPort 1.3 is to offer the additional bandwidth necessary to support higher resolution and higher refresh rate monitors than the 4K@60Hz limit of DP1.2. This includes supporting higher refresh rate 4K monitors (120Hz), 5K@60Hz monitors, and 4K@60Hz with color depths higher than 8 bits per channel (necessary for a good HDR implementation). DisplayPort’s scalability via tiling has meant that some of these monitor configurations have been possible even via DP1.2 by utilizing MST over multiple cables. With DP1.3, however, it will now be possible to support those configurations in a simpler SST configuration over a single cable.
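As a rough sanity check on which modes fit on which link, the sketch below compares the uncompressed pixel data rate of a mode against each link's post-8b/10b payload bandwidth. It is a simplification, not a timing calculator: it assumes 8 bits per channel, a four-lane link, and ignores blanking intervals entirely, so real modes need somewhat more bandwidth than shown. All names are invented for illustration.

```python
# Payload bandwidth per link mode in Gbps: lane rate * 4 lanes * 8b/10b efficiency.
PAYLOAD_GBPS = {
    "HBR1": 2.7 * 4 * 0.8,   # DisplayPort 1.1
    "HBR2": 5.4 * 4 * 0.8,   # DisplayPort 1.2
    "HBR3": 8.1 * 4 * 0.8,   # DisplayPort 1.3
}

# Average bits per pixel at 8 bits per channel.
# 4:2:0 keeps full-resolution luma but quarter-resolution chroma.
BITS_PER_PIXEL = {"4:4:4": 24, "4:2:0": 12}


def pixel_data_rate_gbps(width: int, height: int, refresh_hz: int,
                         chroma: str = "4:4:4") -> float:
    """Active-pixel data rate in Gbps (assumption: blanking is ignored)."""
    return width * height * refresh_hz * BITS_PER_PIXEL[chroma] / 1e9


def fits(width: int, height: int, refresh_hz: int, link: str,
         chroma: str = "4:4:4") -> bool:
    """True if the mode's active-pixel data rate fits the link's payload rate."""
    return pixel_data_rate_gbps(width, height, refresh_hz, chroma) <= PAYLOAD_GBPS[link]


if __name__ == "__main__":
    modes = [
        ("4K@60", 3840, 2160, 60, "4:4:4"),
        ("4K@120", 3840, 2160, 120, "4:4:4"),
        ("5K@60", 5120, 2880, 60, "4:4:4"),
        ("8K@60 4:2:0", 7680, 4320, 60, "4:2:0"),
    ]
    for name, w, h, hz, chroma in modes:
        rate = pixel_data_rate_gbps(w, h, hz, chroma)
        ok = [link for link in PAYLOAD_GBPS if fits(w, h, hz, link, chroma)]
        print(f"{name}: {rate:.2f} Gbps, fits on {ok or 'none'}")
```

Even with blanking left out, 5K@60 and 4K@120 exceed what HBR2 can carry but fit within HBR3, and 8K@60 only fits once chroma is subsampled to 4:2:0.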

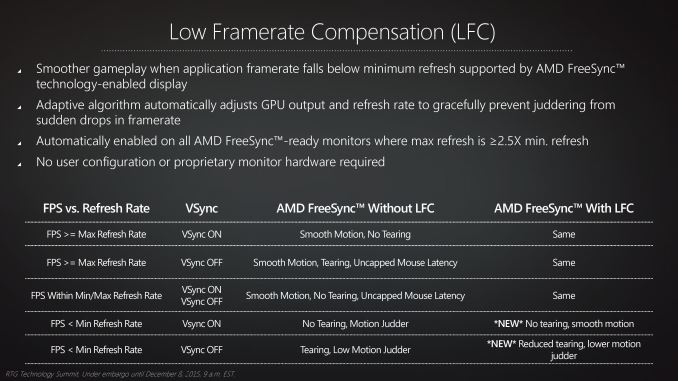

For RTG this is important on several levels. The first is very much pride – the company has always been the first GPU vendor to implement new DisplayPort standards. But at the same time DP1.3 is the cornerstone of multiple other efforts for the company. The additional bandwidth is necessary for the company’s HDR plans, and it’s also necessary to support the wider range of refresh rates at 4K needed for RTG’s FreeSync Low Framerate Compensation (LFC) tech, which requires a monitor’s maximum refresh rate to be at least 2.5x its minimum in order to function. That in turn has meant that while RTG has been able to apply LFC to 1080p and 1440p monitors today, they won’t be able to do so with 4K monitors until DP1.3 gives them the bandwidth necessary to support 75Hz+ operation.
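The LFC requirement can be expressed as a one-line check. This is a sketch of the ratio rule only, reading the 2.5x figure as "maximum refresh rate at least 2.5 times the minimum"; it is not AMD's driver logic, and the refresh ranges used below (including the 30Hz floor) are hypothetical examples.

```python
LFC_MIN_RATIO = 2.5  # ratio quoted in the article; interpreted as max_hz / min_hz


def supports_lfc(min_hz: float, max_hz: float) -> bool:
    """True if a variable refresh range is wide enough for LFC under the ratio rule."""
    if min_hz <= 0 or max_hz < min_hz:
        raise ValueError("expected 0 < min_hz <= max_hz")
    return max_hz / min_hz >= LFC_MIN_RATIO


if __name__ == "__main__":
    # Hypothetical monitor refresh ranges, for illustration only.
    for label, lo, hi in [
        ("4K panel limited to 60Hz", 30, 60),
        ("4K panel at 75Hz", 30, 75),
        ("1440p panel at 144Hz", 40, 144),
    ]:
        print(f"{label} ({lo}-{hi}Hz): LFC possible = {supports_lfc(lo, hi)}")
```

With an assumed 30Hz floor, a panel capped at 60Hz falls short of the ratio, while one that reaches 75Hz just meets it, which matches the 75Hz+ figure cited above.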

Meanwhile DisplayPort 1.3 isn’t the only I/O standard planned for RTG’s 2016 GPUs. Also scheduled for 2016 is support for the HDMI 2.0a standard, the latest generation HDMI standard. HDMI 2.0 was launched in 2013 as an update to the HDMI standard, significantly increasing HDMI’s bandwidth to support 4Kp60 TVs, bringing it roughly on par with DisplayPort 1.2 in terms of total bandwidth. Along with the increase in bandwidth, HDMI 2.0/2.0a also introduced support for other new features in the HDMI specification such as the next-generation BT.2020 color space, 4:2:0 chroma sampling, and HDR video.

That HDMI has only recently caught up to DisplayPort 1.2 in bandwidth at a time when DisplayPort 1.3 is right around the corner is one of those consistent oddities in how the two standards are developed, but nonetheless this is important for RTG. HDMI is not only the outright standard for TVs, but it’s the de facto standard for PC monitors as well; while you can find DisplayPort in many monitors, you would be hard pressed not to find HDMI. So as 4K monitors become increasingly cheap – and likely start dropping DisplayPort in the process – supporting HDMI 2.0 will be important for RTG for monitors just as much as it is for TVs.

Unfortunately for RTG, they’re playing a bit of catch-up here, as the HDMI 2.0 standard is already more than 2 years old and has been supported by NVIDIA since the Maxwell 2 architecture in 2014. Though they didn’t go into detail, I was told that AMD/RTG’s plans for HDMI 2.0 support were impacted by the cancelation of the company’s 20nm planar GPUs, and as a result HDMI 2.0 support was pushed back to the company’s 2016 GPUs. The one bit of good news here for RTG is that HDMI 2.0 is still a bit of a mess – not all HDMI 2.0 TVs actually support 4Kp60 with full chroma sampling (4:4:4) – but that is quickly changing.

99 Comments

Michael Bay - Thursday, December 10, 2015

He's drunk or crazy. Typical state for AMD user.

RussianSensation - Wednesday, December 23, 2015

It's actually correct. GCN-like implies Pascal will be more oriented towards GPGPU/compute functions -- i.e., graphics cards are moving towards general purpose processing devices that are good at performing various parallel tasks well. GCN is just a marketing name but the main thing about it is focus on compute + graphics functionality. NV is re-focusing its efforts heavily on compute with Pascal. For example, they are aiming to increase neural network performance by 10X.

extide - Tuesday, December 8, 2015

While nVidia picks a new name for each generation it's not like they are tossing the old design in the trash and building an entirely new GPU ... I would imagine we will see "GCN 2.0" next year, and I would be surprised if better power efficiency was not one of the main features.

Refuge - Tuesday, December 8, 2015

That has been their trend for the last two Architecture updates they've made. Granted small adjustments, but all in the name of power and efficiency.

Jon Irenicus - Tuesday, December 8, 2015

Apparently in maxwell they got that power efficiency by stripping out a lot of the hardware schedulers amd still has in gcn, so the efficiency boost and power decrease was not "free." It will mean that maxwell cards are less capable of context switching for VR, and can't handle mixed graphics/compute workloads as well as gcn cards. That was fine with dx11 and it worked well for them, but I don't think those cards will age well at all. But that may have been part of the point.

haukionkannel - Tuesday, December 8, 2015

They only need to upgrade some part of GCN and they are just fine!

The Nvidia did very good job in compression architecture of their GPU and that lead much better energy usage because they can use smaller (and cheaper) memory pathway. (There are other factors too, but that one is quite important)

AMD have higher bandwidth version of their 380, but the card does not benefit from it, so they are not releasing it, because 380 also have relative good compression build in. Make it better, increase ROPs and GCN is competitive again.

WaltC - Tuesday, December 8, 2015

Odd, considering that nVidia is very much in catch-up mode presently concerning HBM deployment and even D3d12 hardware compliance...;) But, I don't do mobile at all, so I can't see it from that *cough* perspective...

Michael Bay - Thursday, December 10, 2015

HBM does not offer any real advantage to the enduser presently, so there is literally no catch-up on nV part. Same with DX12.

Macpoedel - Tuesday, December 8, 2015

Nvidia changes the name of their architecture for every little change they make, that doesn't mean AMD has to do so as well. GCN 1.0 and GCN 1.2 are almost as much apart as Maxwell 2 and Kepler are. GPU architectures haven't changed all that much since both companies started using the 28nm node.

Frenetic Pony - Tuesday, December 8, 2015

Supposedly next year will bring GCN 2.0. Also it's already confirmed that there's basically no architectural improvements from Nvidia next year. Pascal is almost identical to Maxwell in most ways except for a handful of compute features.