The AMD Ryzen 7 5800X3D Review: 96 MB of L3 3D V-Cache Designed For Gamers

by Gavin Bonshor on June 30, 2022 8:00 AM EST

Posted in: CPUs, AMD, DDR4, AM4, Ryzen, V-Cache, Ryzen 7 5800X3D, Zen3, 3D V-Cache

The level of competition in the desktop CPU market has rarely been as intense as it has been over the last couple of years. When AMD brought its Ryzen processors to market, it forced Intel to reply, and both have consistently battled in multiple areas, including core count, IPC performance, frequency, and ultimate performance. The constant race to improve products, stay ahead of the competition, and meet customers' changing needs has also sent the two companies off the beaten path at times, developing even wilder technologies in search of that competitive edge.

In the case of AMD, one such development effort has culminated with 3D V-Cache packaging technology, which stacks a layer of L3 cache on top of the existing CCD's L3 cache. Additional cache is beneficial to performance, but large quantities of SRAM are, well, large, so AMD has been working on how to place more L3 cache on a CPU chiplet without blowing out the die size altogether. The end result of that has been the stacked V-Cache technology, which allows the additional cache to be separately fabbed and then carefully placed on top of a chip to be used as part of a processor.

For the consumer market, AMD's first V-Cache equipped product is the Ryzen 7 5800X3D. Pitched as the fastest gaming processor on the market today, AMD's unique chip offers eight cores/sixteen threads of processing power, and a whopping 96 MB of L3 cache onboard. Essentially building on top of the already established Ryzen 7 5800X processor, the aim from AMD is that the additional L3 cache on the 5800X3D will take gaming performance to the next level – all for around $100 more than the 5800X.

With AMD's new gaming chip in hand, we've put the Ryzen 7 5800X3D through our CPU suite and gaming tests to see if it is as good as AMD claims it is.

AMD Ryzen 7 5800X3D: Now With 3D V-Cache

Previously announced at CES 2022, the Ryzen 7 5800X3D is probably the most interesting of all of AMD's Ryzen-based chips to launch since Zen debuted in 2017. The reason is that the Ryzen 7 5800X3D uses AMD's own 3D V-Cache packaging technology, which essentially plants a 64 MB layer of L3 cache on top of the existing 32 MB of L3 cache that the Ryzen 7 5800X has.

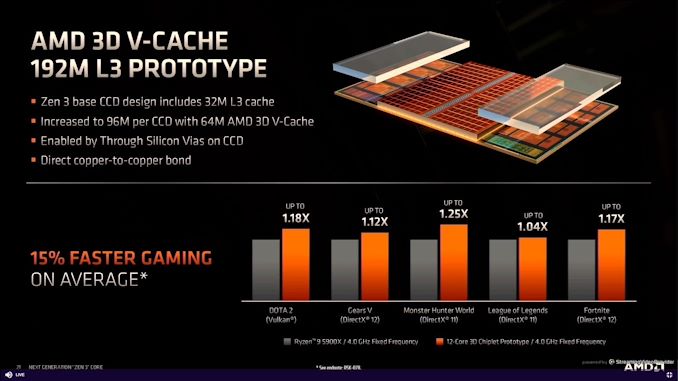

To outline the framework of the 3D V-Cache, AMD is using a direct copper-to-copper bonding process, with the additional layer of 64 MB L3 cache stacked on top of the existing 32 MB L3 cache on the die. AMD claims this increases gaming performance by 15% on average when comparing the Ryzen 9 5900X (12c/24t) to a 12-core 3D chiplet prototype chip. Whether AMD's claim is based solely on the 12-core design or if this level of performance increase is linear when using fewer cores is hard to determine.

It is clear that 3D V-Cache, with its innovative bonding technique that fuses additional L3 cache on top of existing L3 cache, is an interesting way to deliver solid performance gains, given how crucial L3 cache capacity can be for specific game titles. AMD also claims that the larger L3 cache improves performance in multi-threaded workloads such as video encoding.

The Vertical (V) Cache die is built on the same TSMC 7 nm manufacturing process as the CCD, and it goes through a thinning process that is part of TSMC's technologies designed to negate any thermal complications that would arise. Bridging the gap between the 32 MB of on-die L3 cache and the vertically stacked 64 MB of L3 cache is a base of structural silicon, with the two layers connected by direct copper-to-copper bonding and through-silicon vias (TSVs).

Looking at where it sits in the stack, the Ryzen 7 5800X3D is priced essentially the same as the Ryzen 9 5900X, which benefits from four additional Zen 3 cores and eight additional threads. The Ryzen 7 5800X3D also has a base frequency 400 MHz lower than the Ryzen 7 5800X, along with a 200 MHz lower turbo frequency. This is likely a consequence of power limits, as the additional L3 cache draws power of its own within the same 105 W TDP.

AMD Ryzen 5000 Series Processors for Desktop (>$200), Zen 3 Microarchitecture (Non-Pro, 65W+)

| AnandTech | Cores | Threads | Base Freq (MHz) | 1T Freq (MHz) | L3 Cache | iGPU | PCIe | TDP | SEP |
|---|---|---|---|---|---|---|---|---|---|
| Ryzen 9 5950X | 16 | 32 | 3400 | 4900 | 64 MB | - | 4.0 | 105 W | $590 |
| Ryzen 9 5900X | 12 | 24 | 3700 | 4800 | 64 MB | - | 4.0 | 105 W | $450 |
| Ryzen 9 5900 | 12 | 24 | 3000 | 4700 | 64 MB | - | 4.0 | 65 W | OEM |
| Ryzen 7 5800X3D | 8 | 16 | 3400 | 4500 | 96 MB | - | 4.0 | 105 W | $449 |
| Ryzen 7 5800X | 8 | 16 | 3800 | 4700 | 32 MB | - | 4.0 | 105 W | $350 |
| Ryzen 7 5800 | 8 | 16 | 3400 | 4600 | 32 MB | - | 4.0 | 65 W | OEM |
| Ryzen 7 5700X | 8 | 16 | 3400 | 4600 | 32 MB | - | 4.0 | 65 W | $299 |
| Ryzen 5 5600X | 6 | 12 | 3700 | 4600 | 32 MB | - | 4.0 | 65 W | $230 |

As the 3D V-Cache is primarily designed to improve performance in game titles, the new chip isn't too far from the Ryzen 7 5800X with regard to raw compute throughput. The Ryzen 7 5800X and Ryzen 9 5900X will hold a slight advantage in this area thanks to the higher core frequencies on both models. Still, as I've previously mentioned, the real bread and butter will be gaming performance, or at least performance in games that can benefit from the extra L3 cache.
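To put numbers on that positioning, the differences between the 5800X3D and its nearest siblings can be worked out directly from the table figures. The following is only an illustrative sketch: the `Chip` class and the `compare` and `l3_per_core` helpers are names made up for this example, and the values are copied from the table rather than measured.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    """One row of the spec table (hypothetical container for this example)."""
    name: str
    cores: int
    base_mhz: int
    boost_mhz: int
    l3_mb: int
    price_usd: int


# Figures copied from the Ryzen 5000 table; nothing here is measured.
CHIPS = {
    "5900X": Chip("Ryzen 9 5900X", 12, 3700, 4800, 64, 450),
    "5800X3D": Chip("Ryzen 7 5800X3D", 8, 3400, 4500, 96, 449),
    "5800X": Chip("Ryzen 7 5800X", 8, 3800, 4700, 32, 350),
}


def compare(a: Chip, b: Chip) -> dict:
    """Return a-minus-b differences in clocks, cache and list price."""
    return {
        "base_mhz": a.base_mhz - b.base_mhz,
        "boost_mhz": a.boost_mhz - b.boost_mhz,
        "l3_mb": a.l3_mb - b.l3_mb,
        "price_usd": a.price_usd - b.price_usd,
    }


def l3_per_core(chip: Chip) -> float:
    """L3 capacity divided evenly across cores, in MB per core."""
    return chip.l3_mb / chip.cores


if __name__ == "__main__":
    x3d = CHIPS["5800X3D"]
    for other in ("5800X", "5900X"):
        print(f"5800X3D vs {other}:", compare(x3d, CHIPS[other]))
    for chip in CHIPS.values():
        print(f"{chip.name}: {l3_per_core(chip):.1f} MB of L3 per core")
```

Against the 5800X, this shows the trade being made: lower clocks and a higher price in exchange for 64 MB more L3.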

AMD Ryzen 7 5800X3D: Overclocking Support for Memory, But not the Core

Although the Ryzen 7 5800X3D supports memory overclocking and allows users to overclock the Infinity Fabric interconnect to supplement this, AMD has disabled core overclocking, which also makes the chip incompatible with AMD's Precision Boost Overdrive feature. This has disappointed a lot of users, but it is a trade-off associated with the 3D V-Cache.

Specifically, the limitations in overclocking come down to voltage limitations (1.35 V VCore) imposed by the packaging technology. The dense V-Cache dies, it would seem, can't handle extra juice as well as the L3 cache already built into the Zen 3 chiplets.

As a result, in lieu of CPU overclocking, the biggest thing a user can do to improve performance with the Ryzen 7 5800X3D is to use faster DDR4 memory with lower latencies, such as a good DDR4-3600 kit. This speed is also the known sweet spot for AMD's Infinity Fabric interconnect, as set out by AMD.
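As a rough illustration of why a DDR4-3600 kit is attractive, the arithmetic behind memory speed and latency can be sketched in a few lines. Two assumptions are made here that the text above does not spell out: that the "sweet spot" refers to running the Infinity Fabric clock 1:1 with the memory clock, and that the CAS latencies used (CL22 for a DDR4-3200 kit, CL16 for a DDR4-3600 kit) are representative. Both kits are hypothetical examples, not the memory used in this review.

```python
def memclk_mhz(data_rate_mts: int) -> float:
    """DDR memory transfers twice per clock, so the clock is half the data rate."""
    return data_rate_mts / 2


def fclk_for_1_to_1(data_rate_mts: int) -> float:
    """Infinity Fabric clock needed to match the memory clock 1:1 (assumed goal)."""
    return memclk_mhz(data_rate_mts)


def cas_latency_ns(data_rate_mts: int, cas_cycles: int) -> float:
    """CAS latency in nanoseconds: cycles / clock in MHz * 1000, simplified."""
    return cas_cycles * 2000 / data_rate_mts


if __name__ == "__main__":
    # (label, data rate in MT/s, CAS latency in cycles): illustrative kits only.
    kits = [
        ("DDR4-3200 CL22", 3200, 22),
        ("DDR4-3600 CL16", 3600, 16),
    ]
    for label, rate, cl in kits:
        print(
            f"{label}: MEMCLK {memclk_mhz(rate):.0f} MHz, "
            f"1:1 FCLK {fclk_for_1_to_1(rate):.0f} MHz, "
            f"CAS {cas_latency_ns(rate, cl):.2f} ns"
        )
```

Under these assumptions, the faster kit both raises the fabric clock and shortens the absolute CAS latency, which is the combination the recommendation above is pointing at.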

Looking at the state of the desktop processor market as it is now, and by the end of the year, things look promising for users with plenty of choices available. The primary battle right now in gaming performance comes down to AMD's Ryzen 7 5800X3D ($450) and Intel's 12th Gen Core series options, with the Core i9-12900K leading the charge for team Intel.

Perhaps the most interesting debate comes when buying a new processor, as the current generation of offerings from both AMD and Intel delivers superb gaming performance on the whole. It's tough to select a mainstream desktop processor that doesn't work well with most graphics cards, and outside of pairing a flagship chip with a flagship video card, the choice will most likely come down to performance in compute, productivity, and content creation applications. We know that AMD is releasing its latest Zen 4 core later on this year, and we have come to expect advancements and progression in IPC performance.

The AMD Ryzen 7 5800X3D with its 3D V-Cache is new and exciting, and specifically for gaming performance, the battle for the title of 'fastest gaming processor' is ever-changing. Based on the existing AM4 platform, AMD has given users a leading-edge design in a familiar platform, but the biggest challenge will be in making good on AMD's claims, and testing those claims is what we aim to do in this review.

Finally, regardless of how the 5800X3D does today, AMD's stacked V-cache technology is not a one-and-done offering. AMD recently announced there will be a Zen 4 variation with 3D V-Cache at some point during the cycle, as well as announcing the same for Zen 5, which is expected in 2024.

For our testing, we are using the following:

| Ryzen Test System (DDR4) | |
|---|---|
| CPU | Ryzen 7 5800X3D ($450): 8 Cores, 16 Threads, 105 W TDP, 3.4 GHz Base, 4.5 GHz Turbo |
| Motherboard | ASUS ROG Crosshair VIII Extreme (X570) |
| Memory | ADATA 2x32 GB DDR4-3200 |
| Cooling | MSI Coreliquid 360mm AIO |
| Storage | Crucial MX300 1TB |
| Power Supply | Corsair HX850 |
| GPUs | NVIDIA RTX 2080 Ti, Driver 496.49 |
| Operating Systems | Windows 11 Up to Date |

For comparison, results for all other chips are taken from the tests listed in our benchmark database, Bench, which were run on Windows 10 or, for the more recent processors, Windows 11.

125 Comments


Gavin Bonshor - Thursday, June 30, 2022 - link

We test at JEDEC to compare apples to apples from previous reviews. The recommendation is based on my personal experience and what AMD recommends.

HarryVoyager - Thursday, June 30, 2022 - link

Having done an upgrade from a 5800X to a 5800X3D, one of the interesting things about the 5800X3D is that it's largely RAM insensitive. You can get pretty much the same performance out of DDR4-2366 as you can 3600+.

And it's not that it is under-performing. The things that it beats the 5800X at, it still beats it at, even when the 5800X is running very fast low latency RAM.

The upshot is, if you're on an AM4 platform with stock RAM, you actually get a lot of improvement from the 5800X3D in its favored applications.

Lucky Stripes 99 - Saturday, July 2, 2022 - link

This is why I hope to see this extra cache come to the APU series. My 4650G is very RAM speed sensitive on the GPU side. Problem is, if you start spending a bunch of cash on faster system memory to boost GPU speeds, it doesn't take long before a discrete video card becomes the better choice.

Oxford Guy - Saturday, July 2, 2022 - link

The better way to test is to use both the optimal RAM and the slow JEDEC RAM.

sonofgodfrey - Thursday, June 30, 2022 - link

Wow, awhile since I looked at these gaming benchmarks. These FPS times are way past the point of "minimum" necessary. I submit two conclusions:

1) At some point you just have to say the game is playable and just check that box.

2) The benchmarks need to reflect this result.

If I were doing these tests, I would probably just set a low limit for FPS and note how much (% wise) of the benchmark run was below that level. If it is 0%, then that CPU/GPU/whatever combination just gets a "pass", if not it gets a "fail" (and you could dig into the numbers to see how much it failed).

Based on these criteria, if I had to buy one of these processors for gaming, I would go with the least costly processor here, the i5-12600K. It does the job just fine, and I can spend the extra $210 on a better GPU/Memory/SSD.

(Note: I'm not buying one of these processors, I don't like Alder Lake for other reasons, and this is not an endorsement of Alder Lake)

lmcd - Thursday, June 30, 2022 - link

Part of the intrigue is that it can hit the minimums and 1% lows for smooth play with 120Hz/144Hz screens.

hfm - Friday, July 1, 2022 - link

I agree. I'm using a 5600X + 3080 + 32GB dual channel dual rank and my 3080 is still the bottleneck most of the time at the resolution I play all my games in, 1440p@144Hz.

mode_13h - Saturday, July 2, 2022 - link

> These FPS times are way past the point of "minimum" necessary.

You're missing the point. They test at low resolutions because those tend to be CPU-bound. This exaggerates the difference between different CPUs.

And the relevance of such testing is because future games will probably lean more heavily on the CPU than current games. So, even at higher resolutions, we should expect to see future game performance affected by one's choice of a CPU, today, to a greater extent than current games are.

So, in essence, what you're seeing is somewhat artificial, but that doesn't make it irrelevant.

> I would probably just set a low limit for FPS and

> note how much (% wise) of the benchmark run was below that level.

Good luck getting consensus on what represents a desirable framerate. I think the best bet is to measure mean + 99th percentile and then let people decide for themselves what's good enough.
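As an illustration of the two reporting styles debated in this thread, the sketch below computes both from one list of per-frame render times: the mean frame rate plus the 99th-percentile frame time, and the share of the run spent below a chosen FPS floor with a pass/fail verdict. It rests on assumptions that are not from the article: the `summarize` function and its field names are made up, the 60 FPS floor is an arbitrary default, the percentile uses the nearest-rank method, and "how much of the run" is interpreted as a share of elapsed time rather than of frame count.

```python
def summarize(frame_times_ms, fps_floor=60.0):
    """Summarize a benchmark run given per-frame render times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    total_ms = sum(frame_times_ms)
    n = len(frame_times_ms)

    # Mean FPS over the whole run: frames rendered divided by elapsed seconds.
    mean_fps = n * 1000.0 / total_ms

    # 99th-percentile frame time, nearest-rank method with integer math:
    # rank = ceil(0.99 * n), converted to a zero-based index.
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100
    p99_ms = ordered[rank - 1]

    # Share of elapsed time spent on frames slower than the FPS floor.
    slow_ms = sum(t for t in frame_times_ms if 1000.0 / t < fps_floor)
    pct_below = 100.0 * slow_ms / total_ms

    return {
        "mean_fps": mean_fps,
        "p99_frame_time_ms": p99_ms,
        "p99_fps": 1000.0 / p99_ms,
        "pct_time_below_floor": pct_below,
        "passes": pct_below == 0.0,
    }


if __name__ == "__main__":
    # Synthetic run: 98 frames at 10 ms and 2 stutter frames at 50 ms.
    run = [10.0] * 98 + [50.0] * 2
    for key, value in summarize(run).items():
        print(f"{key}: {value}")
```

The same data feeds both views, so a review could publish the percentile figures while readers apply whatever floor they personally consider playable.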

sonofgodfrey - Tuesday, July 5, 2022 - link

>Good luck getting consensus on what represents a desirable framerate.

You would need to do some research (blind A-B testing) to see what people can actually detect.

There are probably dozens of human factors PhD theses about this in the last 20 years.

I suspect that anything above 60 Hz is going to be the limit for most people (after all, a majority of movies are still shot at 24 FPS).

>You're missing the point. They test at low resolutions because those tend to be CPU-bound. This exaggerates the difference between different CPUs.

I can see your logic, but what I see is this:

1) Low resolution test is CPU bound: At several hundred FPS on some of these tests they are not CPU bound, and the few percent difference is no real difference.

2) Predictor of future performance: Probably not. Future games if they are going to push the CPU will use a) even more GPU offloading (e.g. ray-tracing, physics modeling), b) use more CPUs in parallel, c) use instruction set additions that don't exist or are not available yet (AVX 512, AI acceleration). IOW, your benchmark isn't measuring the right "thing", and you can't know what the right thing is until it happens.

mode_13h - Thursday, July 7, 2022 - link

> You would need to do some research (blind A-B testing) to see what people can actually detect.

Obviously not going to happen, on a site like this. Furthermore, readers have their own opinions of what framerates they want and trying to convince them otherwise is probably a thankless errand.

> I suspect that anything above 60 Hz is going to be the limit for most people

> (after all, a majority of movies are still shot at 24 FPS).

I can tell you from personal experience this isn't true. But, it's also not an absolute. You can't divorce the refresh rate from other properties of the display, like whether the pixel illumination is fixed or strobed.

BTW, 24 fps movies look horrible to me. 24 fps is something they settled on way back when film was heavy, bulky, and expensive. And digital cinema cameras are quite likely used at higher framerates, if only so they can avoid judder when re-targeting to 30 or 60 Hz targets.

> At several hundred FPS on some of these tests they are not CPU bound,

When different CPUs produce different framerates with the same GPU, then you know the CPU is a limiting factor to some degree.

> and the few percent difference is no real difference.

The point of benchmarking is to quantify performance. If the difference is only a few percent, then so be it. We need data in order to tell us that. Without actually testing, we wouldn't know.

> Predictor of future performance: Probably not.

That's a pretty bold prediction. I say: do the testing, report the data, and let people decide for themselves whether they think they'll need more CPU headroom for future games.

> Future games if they are going to push the CPU will use

> a) even more GPU offloading (e.g. ray-tracing, physics modeling),

With the exception of ray-tracing, which can *only* be done on the GPU, then why do you think games aren't already offloading as much as possible to the GPU?

> b) use more CPUs in parallel

That starts to get a bit tricky, as you have increasing numbers of cores. The more you try to divide up the work involved in rendering a frame, the more overhead you incur. Contrast that to a CPU with faster single-thread performance, and you know all of that additional performance will end up reducing the CPU portion of frame preparation. So, as nice as parallelism is, there are practical challenges when trying to scale up realtime tasks to use ever increasing numbers of cores.

> c) use instruction set additions that don't exist or are not available yet (AVX 512, AI acceleration).

Okay, but if you're buying a CPU today that you want to use for several years, you need to decide which is best from the available choices. Even if future CPUs have those features and future games can use them, that doesn't help me while I'm still using the CPU I bought today. And games will continue to work on "legacy" CPUs for a long time.

> IOW, your benchmark isn't measuring the right "thing",

> and you can't know what the right thing is until it happens.

Let's be clear: it's not *my* benchmark. I'm just a reader.

Also, video games aren't new and the gaming scene changes somewhat incrementally, especially given how many years it now takes to develop them. So, tests done today should have similar relevance in the next few years as what tests from a few years ago would tell us about gaming performance today.

I'll grant you that it would be nice to have data to support this: if someone would re-benchmark modern games with older CPUs and compare the results from those benchmarks to ones taken when the CPUs first launched.