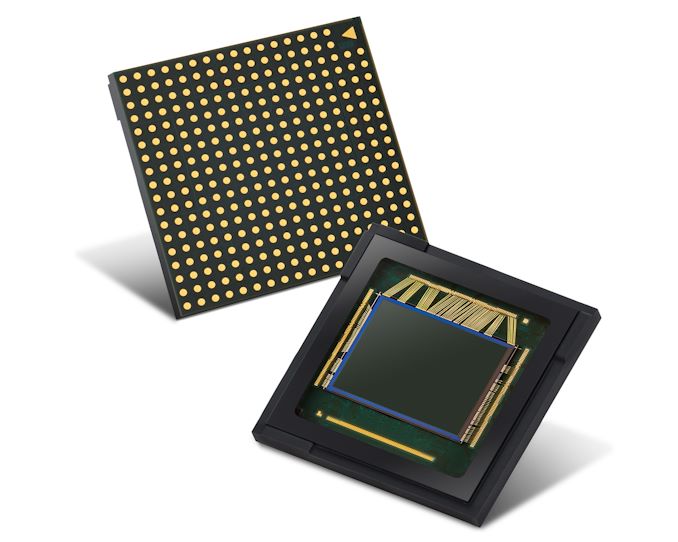

Samsung Announces New 50MP Dual-Pixel and Quad-Bayer ISOCELL Sensor

by Andrei Frumusanu on May 19, 2020 6:30 AM EST. Posted in: Mobile, Smartphones, Sensor, Samsung LSI, Camera Sensors, Samsung GN1

Samsung today announced a brand-new flagship sensor, the ISOCELL GN1. The new sensor appears to be a follow-up to the 108MP ISOCELL HMX and HM1 sensors that we’ve seen employed in Xiaomi devices and the Galaxy S20 Ultra.

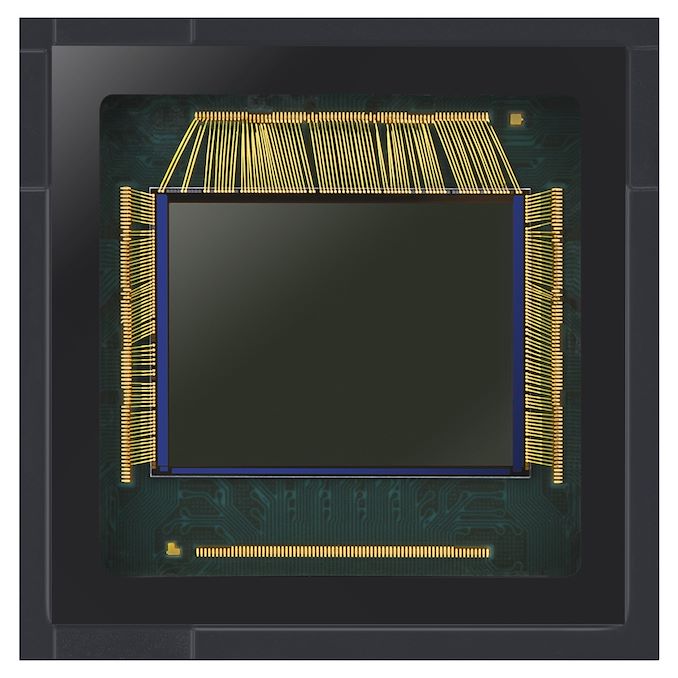

The big change in the new GN1 is that it uses a different optical formula from the existing higher-megapixel sensors. At a maximum of 50 megapixels it has a bit less than half the resolution; however, the per-pixel pitch grows from 0.8µm to 1.2µm.
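To put those two numbers in context, here is a rough back-of-the-envelope sketch. It assumes the nominal figures quoted above (108MP at 0.8µm and 50MP at 1.2µm) and treats active area as simply pixel count times pixel area, ignoring exact array dimensions; the function name is illustrative only.

```python
# Rough comparison using only the nominal figures quoted in the article.
# Assumption: active area ~= pixel count x pixel pitch squared.

def active_area_mm2(megapixels: float, pitch_um: float) -> float:
    """Approximate light-sensitive area in mm^2."""
    pixels = megapixels * 1e6
    pixel_area_um2 = pitch_um ** 2
    return pixels * pixel_area_um2 / 1e6  # 1 mm^2 = 1e6 um^2

hm1_area = active_area_mm2(108, 0.8)   # existing 108MP sensors
gn1_area = active_area_mm2(50, 1.2)    # new GN1
pixel_area_ratio = (1.2 / 0.8) ** 2    # per-pixel area gain

print(f"108MP @ 0.8um: {hm1_area:.1f} mm^2")
print(f" 50MP @ 1.2um: {gn1_area:.1f} mm^2")
print(f"per-pixel area ratio: {pixel_area_ratio:.2f}x")
```

Under these assumptions the two designs cover a similar total area (roughly 69 versus 72 mm²), while each GN1 pixel has about 2.25 times the area of a 0.8µm pixel.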

It seems that Samsung’s main rationale for the larger pixel pitch wasn’t just the increased per-pixel light-gathering ability, but rather the ability to re-introduce full-sensor dual-pixel phase detection. The existing 108MP sensors on the market use 2x1 on-chip-lens phase-detection autofocus pixels distributed across the sensor, and their autofocus performance has not been nearly as good as that of a dual-pixel PD solution. In theory, the GN1’s use of dual-pixel PD means that each pixel is made up of a pair of 0.6µm x 1.2µm photosites.

The GN1 is also the first sensor to pair the dual-pixel technology with a quad-bayer colour filter array, which Samsung calls Tetracell. In effect, the 50MP sensor will natively bin 2x2 pixels to produce a 12.5MP image with an effective pixel pitch of 2.4µm. Interestingly, Samsung also talks about providing software algorithms that can produce 100MP images by using information from each individual photodiode, which sounds like pixel-shift super-resolution techniques.
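The binning arithmetic can be sketched in a few lines. This is only an illustration, assuming that each 2x2 block under a quad-bayer filter shares one colour and is combined by a plain average; the actual on-sensor binning method is not described in the announcement, and `bin_2x2` and the sample values are hypothetical.

```python
# Illustrative 2x2 binning, assuming a simple average per block.
# Real sensors may sum charge or weight pixels differently.

def bin_2x2(raw):
    """Average each non-overlapping 2x2 block of a 2D list of pixel values."""
    rows, cols = len(raw), len(raw[0])
    if rows % 2 or cols % 2:
        raise ValueError("dimensions must be even")
    return [
        [
            (raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1]) / 4
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]

# Made-up 4x4 tile: four same-colour 2x2 blocks.
tile = [
    [10, 12, 40, 44],
    [14, 16, 48, 52],
    [80, 84, 20, 22],
    [88, 92, 24, 26],
]
binned = bin_2x2(tile)  # 2x2 result, one value per block

# The same arithmetic applied to the headline figures.
binned_megapixels = 50 / 4        # 2x2 binning quarters the pixel count
effective_pitch_um = 1.2 * 2      # binned pixel spans two pixels per side
photodiodes_millions = 50 * 2     # dual-pixel: two photodiodes per pixel

print(binned)
print(binned_megapixels, effective_pitch_um, photodiodes_millions)
```

The last three lines show where the 12.5MP, 2.4µm and 100MP figures come from: a quarter of the pixels, twice the pitch, and two photodiodes per pixel.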

The size of the sensor remains at a large 1/1.33” – in essence it’s most comparable to Huawei’s current 50MP sensor that is found in the P40 series phones – with the addition of Dual Pixel PD.

The sensor still supports 8K30 video recording, but we hope it’s able to achieve this with a smaller image crop compared to the current HM1 implementation in the S20 Ultra.

These new generation sensors are extremely challenging for vendors to properly use – besides the increased computational workload to actually use the full resolutions (the reduction from 108MP to 50MP will help there), there’s also the challenge of providing adequate optics that can take advantage of the resolution. It seems the GN1 here is a bit more reasonable in its demands, other than its sheer large size.

Source: Samsung Newsroom

68 Comments

Psyside - Wednesday, May 20, 2020 - link

"Have you ever zoomed into a smartphone photo of this type of resolution? The quality to do so simply isn't there - everything looks like blotchy garbage"

You have no clue, do you? The S20 64MP looks amazing.

s.yu - Thursday, May 21, 2020 - link

Your definition of "amazing" is severely flawed.

PaulHoule - Wednesday, May 20, 2020 - link

"Tack sharp" images that can be enlarged require getting quite a few things right: a narrow enough aperture for the depth of field to cover the target, and enough light to be able to run the sensor at low light sensitivity (high resolution) and still use a short enough exposure that you don't get blur from camera shake or subject motion.

That's why wedding photographers might have a $3000 lens and an external flash that weighs almost as much as the camera body. And it is part of the comparative advantage that L.A. has in the movie business, since L.A. has more usable light for photography than any spot on earth except the Sahara Desert (e.g. Tunisia, where some of the Star Wars movies were shot).

It takes more than just a sensor to get good results.

BedfordTim - Wednesday, May 20, 2020 - link

You have missed the point of 50MP and 108MP sensors. Both output a 12MP image. The sub-pixels are there for deBayering and single-shot HDR. Even though you have 4 or 9 sub-pixels per Bayer pixel you still get a much better result than with a single pixel.

s.yu - Thursday, May 21, 2020 - link

Theoretically yes, but the 40-50MP sensors are still far from pixel-for-pixel 12MP a la Foveon X3 (they should be very close on paper, since 12x4 is 48). I don't even think they match the larger 12MP Bayer sensors in most ILCs, like the a7S series.

And the 108MP that outputs 12MP in the S20U is on par with or slightly worse than the 40-50MP sensors after accounting for processing differences.

Then again it's basically a moot point to entirely rule out software in mobile imaging solutions.

ChrisTexan - Wednesday, May 20, 2020 - link

In addition to raw resolution, the article clearly states one of the benefits; in this case this sensor is a step forward by stepping back in 2 regards:

1. Larger pixel size - this means more light-gathering area which (all else equal) means either/both better low-light sensitivity (less noise in darker lighting) and faster/better speeds (less motion blur).

2. Aggregating pixels - as indicated clearly in the article, they are intending the option to combine multiple pixels (2x2 arrays) as one "larger" pixel. Effectively, this gives it a "native" resolution of 12.5MP, but with 4x the light-gathering area per pixel, quadrupling the sensor's low-light and/or speed (ISO) capabilities, again multiplying (several times over) its speed to shoot and/or lowering the noise threshold further.

The resulting picture therefore at 12.5MP in arrayed mode, can thus be MUCH more vivid potentially than a lower count. And compared to a "native" 50MP (or 108MP, etc), likely will have truer coloration, lower noise, basically a better picture "captured" by the sensor. AND the optical lensing requirements become less critical, as you aren't needing razor sharp clarity on each cell, you'll be receiving the average across 4 cells.

If shooting in "full pixel" mode, the tradeoffs will be, much more "detailed" pictures (for zooming in), at the expense of slower shooting (or higher motion blur risk), and much more "noise" at the pixel level.

In a cell phone, not sure how much of that matters, your point is valid about zooming in, although not for optical/sensor reasons. If the camera is saving the output as a lossy .jpg, ultimately, you will have color blocking and averaging, that when zoomed-in digitally, will make "max pixel res" pointless. If you could access the raw native pixel image, that would NOT be the case, but on cell phones that's really not an option (saving dozens of 250+MB images on a phone with 32GB total space, is obviously not going to be practical).

Bottom line, the "50MP, with quad-arrayed pixels" output, on a cell phone, is a GREAT application. The sensor itself should capture wonderful images (optics-dependent), and although you'll end up with a JPEG output, it'll probably be the best 12.5MP JPEG output from a cell phone possible. You can't "digitally" improve a 50MP native image post-capture, as each sensor cell will have been processed to "optimize it individually"; at best you can average the output data, and come up with a "similar" 4x4 matrix. But that won't match the clarity of the sensor-driven 4x4. So for both speed and output, this is a great solution.

All my opinions, worth every penny that was paid for them.

ChrisTexan - Wednesday, May 20, 2020 - link

Sorry, I misspoke: 50MP divided into 4x4 pixel arrays is not 12.5MP. However, I would assume their algorithm will divide it into overlapping arrays (so row 1, 1,2 and row 2 1,2 are one array, then row 1 2,3 and row 2 2,3 are an array, etc)... it just depends on the capture algorithm as to the "native" output (non-overlapping would be like a 6.25MP output).

willis936 - Tuesday, May 19, 2020 - link

Sensor fusion isn’t even tapped into yet, despite its demonstrated benefits in academia. The hardware is there, we already have 3 and 4 camera smartphones. Someday soon industry will wake up and actually properly implement sensor fusion. The rules based on old assumptions can be thrown out.

Valantar - Wednesday, May 20, 2020 - link

Laboratory conditions rarely translate to the real world - it's much easier to develop systems like that for controlled conditions than it is for real-world applications. There's no doubt that sensor fusion has promise for the future, but it'll still be a long, long time before we see flexible applications of it in the consumer space. The same goes for other promising tech like metamaterials, which have been "five years away" for a decade or more, and which have a realistic ETA for consumer uses better described in decades than in years.

willis936 - Wednesday, May 20, 2020 - link

Sensor fusion is primarily algorithms. There are no magic materials to figure out how to mass produce, only an FPGA to develop then spin to ASIC once they’re happy with the design. It hasn’t been done because demand hasn’t incentivized improving cameras by a significant amount, so why waste the R&D funds in this area?