The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST- Posted in

- Monitors

- Displays

- Asus

- NVIDIA

- G-Sync

- PG27UQ

- ROG Swift PG27UQ

- G-Sync HDR

When DisplayPort 1.4 Isn’t Enough: Chroma Subsampling

One of the key elements that even makes G-Sync HDR monitors possible – and yet still holds them back at the same time – is the amount of bandwidth available between a video card and a monitor. DisplayPort 1.3/1.4 increased this to just shy of 26Gbps of video data, which is a rather significant amount of data to send over a passive, two-meter cable. Still, the combination of high refresh rates, high bit depths, and HDR metadata pushes the bandwidth requirements much higher than DisplayPort 1.4 can handle.

All told, DisplayPort 1.4 was designed with just enough bandwidth to support 3840x2160 at 120Hz with 8bpc color, which requires 25.81Gbps of the 25.92Gbps available. Notably, this isn’t enough bandwidth for any higher refresh rates, particularly not 144Hz. Meanwhile, when using HDR paired with the P3 color space, where you’ll almost certainly want 10bpc color, there’s only enough bandwidth to drive it at 98Hz.

DisplayPort Bandwidth

| Standard | Raw | Effective |
| --- | --- | --- |
| DisplayPort 1.1 (HBR1) | 10.8Gbps | 8.64Gbps |
| DisplayPort 1.2 (HBR2) | 21.6Gbps | 17.28Gbps |
| DisplayPort 1.3/1.4 (HBR3) | 32.4Gbps | 25.92Gbps |
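To make the arithmetic behind these figures concrete, here is a rough Python sketch. It relies on two assumptions that are mine rather than anything stated above: that the gap between the raw and effective rates comes from 8b/10b line encoding (8 payload bits per 10 transmitted bits), and that only active pixels are counted. Real display timings also include blanking intervals, which is why the 25.81Gbps quoted for 4K at 120Hz is higher than the active-pixel number this prints. HBR2 is taken as 21.6Gbps raw, the value consistent with its 17.28Gbps effective rate. The function names are illustrative only.

```python
# Raw link rates in Gbps. 8b/10b encoding (an assumption stated in the
# lead-in) carries 8 payload bits in every 10 transmitted bits.
RAW_GBPS = {"HBR1": 10.8, "HBR2": 21.6, "HBR3": 32.4}
ENCODING_EFFICIENCY = 8 / 10


def effective_gbps(raw_gbps):
    """Payload bandwidth left after line-encoding overhead."""
    return raw_gbps * ENCODING_EFFICIENCY


def active_pixel_gbps(width, height, refresh_hz, bits_per_component, components=3):
    """Data rate of the visible pixels only; blanking is deliberately ignored."""
    bits_per_second = width * height * refresh_hz * bits_per_component * components
    return bits_per_second / 1e9


if __name__ == "__main__":
    budget = effective_gbps(RAW_GBPS["HBR3"])
    for refresh_hz, bpc in [(120, 8), (144, 8), (98, 10), (120, 10)]:
        need = active_pixel_gbps(3840, 2160, refresh_hz, bpc)
        verdict = "fits within" if need <= budget else "exceeds"
        print(f"{refresh_hz}Hz at {bpc}bpc: {need:.2f}Gbps {verdict} {budget:.2f}Gbps")
```

Even with blanking left out, 144Hz at 8bpc and 120Hz at 10bpc already overshoot the HBR3 budget, while 120Hz at 8bpc and 98Hz at 10bpc come in under it.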

As a result, for this first generation of monitors at least, NVIDIA has resorted to a couple of tricks to make a 144Hz 4K monitor work within the confines of current display technologies. Chief among these is support for chroma subsampling.

Chroma subsampling is a term that has become a little better known in the last few years, but the odds are most PC users have never really noticed the technique. In a nutshell, chroma subsampling is a means to reduce the amount of chroma (color) data in an image, allowing images and video data to either be stored in less space or transmitted over constrained links. I’ve seen it referred to as compression at some points, and while the concept is indeed similar, it’s important to note that chroma subsampling doesn’t try to recover lost color information, nor does it even intelligently discard color information, so it’s perhaps better thought of as a semi-graceful means of throwing out color data. In any case, the use of chroma subsampling is as old as color television; its use in anything approaching mainstream monitors, however, is much newer.

So how does chroma subsampling work? To understand chroma subsampling, it’s important to understand the Y'CbCr color space it operates on. As opposed to tried and true (and traditional) RGB – which stores the intensity of each color subpixel in a separate channel – Y'CbCr instead stores luma (light intensity) and chroma (color) separately. While the transformation process is not important, at the end of the day you have one channel of luma (Y) and two channels of color (CbCr), which add up to an image equivalent to RGB.
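For readers who do want to see the transformation, the following Python sketch shows one common form of it. The luma weights are the BT.709 coefficients, which is my assumption; the text above does not say which matrix the monitor or GPU actually uses. Inputs are gamma-encoded R'G'B' values in the range 0 to 1, and the function names are purely illustrative.

```python
# BT.709 luma weights (assumed; other standards use different values).
KR, KG, KB = 0.2126, 0.7152, 0.0722


def rgb_to_ycbcr(r, g, b):
    """Split gamma-encoded R'G'B' (0..1) into luma and two chroma differences."""
    y = KR * r + KG * g + KB * b
    cb = (b - y) / (2 * (1 - KB))  # blue-difference chroma, range -0.5..0.5
    cr = (r - y) / (2 * (1 - KR))  # red-difference chroma, range -0.5..0.5
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Invert rgb_to_ycbcr; lossless as long as no chroma was discarded."""
    r = y + 2 * (1 - KR) * cr
    b = y + 2 * (1 - KB) * cb
    g = (y - KR * r - KB * b) / KG
    return r, g, b


if __name__ == "__main__":
    print(rgb_to_ycbcr(1.0, 1.0, 1.0))  # white: full luma, (near) zero chroma
    print(ycbcr_to_rgb(*rgb_to_ycbcr(0.25, 0.5, 0.75)))  # round trip
```

A neutral gray or white pixel carries essentially no chroma at all, which hints at why the two color channels are the ones that can be thinned out.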

Chroma subsampling, in turn, is essentially a hack that exploits the human visual system. Humans are more sensitive to luma than chroma, so some chroma information can be discarded without significantly reducing the quality of an image.

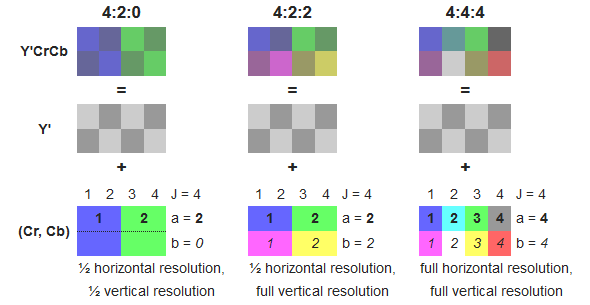

The technique covers a range of different patterns, but by far the most common patterns, in order of image quality, are 4:4:4, 4:2:2, and 4:2:0. 4:4:4 is a full chroma image, equivalent to RGB. 4:2:2 is a half chroma image that discards half of the horizontal color information, and requires just two-thirds (roughly 66%) of the data of 4:4:4/RGB. Finally, 4:2:0 is a quarter chroma image, which discards half of the horizontal and half of the vertical color information. In turn it achieves a full 50% reduction in the amount of data required versus 4:4:4/RGB.

Wikipedia: diagram on chroma subsampling
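The data savings quoted for each pattern fall out of the J:a:b notation directly, which the sketch below works through in Python. It assumes the usual reading of that notation (a block J pixels wide and 2 rows tall, with `a` chroma samples in the first row and `b` additional ones in the second). The `subsample` helper simply keeps every Nth chroma sample, which is only one possible approach; real encoders and display pipelines may filter or position samples differently. All names here are illustrative.

```python
from fractions import Fraction


def data_fraction(j, a, b):
    """Share of 4:4:4 data a J:a:b pattern needs, counted over a J x 2 block."""
    luma_samples = 2 * j              # luma is never subsampled
    chroma_samples = 2 * (a + b)      # Cb and Cr each keep a + b samples
    full_samples = 3 * 2 * j          # three full channels
    return Fraction(luma_samples + chroma_samples, full_samples)


def subsample(plane, h_step, v_step):
    """Naive decimation: keep every h_step-th column and v_step-th row."""
    return [row[::h_step] for row in plane[::v_step]]


if __name__ == "__main__":
    for pattern in [(4, 4, 4), (4, 2, 2), (4, 2, 0)]:
        print(pattern, data_fraction(*pattern))

    cb_plane = [[1, 2, 3, 4], [5, 6, 7, 8]]
    print(subsample(cb_plane, 2, 1))  # 4:2:2 keeps every other column
    print(subsample(cb_plane, 2, 2))  # 4:2:0 also drops every other row
```

Only the two chroma planes pass through `subsample`; the luma plane stays at full resolution, which is what preserves most of the perceived detail.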

In the PC space, chroma subsampling is primarily used for storage purposes. JPEG employs various modes to save on space, and virtually every video you’ve ever seen, from YouTube to Blu-rays, has been encoded with 4:2:0 chroma. In practice chroma subsampling is bad for text because of the fine detail involved – which is why PCs don’t use it for desktop work – but for images it works remarkably well.

Getting back to the matter of G-Sync then, the same principle applies to bandwidth savings over the DisplayPort connection. If DP 1.4 can only deliver enough bandwidth to get to 98Hz with RGB/4:4:4 subsampling, then going down one level, to 4:2:2, can free up enough bandwidth to reach 144Hz.

Users, in turn, are given a choice between the two options. When using HDR they can either stick with a 98Hz refresh rate and get full 4:4:4 chroma, or drop to 4:2:2 for 144Hz.
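As a back-of-the-envelope check on that tradeoff, this Python sketch compares the active-pixel data rate of 4K at 144Hz with 10bpc color under each chroma pattern against the 25.92Gbps effective rate of DisplayPort 1.4. It counts visible pixels only and ignores blanking, so actual link requirements are somewhat higher than what it prints; the names and structure are my own illustration rather than anything NVIDIA has published.

```python
ACTIVE_PIXELS = 3840 * 2160
DP14_EFFECTIVE_GBPS = 25.92

# Chroma samples per pixel for each chroma channel (Cb or Cr).
CHROMA_DENSITY = {"4:4:4": 1.0, "4:2:2": 0.5, "4:2:0": 0.25}


def bits_per_pixel(bits_per_component, pattern):
    """One luma sample per pixel plus Cb and Cr at the pattern's density."""
    return bits_per_component * (1 + 2 * CHROMA_DENSITY[pattern])


def active_pixel_gbps(refresh_hz, bits_per_component, pattern):
    """Data rate of visible pixels only; blanking is not included."""
    bits = ACTIVE_PIXELS * refresh_hz * bits_per_pixel(bits_per_component, pattern)
    return bits / 1e9


if __name__ == "__main__":
    for pattern in CHROMA_DENSITY:
        need = active_pixel_gbps(144, 10, pattern)
        verdict = "fits within" if need <= DP14_EFFECTIVE_GBPS else "exceeds"
        print(f"144Hz 10bpc {pattern}: {need:.2f}Gbps {verdict} {DP14_EFFECTIVE_GBPS}Gbps")
```

At 10bpc, 4:2:2 averages 20 bits per pixel instead of 30, so by this count 144Hz with 4:2:2 needs the same active-pixel rate as 120Hz with 8bpc RGB.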

In practice for desktop usage, most users are going to be running without HDR due to Windows’ shaky color management, so the issue is moot and they can run at 120Hz without any colorspace compromises. It’s in games and media playback where HDR will be used, and at that point the quality tradeoffs for 4:2:2 subsampling will be less obvious, or so NVIDIA’s reasoning goes. Adding an extra wrinkle, even on an RTX 2080 Ti few high-fidelity HDR-enabled games will be able to pass 98fps to begin with, so the higher refresh rate isn’t likely to be needed right now. Still, if you want HDR and access to 120Hz+ refresh rates – or SDR and 144Hz for that matter – then there are tradeoffs to be made.

On that note, it’s worth pointing out that to actually go past 120Hz, the current crop of G-Sync HDR monitors requires overclocking. This appears to be a limitation of the panel itself; with 4:2:2 subsampling there’s enough bandwidth for 144Hz even with HDR, so it’s not another bandwidth limitation that’s stopping these monitors at 120Hz. Rather, the purpose of overclocking is to push the panel above its specifications (something it seems plenty capable of doing), allowing the panel to catch up with the DisplayPort connection to drive the entire device at 144Hz.

Meanwhile on a quick tangent, I know a few people have asked why NVIDIA hasn’t used the VESA’s actual compression technology, Display Stream Compression (DSC). NVIDIA hasn’t officially commented on the matter, and I don’t really expect they will.

However, from talking to other sources, DSC had something of a rough birth. The version of the DSC specification used in DP 1.4 lacked support for some features manufacturers wanted, like 4:2:0 chroma subsampling, while DP 1.4 itself lacked a clear definition of how Forward Error Correction would work with DSC. As a result, manufacturers have been holding off on supporting DSC. To that end, the VESA quietly released the DisplayPort 1.4a specification back in April to resolve the issue, with the latest standard essentially serving as the “production version” of DisplayPort with DSC. Consequently, DSC implementation and adoption is just now taking off.

As NVIDIA controls the entire G-Sync HDR ecosystem, they aren’t necessarily reliant on common standards. Nonetheless, if DSC wasn’t in good shape to use in 2016/2017 when G-Sync HDR was being developed, then it’s as good a reason as any that I’ve heard for why we’re not seeing G-Sync HDR using DSC.

91 Comments


imaheadcase - Tuesday, October 2, 2018 - link

3840x1600 is the dell i mean.

Impulses - Tuesday, October 2, 2018 - link

The Acer Predator 32" has a similar panel as that BenQ and adds G-Sync tho still at a max 60Hz, not as well calibrated out of the box (and with a worse stand and controls) but it has dropped in price a couple times to the same as the BenQ... I've been cross shopping them for a while because 2 grand for a display whose features I may or may not be able to leverage in the next 3 years seems dubious.

I wanted to go 32" too because the 27" 1440p doesn't seem like enough of a jump from my 24" 1920x1200 (being 16:10 it's nearly as tall as the 16:9 27"ers), and I had three of those which we occasionally used in Eyefinity mode (making a ~40" display). I've looked at 40-43" displays but they're all lacking compared to the smaller stuff (newer ones are all VA too, mostly Philips and one Dell).

I use my PC for photo editing as much as PC gaming but I'm not a pro so a decent IPS screen that I can calibrate reasonably well would satisfy my photo needs.

Fallen Kell - Tuesday, October 2, 2018 - link

It is "almost" perfect. It is missing one of the most important things, HDMI 2.1, which has the bandwidth to actually feed the panel with what it is capable of doing (i.e. 4k HDR 4:4:4 120Hz). But we don't have that because this monitor was actually designed 3 years ago and only now finally coming to market, 6 months after HDMI 2.1 was released.

lilkwarrior - Monday, October 8, 2018 - link

HDMI 2.1 certification is still not done; it would not have been able to call itself a HDMI 2.1 till probably late this year or next year.

imaheadcase - Tuesday, October 2, 2018 - link

The 35 inch one has been canceled fyi. Asus rep told me when inquired about it just a week ago, unless in a week something has changed. Reason being panel is not perfect yet to mass produce.

That said, it's not a big loss, even if disappointing. Because HDR is silly tech so you can skip this generation

EAlbaek - Tuesday, October 2, 2018 - link

I bought one of these, just as they came out. Amazing display performance, but the in-built fan to cool the G-Sync HDR-module killed it for me.

It's one of those noisy 40mm fans, which were otherwise banned from PC setups over a decade ago. It made more noise than the entirety of the rest of my 1080 Ti-SLI system combined. Like a wasp was loose in my room all the time. Completely unbearable to listen to.

I tried to return the monitor as RMA, as I thought that couldn't be right. But it could, said the retailer. At which point I chose to simply return the unit.

In my case, these things will have to wait, till nVidia makes a new G-Sync HDR module, which doesn't require active cooling. Plain and simple. I'm sort of guessing that'll fall in line with the availability of micro-LED displays. Which will hopefully also be much cheaper, than the ridiculously expensive FALD-panels in these monitors.

imaheadcase - Tuesday, October 2, 2018 - link

Can't you just replace the fan yourself? I read around the time of release someone simply removed fan and put own silent version on it.

EAlbaek - Tuesday, October 2, 2018 - link

No idea - I shouldn't have to void the warranty on my $2000 monitor, to replace a 40mm fan.

madwolfa - Tuesday, October 2, 2018 - link

Is that G-Sync HDR that requires active cooling or FALD array?

EAlbaek - Tuesday, October 2, 2018 - link

It's the G-Sync HDR chip, apparently.