The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST

Posted in: Monitors, Displays, Asus, NVIDIA, G-Sync, PG27UQ, ROG Swift PG27UQ, G-Sync HDR

From G-Sync Variable Refresh To G-Sync HDR Gaming Experience

The original FreeSync and G-Sync were solutions to a specific and longstanding problem: fluctuating framerates would cause either screen tearing or, with V-Sync enabled, stutter and input lag. The result of variable refresh rate (VRR) has been a considerably smoother experience in the 30 to 60 fps range. An equally important benefit was compensating for dips and peaks over the wide ranges introduced with higher refresh rates like 144Hz. So both were very much tied to a single specification that directly described the experience, even if the numbers sometimes didn’t do the experience justice.
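To make the stutter problem concrete, here is a minimal sketch of frame pacing under a deliberately simplified model. It assumes a GPU that finishes frames at a perfectly steady 45 fps, a fixed 60Hz display with V-Sync that can only present a frame on the next refresh tick, and a VRR display whose range covers 45Hz. The function names are illustrative, and the model ignores buffering and GPU stalls, so treat it as an illustration rather than a description of how any driver actually behaves.

```python
from fractions import Fraction
import math


def vsync_present_times(frame_done_ms, refresh_hz):
    """Fixed refresh: a finished frame waits for the next refresh tick."""
    interval = Fraction(1000, refresh_hz)
    return [math.ceil(t / interval) * interval for t in frame_done_ms]


def vrr_present_times(frame_done_ms):
    """Variable refresh (assumed in range): the display refreshes when the frame is ready."""
    return list(frame_done_ms)


def gaps(times):
    """Time between consecutive frames reaching the screen."""
    return [b - a for a, b in zip(times, times[1:])]


# Hypothetical workload: frames completing at a steady 45 fps.
fps = 45
frame_done = [Fraction(1000, fps) * k for k in range(1, 8)]

vsync_gaps = gaps(vsync_present_times(frame_done, 60))
vrr_gaps = gaps(vrr_present_times(frame_done))

print("V-Sync @ 60Hz gaps (ms):", [float(round(g, 2)) for g in vsync_gaps])
print("VRR gaps (ms):          ", [float(round(g, 2)) for g in vrr_gaps])
```

Under these assumptions the V-Sync case alternates between one-tick and two-tick gaps even though the GPU is producing frames evenly, which is the judder described above, while the VRR case keeps every gap equal to the render interval.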

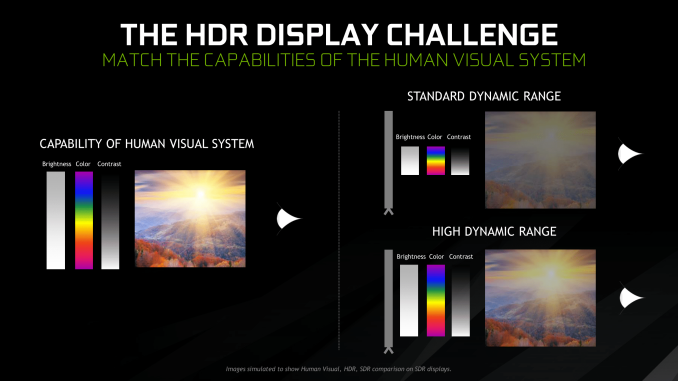

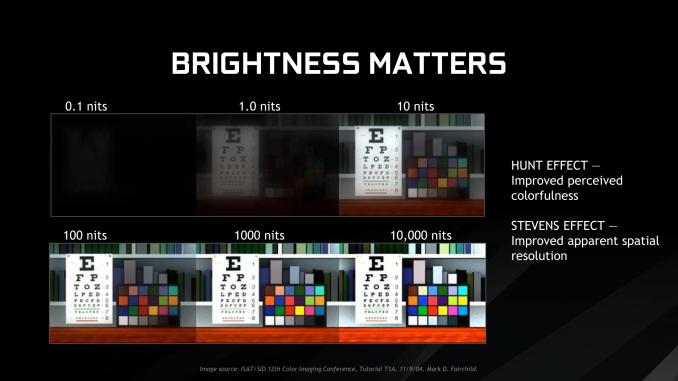

Meanwhile, HDR in terms of gaming is a whole suite of things that essentially allows for greater brightness, deeper blacks, and better/more colors. More importantly, this requires developer support for applications and production of HDR content. The end result is not nearly as static as VRR, as much depends on the game’s implementation, or in NVIDIA’s case, sometimes on Windows 10’s implementation. Done properly, even with simply better brightness, there can be perceived enhancements in colorfulness and spatial resolution, which are the Hunt effect and Stevens effect, respectively.

So we can see why both AMD and NVIDIA are pushing the idea of a ‘better gaming experience’, though NVIDIA is explicit about this with G-Sync HDR. The downside of this is that the required specifications for both FreeSync 2 and G-Sync HDR certifications are closed off and only discussed broadly, deferring to VESA’s DisplayHDR standards. Their situations, however, are very different. For AMD, their explanations are a little more open, and outside of HDR requirements, FreeSync 2 also has a lot to do with standardizing SDR VRR quality with mandated LFC, wider VRR range, and lower input lag. Otherwise, they’ve also stated that FreeSync 2’s color gamut, max brightness, and contrast ratio requirements are broadly comparable to those in DisplayHDR 600, though the HDR requirements do not overlap completely. And with FreeSync/FreeSync 2 support on Xbox One models and upcoming TVs, FreeSync 2 appears to be a more straightforward specification.

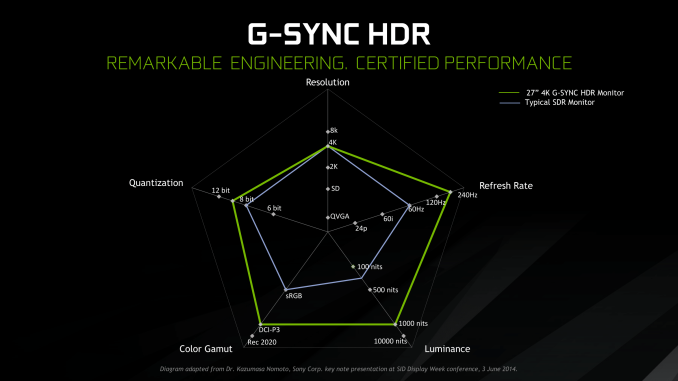

For NVIDIA, their push is much more general and holistic with respect to feature standards, and purely focused on the specific products. At the same time, they discussed the need for consumer education on the spectrum of HDR performance. While there are specific G-Sync HDR standards as part of their G-Sync certification process, those specifications are only known to NVIDIA and the manufacturers. Nor was much detail provided on minimum requirements outside of HDR10 support, peak 1000 nits brightness, and unspecified coverage of DCI-P3 for the 4K G-Sync HDR models; NVIDIA instead cited its certification process and deferred detailed capabilities to other certifications that G-Sync HDR monitors may have. In this case, those are the UHD Alliance Premium and DisplayHDR 1000 certifications for the Asus PG27UQ. Which is to say that, at least for the moment, the only G-Sync HDR displays are those that adhere to some very stringent standards; there aren't any monitors under this moniker that offer limited color gamuts or subpar dynamic contrast ratios.

At least with UHD Premium, the certification is specific to 4K resolution, so while the announced 65” 4K 120Hz Big Format Gaming Displays almost surely will be certified, the 35” curved 3440 × 1440 200Hz models won’t. Practically speaking, all the capabilities of these monitors are tied to the AU Optronics panels inside them, and we know that NVIDIA worked closely with AUO as well as the monitor manufacturers. As far as we know, those AUO panels are only coupled with G-Sync HDR displays, and vice versa. No other standardized specification was disclosed; NVIDIA only referred back to its own certification process and the ‘ultimate gaming display’ ideal.

As much as NVIDIA mentioned consumer education on the HDR performance spectrum, the consumer is hardly any more educated on a monitor’s HDR capabilities with the G-Sync HDR branding. Detailed specifications are left to monitor certifications and manufacturers, which is the status quo. Without a specific G-Sync HDR page, NVIDIA lists G-Sync HDR features under the G-Sync page, and while those features are specified as G-Sync HDR, there is no explanation of the full differences between a G-Sync HDR monitor and a standard G-Sync monitor. The NVIDIA G-Sync HDR whitepaper is primarily background on HDR concepts plus a handful of generalized G-Sync HDR details.

For all intents and purposes, G-Sync HDR is presented not as a specification or technology but as branding for a premium product family, and right now for consumers it is more useful to think of it that way.

91 Comments

DanNeely - Tuesday, October 2, 2018

Because I use the same system for gaming and general desktop use. My main display is my biggest and best monitor and thus used for both. At some hypothetical point in time, if I had a pair of high-end displays as both my center and one of my side displays, having different ones for gaming and desktop use might be an option. But because I'd still be using the other as a secondary display, not switching it off or absolutely ignoring it, I'd still probably want my main screen to be the center one for both roles so I'd have secondaries to either side; so I'd probably still want the same for both. If I were to end up with both a 4k display and an ultrawide, in which case the best one to game on would vary by title, it might become a moot point. (Or I could go 4 screens with small ones on each side and 2 copies of my chat app open, I suppose.)

Impulses - Tuesday, October 2, 2018

Still using the 32" Predator?

edzieba - Tuesday, October 2, 2018

"Why not get an equally capable OLED/QLED at a much bigger size for less money ?"

Because there are no feature equivalent devices.

TVs do not actually accept an update rate of 120Hz, they will operate at 60Hz and either just do double-pulse backlighting or add their own internal interpolation. QLED 'HDR' desktop monitors lack the FALD backlight, so are not HDR monitors (just SDR panels that accept and compress a HDR signal).

wolrah - Tuesday, October 2, 2018

A small subset of TVs actually do support native 120Hz inputs, but so far I've only seen that supported at 1080p due to HDMI bandwidth limitations.

For a while it was just a few specific Sony models that supported proper 1080p120 but all the 2017/2018 LG OLEDs do as well as some of the higher end Vizios and a few others.

resiroth - Monday, October 8, 2018

LG OLED TVs accept 120hz (and actually display true 120 FPS) but at a lower 1080p resolution. They also do 4K/60 of course. Not a great substitute though. If I were spending so much on a monitor I would demand it be oled though. Otherwise I’m spending 1500-1600 more than a 1440p monitor just to get 4K. I mean, cool? But why not go 2000-2500 more and get something actually unique, a 4K 144hz OLED HDR monitor that will be useful for 10 years or so.

This thing will be obsolete the second oled monitors come out. There simply is no comparison.

Impulses - Tuesday, October 2, 2018

Regardless of all the technical refresh rate limitations already pointed out, not everyone wants to go that big. 40" is already kinda huge for a desktop display; anything larger takes over the desk, makes it tough to have any side displays, and forces a lot more window management that's just not optimal for people that use their PC for anything but gaming.

I'd rather have a 1440p 165Hz 27" & 4K 32" on moving arms even than a single 4K 50"+ display with a lower DPI than even my old 1920x1200 24"...

lilkwarrior - Monday, October 8, 2018

To be fair, most would rather have a 4K ultra-wide (LG) or 1440p Ultrawide rather than multiple displays or a TV.

5K is an exception since more room for controls for video work & etc is a good compromise for some to the productive convenience of more horizontal real estate an ultrawide provides.

Most enthusiasts are waiting for HDMI 2.1 to upgrade, so this monitor & current TVs this year are DOA.

milkod2001 - Tuesday, October 2, 2018

This is nearly perfect. Still way overpriced for what it is. I'd like to get similar but at 32'' size, 100Hz would be enough, don't need this fancy useless stand with holographic if price can be much cheaper, let it be factory calibrated, good enough for bit of Photoshop and also games. All at $1200 max. Wonder how long we have to wait for something like that.

milkod2001 - Tuesday, October 2, 2018

Forgot to mention it would obviously be 4k. The closest appears to be: BenQ PD3200U but it is only 60Hz monitor and 2017 model. Would want something newer and with 100Hz.

imaheadcase - Tuesday, October 2, 2018

To be fair, for a mix of Photoshop and also games at that screen res, 60Hz would prob be enough since you can't push modern games that high for the MOST part. I have that Acer Z35P 120Hz monitor, and even a 1080Ti is hard pressed to get lots of games to max it out. That is at 3440x1440.

My 2nd "work" monitor next to it is the awesome Dell U3818DW (38 inches) @ 3840x1500 60Hz. I actually prefer the Dell for strategy games because of size, because FPS is not as huge a concern.

But playing Pubg on the Acer 120Hz will get 80-90fps